Cloudflare Unveils General Availability of Sandboxes and Cloudflare Containers, Revolutionizing AI Agent Workloads

Cloudflare has announced the general availability of its Sandboxes and Cloudflare Containers, a significant development unveiled during the company’s "Agents Week." This release introduces persistent, isolated Linux environments meticulously engineered for the demanding and evolving landscape of AI agent workloads. The strategic move aims to provide developers with robust, secure, and highly scalable infrastructure essential for building and deploying advanced AI applications.

The announcement marks a pivotal moment in Cloudflare’s expanding commitment to the artificial intelligence ecosystem, building upon a beta launch in June. The general availability version significantly enhances the platform with a suite of critical features, including secure credential injection, pseudo-terminal (PTY) support for interactive sessions, persistent code interpreters, real-time filesystem watching, snapshot-based session recovery, and an innovative active CPU pricing model that charges only for actual compute cycles consumed. According to Kate Reznykova and Mike Nomitch from the Cloudflare team, these advancements transform the Sandbox from a mere container into a comprehensive development environment, offering capabilities like a browser-connectable terminal, stateful code interpretation, background processes with live preview URLs, and a dynamic filesystem that emits change events in real time. Egress proxies for secure credential injection and a snapshot mechanism for nearly instant warm starts further solidify its position as a leading-edge solution.

The Evolution of AI Agent Infrastructure

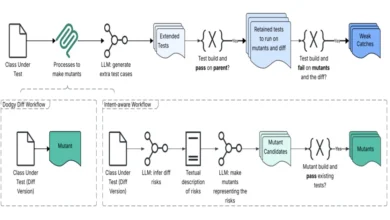

The proliferation of AI agents – autonomous software entities capable of understanding goals, planning actions, and executing tasks – has rapidly shifted the demands on underlying infrastructure. Traditional serverless functions or virtual machines, while powerful, often fall short of the specific requirements posed by these sophisticated agents. AI agents frequently require persistent state across interactions, secure access to external resources, the ability to run diverse tools and languages, and a development environment that mirrors a full operating system. These needs have spurred a new wave of infrastructure innovation, focusing on providing secure, reproducible, and scalable execution environments.

Before the advent of specialized solutions like Cloudflare’s Sandboxes, developers building AI agents often contended with significant challenges. Managing state across multiple invocations of an agent, ensuring the security of sensitive credentials when agents interact with external APIs, providing a rich development environment that allows agents to compile code or run background processes, and optimizing costs for often bursty and unpredictable workloads were common hurdles. The initial beta of Cloudflare’s Sandbox, launched in June, represented an early foray into addressing these issues, providing a basic containerized environment. However, the general availability release signifies a maturity that directly tackles these complexities head-on, offering a more complete and production-ready solution.

Key Innovations in General Availability

Cloudflare’s GA release is not merely an incremental update; it represents a fundamental rethinking of how AI agents interact with their execution environments. The core concept of a Cloudflare Sandbox revolves around a container that initiates on demand, automatically sleeps when idle, and reactivates upon receiving a new request. This "serverless container" model ensures efficiency and responsiveness. Critically, the same sandbox remains accessible from any location via a consistent ID, providing agents with a truly stateful environment that endures across multiple interactions, a capability vital for complex, multi-step agent workflows. The accompanying SDK, accessible through a TypeScript API, empowers developers with methods for executing commands, cloning repositories, managing files, and controlling processes within these isolated environments.
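The lifecycle described above (start on demand, sleep when idle, wake on the next request, same ID throughout) can be illustrated with a small in-memory model. This is only a sketch of the idea, not Cloudflare's SDK: `SandboxRegistry`, `SandboxRecord`, the idle timeout, and the `files` map standing in for disk state are all hypothetical names introduced for illustration.

```typescript
// Hypothetical in-memory model of the on-demand / sleep / wake lifecycle.
// None of these names come from Cloudflare's SDK.
type SandboxState = "running" | "sleeping";

interface SandboxRecord {
  id: string;
  state: SandboxState;
  lastActiveMs: number;
  files: Map<string, string>; // stands in for the sandbox's persistent disk
}

class SandboxRegistry {
  private readonly sandboxes = new Map<string, SandboxRecord>();
  private readonly idleTimeoutMs: number;

  constructor(idleTimeoutMs: number) {
    this.idleTimeoutMs = idleTimeoutMs;
  }

  // Returns the sandbox for this ID: created on first use, woken if asleep.
  get(id: string, nowMs: number): SandboxRecord {
    let record = this.sandboxes.get(id);
    if (record === undefined) {
      record = { id, state: "running", lastActiveMs: nowMs, files: new Map() };
      this.sandboxes.set(id, record);
    } else {
      record.state = "running";
      record.lastActiveMs = nowMs;
    }
    return record;
  }

  // Puts every sandbox that has been idle longer than the timeout to sleep.
  sleepIdle(nowMs: number): void {
    this.sandboxes.forEach((record) => {
      const idleMs = nowMs - record.lastActiveMs;
      if (record.state === "running" && idleMs > this.idleTimeoutMs) {
        record.state = "sleeping";
      }
    });
  }

  stateOf(id: string): SandboxState | undefined {
    return this.sandboxes.get(id)?.state;
  }
}

// Usage: state written in one interaction is still there after a sleep/wake cycle.
const registry = new SandboxRegistry(60000);
registry.get("agent-42", 0).files.set("notes.txt", "step 1 done");
registry.sleepIdle(120000); // idle for two minutes, so the sandbox sleeps
const resumed = registry.get("agent-42", 130000); // same ID wakes the same sandbox
```

The point of the model is that callers only ever hold an ID; whether the sandbox is currently running or asleep is an implementation detail hidden behind `get`.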

- Enhanced Security for Untrusted Workloads: Security is paramount when dealing with AI agents, particularly those that interact with external services or handle sensitive data. The GA release introduces a robust security framework, primarily centered around outbound Workers. These act as programmable egress proxies, intercepting all outbound requests originating from the sandbox and injecting credentials at the network layer. This architecture ensures that the AI agent itself never directly accesses or "sees" the sensitive tokens. Developers gain the flexibility to craft custom authentication logic for specific destination domains, enforce identity-aware policies per sandbox, and dynamically restrict network access as a task progresses. Cloudflare champions this as a zero-trust model, where no token is ever granted to the untrusted workload, significantly mitigating the risk of credential compromise. This approach is a critical differentiator, offering peace of mind for enterprises deploying agents that need to access internal systems or third-party APIs.

- Streamlined Developer Experience: Recognizing that the efficacy of any platform hinges on developer productivity, Cloudflare has invested heavily in enhancing the developer experience. The introduction of PTY (pseudo-terminal) support fundamentally transforms agent interaction. Previous agent systems often relied on request-response shell simulations, which could feel clunky and non-interactive. PTY support replaces this with real pseudo-terminal sessions proxied over WebSockets, offering a genuine, interactive command-line experience. This allows agents to run shell commands, interact with processes, and observe output much like a human developer would in a traditional terminal.

  Furthermore, persistent code interpreters maintain state across execution calls, mimicking the behavior of environments like Jupyter notebooks. Variables and imports survive between steps, enabling agents to build upon previous computations without re-initializing, which is crucial for iterative development and complex reasoning tasks. Background processes with live preview URLs allow agents to initiate development servers or other long-running tasks and then share a working link, facilitating real-time feedback and collaboration. The inclusion of filesystem watching, built on Linux’s inotify mechanism, empowers agents to react instantaneously to file changes, enabling dynamic workflows such as recompiling code or triggering further actions based on data modifications.

- Optimized Performance and Efficiency: Performance and cost-efficiency are critical considerations for scalable AI infrastructure. Cloudflare addresses these through snapshots and its innovative pricing model. Snapshots, slated for roll-out in the coming weeks, will preserve a container’s entire disk state, allowing for near-instant restoration. This capability unlocks powerful new patterns for agent development, such as forking sessions. Agents can boot multiple sandboxes from the same snapshot simultaneously, enabling parallel exploration of different approaches or hypotheses. Cloudflare illustrates the dramatic impact: cloning a repository, running npm install, and booting from scratch might take 30 seconds, while restoring from a backup takes a mere two seconds. This substantial reduction in startup time is invaluable for rapid experimentation and scaling.
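The egress-proxy pattern described under Enhanced Security can be sketched in a few lines. The sketch below is an assumption-laden illustration, not Cloudflare's outbound Worker API: `OutboundRequest`, `EgressDecision`, `handleEgress`, the `proxySecrets` table, and the example hostnames are all hypothetical. It shows the two behaviors the article attributes to the proxy: credentials are attached outside the sandbox, and the set of reachable hosts can be narrowed per call.

```typescript
// Hypothetical types and names; this is not Cloudflare's outbound Worker API.
interface OutboundRequest {
  host: string; // destination hostname
  path: string;
  headers: Record<string, string>;
}

type EgressDecision =
  | { action: "forward"; request: OutboundRequest }
  | { action: "block"; reason: string };

// Secrets live only on the proxy side, keyed by destination host.
// The sandboxed workload never receives these values.
const proxySecrets: Record<string, string> = {
  "api.example.com": "token-held-by-proxy",
};

function handleEgress(
  req: OutboundRequest,
  allowedHosts: ReadonlySet<string>,
): EgressDecision {
  // Network policy: anything outside the current allow-list is refused.
  if (!allowedHosts.has(req.host)) {
    return { action: "block", reason: `host ${req.host} is not allowed` };
  }

  // Copy headers, dropping any Authorization value the workload set itself.
  const headers: Record<string, string> = {};
  for (const name of Object.keys(req.headers)) {
    const value = req.headers[name];
    if (value !== undefined && name.toLowerCase() !== "authorization") {
      headers[name] = value;
    }
  }

  // Inject the real credential at the proxy, after the request left the sandbox.
  const token = proxySecrets[req.host];
  if (token !== undefined) {
    headers["Authorization"] = `Bearer ${token}`;
  }
  return { action: "forward", request: { ...req, headers } };
}
```

Passing `allowedHosts` on every call, rather than fixing it at startup, is what would let a controller shrink the allow-list as a task moves from, say, dependency installation to code execution.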

Architectural Differentiators: Edge and Two-Tier Approach

What truly sets Cloudflare’s offering apart in an increasingly crowded market is its inherent edge distribution across its vast global network. Leveraging its existing infrastructure, Cloudflare can provision Sandboxes closer to the data sources or end-users, significantly reducing latency and improving performance for geographically dispersed AI agent workloads. This edge-native approach ensures that agents can execute with minimal delay, a critical factor for real-time applications or those requiring rapid iteration.

Complementing this edge distribution is Cloudflare’s unique two-tier architecture. This strategy combines lightweight, V8 isolate-based Dynamic Workers (which also entered open beta during Agents Week) for ephemeral code execution with the full container-based Sandboxes. Dynamic Workers are ideal for short-lived, high-throughput tasks that don’t require a complete operating system. In contrast, Sandboxes provide a comprehensive environment for agents that necessitate a full Linux operating system, including tools like Git, Bash, development servers, and multi-language build capabilities. This allows developers to choose the right tool for the job: Dynamic Workers for simple, quick computations, and Sandboxes for complex, stateful, and resource-intensive agent tasks. This flexible architecture ensures optimal resource utilization and cost-efficiency across a spectrum of AI agent needs.
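The choice between the two tiers can be expressed as a simple routing rule. The following is a deliberately simplified sketch of the trade-off described above; the `TaskNeeds` fields and the `chooseTier` function are hypothetical and do not correspond to any Cloudflare API.

```typescript
// Hypothetical routing rule; the criteria are a simplification of the article's description.
type Tier = "dynamic-worker" | "sandbox";

interface TaskNeeds {
  needsShellOrGit: boolean;           // Bash, Git, compilers, package managers
  needsPersistentFilesystem: boolean; // state that must survive between steps
  needsLongRunningProcess: boolean;   // e.g. a development server
}

function chooseTier(needs: TaskNeeds): Tier {
  // Anything that requires a full Linux environment goes to a Sandbox;
  // short, stateless computations stay on the lightweight isolate tier.
  const needsFullOs =
    needs.needsShellOrGit ||
    needs.needsPersistentFilesystem ||
    needs.needsLongRunningProcess;
  return needsFullOs ? "sandbox" : "dynamic-worker";
}
```

A real router would likely also weigh cold-start latency and cost, which this sketch leaves out.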

Competitive Landscape and Market Positioning

The AI agent sandbox space has indeed become a hotbed of innovation, with several formidable players vying for market share. Each competitor brings a distinct approach to the table, highlighting the diverse needs within the AI development community:

- E2B: This platform utilizes Firecracker microVMs, providing dedicated kernels per session, emphasizing strong isolation and security. E2B reports significant adoption, including by a substantial portion of Fortune 500 companies, underscoring its appeal for enterprise-grade, highly secure workloads. Their focus on microVMs offers a strong isolation boundary, often favored in highly regulated environments.

- Daytona: Initially focused on development environments, Daytona pivoted to AI agent infrastructure in early 2025. They claim impressive sub-90ms sandbox creation times using Docker containers, prioritizing speed and agility. Their background in developer environments likely gives them an advantage in crafting highly usable and responsive interfaces for agents.

- Modal: Modal targets GPU-heavy Python workloads, leveraging serverless infrastructure optimized for machine learning tasks. Their platform is designed for developers needing scalable compute for training or inference, particularly when GPUs are involved, a niche not directly addressed by Cloudflare’s initial Sandbox offering, which focuses on CPU-intensive agent logic.

- Vercel: Vercel, a prominent player in front-end development and serverless functions, also launched its own Firecracker-based Sandbox in beta. This move signals a broader recognition among cloud providers of the critical need for specialized agent infrastructure. Vercel’s strength lies in its developer-centric approach and integration with popular web frameworks, which could extend to agent-powered applications.

Cloudflare’s differentiation lies in its global edge network and the aforementioned two-tier architecture. While competitors offer robust isolation (Firecracker microVMs) or rapid provisioning (Docker), Cloudflare’s ability to distribute these environments globally and offer a spectrum of compute options—from lightweight V8 isolates to full Linux containers—positions it uniquely. This allows Cloudflare to cater to a wider array of AI agent use cases, from simple API calls handled by Workers to complex coding and development tasks within Sandboxes, all with the benefits of low latency and high availability inherent to its edge infrastructure.

Real-World Adoption: The Figma Case Study

A testament to the readiness and robustness of Cloudflare’s Sandboxes is their adoption by Figma for production agent workloads. Alex Mullans, who leads AI and Developer Platforms at Figma, highlighted their use case in the announcement, stating, "Figma Make is built to help builders and makers of all backgrounds go from idea to production, faster. To deliver on that goal, we needed an infrastructure solution that could provide reliable, highly-scalable sandboxes where we could run untrusted agent- and user-authored code." This endorsement from a major design and collaboration platform underscores the critical need for secure, scalable, and reliable environments for running untrusted code, a core capability of AI agents. Figma’s use case likely involves agents assisting users in design processes, automating tasks, or even generating creative content, all of which demand a secure sandbox to execute potentially untrusted code generated by AI or users.

Pricing Model: Efficiency at Scale

Cloudflare has introduced an innovative active CPU pricing model for Sandboxes, directly addressing concerns about cost-efficiency. Instead of charging for provisioned but unused resources, this model bills only for the CPU cycles actually consumed. CPU time is priced at a highly competitive $0.00002 per vCPU-second. This pay-for-what-you-use approach is particularly beneficial for AI agent workloads, which often exhibit unpredictable and bursty consumption patterns, allowing developers to optimize costs significantly. The standard plan accommodates a substantial scale, supporting up to 15,000 concurrent "lite" instances, 6,000 "basic" instances, and over 1,000 larger instances, providing ample capacity for a wide range of applications from individual developers to large enterprises. The SDK is currently at version 0.8.9, and comprehensive documentation is now readily available, facilitating rapid developer adoption.
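To make the active CPU model concrete, the arithmetic can be worked through for a sample session. Only the $0.00002 per vCPU-second rate comes from the announcement; the one-hour session, the 10% CPU utilization, and the "provisioned" baseline billed at the same rate are assumptions chosen for illustration, and the sketch ignores any other charges (memory, disk, network) a plan may include.

```typescript
// Rate quoted in the article; everything else below is a hypothetical workload.
const PRICE_PER_VCPU_SECOND_USD = 0.00002;

// Active CPU billing: pay only for vCPU-seconds actually consumed.
function activeCpuCostUsd(activeVcpuSeconds: number): number {
  return activeVcpuSeconds * PRICE_PER_VCPU_SECOND_USD;
}

// Comparison baseline (assumed, not a Cloudflare plan): every wall-clock second
// of every allocated vCPU is billed at the same rate, busy or not.
function provisionedCpuCostUsd(wallClockSeconds: number, vcpus: number): number {
  return wallClockSeconds * vcpus * PRICE_PER_VCPU_SECOND_USD;
}

// Hypothetical agent session: one hour on 1 vCPU, with the CPU busy 10% of the time.
const sessionSeconds = 3600;
const sessionVcpus = 1;
const cpuUtilization = 0.1;

const activeCost = activeCpuCostUsd(sessionSeconds * sessionVcpus * cpuUtilization);
const provisionedCost = provisionedCpuCostUsd(sessionSeconds, sessionVcpus);
```

Under these assumptions the session consumes 360 active vCPU-seconds, so the active CPU charge is about $0.0072, against about $0.072 if the full hour were billed. The gap scales with how idle the agent is, which is why the model suits bursty workloads.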

Broader Implications for AI Development

The general availability of Cloudflare Sandboxes and Containers carries significant implications for the broader AI development landscape. Firstly, it democratizes access to sophisticated, stateful execution environments, lowering the barrier to entry for developers looking to build complex AI agents. The robust security features, particularly the zero-trust credential injection, will instill greater confidence in enterprises considering deploying AI agents that interact with sensitive internal systems or proprietary data. The optimized developer experience, with features like PTY support and persistent interpreters, will accelerate iteration cycles and foster innovation in agent design.

Furthermore, Cloudflare’s unique edge-native, two-tier architecture positions it as a critical infrastructure provider for the next generation of AI applications. As AI agents become more ubiquitous and their interactions require lower latency, the advantage of edge distribution will become increasingly pronounced. The ability to seamlessly switch between lightweight Workers and full Sandboxes provides unparalleled flexibility, allowing developers to tailor their infrastructure precisely to the demands of each agent task, thereby optimizing both performance and cost. This strategic offering from Cloudflare is poised to significantly impact how AI agents are developed, deployed, and scaled across various industries, pushing the boundaries of what autonomous software can achieve.

In conclusion, Cloudflare’s general availability of Sandboxes and Cloudflare Containers represents a significant leap forward in providing the foundational infrastructure necessary for the burgeoning field of AI agents. By combining robust security, enhanced developer experience, optimized performance, and a unique edge-distributed architecture with a cost-efficient pricing model, Cloudflare is well-positioned to become a cornerstone for developers building the intelligent, autonomous systems of tomorrow. The move solidifies Cloudflare’s strategic expansion beyond its traditional CDN and security offerings into the heart of modern cloud computing and artificial intelligence.