Securing Autonomous AI Agents on Kubernetes: A Comprehensive Framework for Trust and Control in Production Environments

The early hours of the morning often bring the most critical system alerts, and for many IT operations teams, 2 AM can be synonymous with chaos. Imagine a scenario where three hundred alerts simultaneously flood a company’s network, database, application, and security domains. The on-call engineer, roused from sleep, embarks on a frantic, multi-hour investigation. This typically involves firing up half a dozen dashboards, painstakingly correlating timestamps, formulating and testing hypotheses with log data, and often repeating the cycle until a root cause is finally pinpointed. In a recent incident, this arduous process revealed a firewall rule change that cascaded into application timeouts, ultimately exhausting database connection pools—a discovery made three hours into the crisis. This perennial "2 AM problem" underscores the inherent inefficiencies and human toll of manual incident response in increasingly complex digital infrastructures.

Modern enterprise environments, characterized by sprawling microservices architectures, hybrid cloud deployments, and continuous delivery pipelines, have made manual diagnostics an unsustainable practice. The sheer volume and velocity of operational data—logs, metrics, traces, and security events—overwhelm human operators. It is against this backdrop that autonomous AI agents are emerging as a transformative solution, promising to automate the entire triage process, from initial alert correlation to root cause analysis. Such an agent would seamlessly pull network telemetry, query application logs, examine database metrics, and cross-reference security events, all while authenticating with various systems and dynamically determining additional data sources to query based on its evolving understanding of the incident. Critically, these actions must occur within a production Kubernetes cluster, presenting a unique confluence of operational efficiency and profound security challenges.

Unlike traditional microservices or batch jobs with predictable dependencies and resource consumption, an AI agent’s operational footprint is inherently dynamic and non-deterministic. Its dependency graph, credential requirements, and execution path shift in real time based on the incident being investigated. This fundamental difference necessitates a re-evaluation of established Kubernetes security models. This article explores the infrastructure patterns developed to operate autonomous diagnostic agent systems on Kubernetes, focusing on isolation, robust secrets containment, a graduated trust framework, and specialized observability for a workload category that fundamentally challenges conventional security assumptions.

The Paradigm Shift: Why AI Agents Break Traditional Kubernetes Security

Most existing Kubernetes security models are predicated on the assumption that workloads possess clearly defined dependency sets, interact with a static list of external services, and consume resources predictably. Consequently, developing Role-Based Access Control (RBAC) roles, network policies, and resource limits typically amounts to identifying these boundaries and enforcing them. However, autonomous AI agents violate every one of these core assumptions, introducing a new layer of complexity and risk that traditional approaches are ill-equipped to handle.

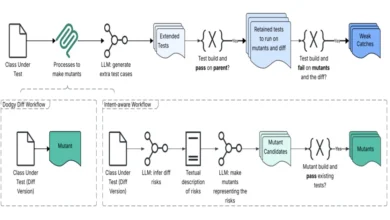

Firstly, AI Agents Create Dynamic External Dependencies. An autonomous AI agent does not operate with a fixed set of API calls. Its runtime behavior involves dynamically determining which data sources to query based on generated hypotheses. For instance, a simple investigation might only require contacting a log aggregation service. In contrast, a more complex incident could necessitate chaining data from network telemetry, application performance metrics, security event logs, and a topology graph. This dynamic nature renders it impossible to pre-define comprehensive network policies, as the agent’s needs are entirely emergent.

Secondly, AI Agents Require Multiple Domain Credentials. A cross-domain diagnostic agent, by its very nature, requires access to a diverse array of systems. This includes credentials for network monitoring tools, application performance management (APM) systems, log aggregation services, security event streams, topology services, and Large Language Model (LLM) inference APIs. Storing such a multitude of secrets within a single container significantly expands its potential blast radius. A compromise of this single container could grant an attacker access to a substantial portion of the operational stack.

Thirdly, AI Agents Have Unpredictable Resource Utilization. The resource demands of an AI agent can fluctuate wildly. A diagnostic agent investigating a minor connectivity problem might consume only 200 megabytes of RAM and complete its task in under two minutes. Conversely, a cascading failure spanning multiple infrastructure domains could demand 4 gigabytes of RAM to process hundreds of thousands of log entries, taking up to fifteen minutes. This fluid resource usage makes establishing static, predefined resource limits impractical, potentially leading to resource starvation or excessive over-provisioning.

Finally, AI Agents Have Nondeterministic Execution Flows. The execution path of an AI agent is driven by iterative reasoning: developing hypotheses, retrieving evidence, evaluating, and then refining or rejecting those hypotheses. This means that two investigations starting with identical problem statements can diverge dramatically in their execution, entirely dependent on the data revealed at each step. This non-deterministic behavior makes it impossible to define a "normal" or baseline execution pattern, which is crucial for traditional anomaly detection systems. Deploying an autonomous agent with the same security assumptions as a standard service often exposes these weak points not during design review, but during the very first, messy production incident.

The Kubernetes Job Pattern: Isolation as a Foundational Security Principle

A critical insight in securing these complex workloads has been treating each agent investigation as a separate Kubernetes Job, rather than a long-running Deployment. This approach, diverging from typical microservice deployments, offers significant advantages by default: resource isolation, failure isolation, clean state, and an investigation-scoped audit trail.

Initially, a standard Deployment with a replica count was used. However, this quickly proved problematic. A single investigation consuming excessive memory could impact all other investigations within the same pod. A pathological reasoning loop could monopolize shared resources, while a timed-out investigation awaiting an LLM API response could necessitate recovery for the entire engine process. Running each investigation as a distinct Kubernetes Job provided four crucial benefits:

- Resource Isolation: Each investigation receives its own container with dedicated CPU and memory allocations. This prevents a resource-intensive investigation from starving simpler ones. Resource limits can be tailored to the expected complexity of each job.

- Failure Isolation: If a job fails due to an out-of-memory error or an API timeout, only that specific investigation is affected. Other ongoing investigations remain undisturbed, and Kubernetes automatically records the failure.

- Clean State: Every job starts from a fresh container image, eliminating the risk of state leakage between investigations. This ensures no stale contexts, accumulated memory fragmentation, or leftover temporary files persist.

- Audit Trail by Default: Each job generates its own isolated log stream, complete with start and end timestamps and resource consumption metrics. This dedicated logging greatly simplifies debugging, allowing operators to pull logs for a specific investigation without sifting through interleaved output from a shared process.

The orchestration flow typically involves a control plane receiving an incident, creating a unique investigation_id, and then dynamically generating a Kubernetes Job specification. This Job is submitted to the cluster, executes the diagnostic agent, and upon completion, its results are stored, and the Job itself is cleaned up. The overhead of creating a new Job (typically 2-5 seconds for scheduling and container startup) is negligible compared to the overall investigation time, which can range from ninety seconds to fifteen minutes. The profound advantages of a Job’s isolation far outweigh this minimal startup cost. The Job boundary becomes the fundamental unit of scheduling, timeout, retry logic, auditability, and cleanup, allowing for more granular control and resilience.
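The orchestration flow above can be sketched as a function that turns an incident into a Job manifest. This is a minimal illustration rather than the system's actual control plane: the tier names, resource figures, namespace, service account name, and container arguments are all assumptions made for the example. The Job fields themselves (`backoffLimit`, `activeDeadlineSeconds`, `ttlSecondsAfterFinished`) are standard `batch/v1` settings.

```python
import uuid

# Hypothetical resource tiers. The figures loosely follow the 200 MB and 4 GB
# examples in this article, with headroom; they are not tuned recommendations.
RESOURCE_TIERS = {
    "simple": {"memory": "512Mi", "cpu": "500m", "deadline_seconds": 300},
    "multi-domain": {"memory": "4Gi", "cpu": "2", "deadline_seconds": 900},
}


def build_investigation_job(incident_id: str, tier: str, image: str,
                            namespace: str = "agent-investigations") -> dict:
    """Return a batch/v1 Job manifest for exactly one investigation."""
    limits = RESOURCE_TIERS[tier]
    # A fresh ID per investigation keeps logs and audit records separable.
    investigation_id = f"inv-{uuid.uuid4().hex[:12]}"
    labels = {"app": "diagnostic-agent", "investigation-id": investigation_id}
    resources = {"memory": limits["memory"], "cpu": limits["cpu"]}
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": investigation_id, "namespace": namespace,
                     "labels": labels},
        "spec": {
            "backoffLimit": 0,  # a failed investigation is not silently retried
            "activeDeadlineSeconds": limits["deadline_seconds"],  # hard timeout
            "ttlSecondsAfterFinished": 3600,  # Job is cleaned up after an hour
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "restartPolicy": "Never",
                    "serviceAccountName": "diagnostic-agent",  # assumed name
                    "containers": [{
                        "name": "agent",
                        "image": image,
                        # Hypothetical CLI flags for the agent container.
                        "args": ["--incident-id", incident_id,
                                 "--investigation-id", investigation_id],
                        "resources": {"requests": resources,
                                      "limits": resources},
                    }],
                },
            },
        },
    }
```

The returned dictionary could be serialized to YAML or submitted through a Kubernetes client. Setting requests equal to limits is one design choice among several; it gives each investigation a fixed, predictable allocation.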

Secrets Management: Containing the Blast Radius in a Multi-Domain World

The challenge of secrets management for autonomous AI agents qualitatively differs from traditional microservices due to the inherently wider blast radius of a compromised container. A cross-domain diagnostic agent, by design, needs access to LLM inference API keys, log aggregation credentials, network monitoring authentication tokens, cloud storage access keys, and potentially topology graph database and security event stream credentials. In such systems, a single agent container can span four or five distinct infrastructure domains. A compromise of this container grants an attacker extensive access across the operational stack.

To mitigate this critical risk, robust secrets management, typically implemented with tools like HashiCorp Vault, becomes indispensable. The key strategies employed include:

- Dynamic, Short-Lived Credentials: Agent Jobs authenticate with Vault at startup using their Kubernetes service account token. They receive credentials specifically scoped to the investigation’s duration. Once the job completes, these credentials automatically become invalid. This significantly reduces the window of exploitation for a compromised container, aligning with Zero Trust principles.

- Distinct Secret Paths per Domain: Implementing separate access paths for each domain (e.g., /agent/data/network-monitoring, /agent/data/log-aggregation, /agent/data/llm/inference) ensures granular control and distinct audit trails. This separation allows for clear tracking of when and by which investigation specific domain credentials were accessed.

- No Secrets in Git or Environment Variables at Rest: Leveraging a Vault agent injector automates the authentication, retrieval, injection, and revocation of secrets. This means that no sensitive information is ever stored in Git repositories or persisted as environment variables, preventing compromise even if container images or Git history are accessed.

- Rotating Credentials Without Redeployment: If an LLM API key or a monitoring service token needs to be rotated, the update is made directly in Vault. The next time an agent Job starts, it automatically picks up the new credentials, eliminating the need for Helm upgrades, ArgoCD syncs, or Deployment rolling restarts. This operational agility is crucial when managing credentials for numerous external services.
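As a rough illustration of the per-domain paths and injector-based delivery described in the list above, the following sketch builds the pod annotations that the Vault Agent Injector reads. The secret paths are copied as written in this article; the Vault role name, the domain keys, and the idea that the control plane picks the domain list when it creates the Job are assumptions made for the example.

```python
# Secret paths as they appear in this article. The dictionary keys double as
# the rendered file names under /vault/secrets/ and are hypothetical.
DOMAIN_SECRET_PATHS = {
    "network-monitoring": "/agent/data/network-monitoring",
    "log-aggregation": "/agent/data/log-aggregation",
    "llm-inference": "/agent/data/llm/inference",
}


def vault_annotations(domains: list[str], vault_role: str) -> dict[str, str]:
    """Build Vault Agent Injector annotations for the requested domains only."""
    annotations = {
        "vault.hashicorp.com/agent-inject": "true",
        "vault.hashicorp.com/role": vault_role,
        # Fetch secrets in an init container only, so that no sidecar keeps
        # the Job's pod running after the agent container exits.
        "vault.hashicorp.com/agent-pre-populate-only": "true",
    }
    for domain in domains:
        key = f"vault.hashicorp.com/agent-inject-secret-{domain}"
        annotations[key] = DOMAIN_SECRET_PATHS[domain]
    return annotations
```

These annotations would go on the Job's pod template metadata. Requesting only the domains an investigation is expected to touch keeps unused credentials out of the container, at the cost of deciding the domain set before the agent starts reasoning.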

There is a design choice between a unique Vault identity per investigation and a single agent identity with per-domain policies. The latter was initially chosen for its manageable operational complexity. However, as noted in "Lessons Learned and Refining the Approach," the forensic value of per-investigation identities for attribution is a strong argument for accepting the additional automation complexity.


The Four-Phase Trust Model: A Graduated Access Framework

Granting an autonomous agent production credentials from day one is a non-starter for most organizations, regardless of the agent’s perceived capability. Operational teams require an incremental approach to build confidence. A four-phase trust model provides a clear, observable path to gradually elevate an agent’s privileges, moving from observation-only operation to fully autonomous operation. This model mirrors best practices for introducing any high-autonomy system into a critical operational environment.

- Phase 1: Shadow Mode: The agent operates on production data, but its output is purely observational. Diagnostic results are reviewed by a human after the fact and compared against known outcomes. The agent has strictly read-only access to data sources and cannot initiate any actions. The primary question for this phase is: Does the agent produce useful and accurate results?

- Phase 2: Read-Only Assist: The agent functions as a recommendation engine for operational teams. During an incident, it runs automatically, presenting its hypothesis tree and evidence chain to the on-call engineer. The engineer can then accept, reject, or modify the agent’s diagnosis. The agent still lacks any write privileges. This phase asks: Will operational teams trust the agent’s reasoning?

- Phase 3: Limited Remediation: In this phase, the agent is permitted to execute low-risk remediation actions (e.g., restarting a pod), but only upon explicit operator approval. The agent’s write privileges are tightly scoped by specific Kubernetes RBAC roles and limited to specific API operations on designated resources. The core question here is: Can the agent take safe and effective actions?

- Phase 4: Autonomous L1: The agent autonomously resolves routine incidents without human intervention. Escalation occurs only if the agent’s confidence level falls below a defined threshold or if the required remediation falls outside its approved scope. All agent activity is meticulously logged for audit purposes. This final phase asks: Can we rely on the agent to manage routine incidents independently?

Transitions between these phases are strictly gated by operational evidence and predefined metrics, rather than arbitrary timelines. For example, moving from Phase 1 to 2 might require over 95% diagnostic accuracy across 100 shadow incidents. Phase 2 to 3 could demand over 90% operator agreement on diagnosis and an 80% acceptance rate of agent recommendations with minimal edits. The ultimate promotion to Phase 4 often requires over 99% successful completion of remediation actions and a rollback rate of less than 1%, with sign-off from both operations and platform leads. Crucially, a robust framework also includes demotion criteria, allowing for automatic rollback to a previous phase if safety or performance metrics degrade.
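The promotion gates above can be expressed as a small, testable policy function. The sketch below uses the example thresholds from this article; the class and field names are hypothetical. Because the article gives no demotion thresholds, the demotion check takes its limit from the caller.

```python
from dataclasses import dataclass


@dataclass
class PhaseMetrics:
    """Evidence gathered while the agent runs in its current phase."""
    incidents_evaluated: int = 0
    diagnostic_accuracy: float = 0.0        # fraction, 0.0 to 1.0
    operator_agreement: float = 0.0
    recommendation_acceptance: float = 0.0
    remediation_success: float = 0.0
    rollback_rate: float = 1.0              # pessimistic default
    ops_signoff: bool = False
    platform_signoff: bool = False


def may_promote(current_phase: int, m: PhaseMetrics) -> bool:
    """Return True if the evidence supports moving up one phase."""
    if current_phase == 1:
        return m.incidents_evaluated >= 100 and m.diagnostic_accuracy > 0.95
    if current_phase == 2:
        return (m.operator_agreement > 0.90
                and m.recommendation_acceptance >= 0.80)
    if current_phase == 3:
        return (m.remediation_success > 0.99 and m.rollback_rate < 0.01
                and m.ops_signoff and m.platform_signoff)
    return False  # Phase 4 is the highest phase; nothing to promote to


def should_demote(m: PhaseMetrics, max_rollback_rate: float) -> bool:
    """Demotion check with a caller-supplied (assumed) rollback limit."""
    return m.rollback_rate > max_rollback_rate
```

Keeping the gates in code means a promotion request can attach the metrics that justified it, which fits the review-by-pull-request process described later in the article.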

The infrastructural implication is that Kubernetes RBAC, Vault policies, and network policies must be parameterized per phase. The same agent codebase runs across all phases, but its permissions vary dramatically. This is where GitOps proves invaluable: all permission changes are version-controlled, auditable, and subject to rigorous review processes within Git.
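One way to picture per-phase parameterization is a function that derives the agent's RBAC Role from the trust phase. This is a sketch under assumptions: the resource list, role naming scheme, and the choice of pod deletion as the "restart a pod" action are illustrative, not the article's actual policy. The operator approval required in Phase 3 would be enforced outside RBAC.

```python
READ_VERBS = ["get", "list", "watch"]


def rbac_rules_for_phase(phase: int) -> list[dict]:
    """Return RBAC rules for a trust phase (1 to 4)."""
    # Phases 1 and 2 are strictly read-only.
    rules = [{"apiGroups": [""],
              "resources": ["pods", "pods/log", "events"],
              "verbs": list(READ_VERBS)}]
    if phase >= 3:
        # Deleting a pod lets its controller recreate it, which is how a
        # low-risk "restart" is modeled in this example.
        rules.append({"apiGroups": [""], "resources": ["pods"],
                      "verbs": ["delete"]})
    return rules


def build_role(phase: int, namespace: str) -> dict:
    """Build a namespaced Role manifest for the given trust phase."""
    if phase not in (1, 2, 3, 4):
        raise ValueError(f"unknown trust phase: {phase}")
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"name": f"diagnostic-agent-phase-{phase}",
                     "namespace": namespace},
        "rules": rbac_rules_for_phase(phase),
    }
```

In practice the same effect is often achieved with a Helm template and a `phase` value; the point is that the phase, not the code, determines what the agent may do.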

Observability for Non-Deterministic Workloads

Standard observability approaches, typically focused on request-level metrics and predictable service behavior, fall short for autonomous agent workloads. The fundamental unit of work for an AI agent is an iterative hypothesis-evaluation-refinement cycle, demanding a specialized monitoring strategy. While Prometheus, Grafana, and Loki form a standard cloud-native observability stack, the dashboards and metrics developed for AI agents are fundamentally different.

Key observability metrics include:

- Investigation-Level Metrics: Instead of request-level metrics, focus is on investigations initiated, completed, and failed. Metrics like average time to complete an investigation (as a function of domain complexity) and the number of hypothesis evaluation cycles per investigation are crucial. P99 latency is less relevant given the wide duration variance of investigations.

- LLM API Consumption as a First-Class Operational Metric: The agent’s reasoning directly depends on external LLM inference APIs, making the LLM provider’s rate limits and latency part of the critical path. Tracking the total number of LLM API calls per investigation, average call time, tokens consumed per call, error rates, and cost per call provides early warnings of pathological investigations.

- Investigation-Level Cost Attribution: The total cost of an investigation comprises LLM inference, Kubernetes compute, retrieval and tooling, and storage/egress. While a simple investigation might cost mere cents (e.g., 15-40 cents for 15k-25k input tokens), a complex multi-domain investigation could range from $1.50 to $4.00 for LLM inference alone, potentially reaching $2-6 when Kubernetes compute is included. With fifty investigations per day (roughly 1,500 per month), monthly costs can range from $3,000 to $9,000, which corresponds to an average of $2-6 per investigation, with the top 10% of complex investigations often accounting for over 60% of total spending. Without per-investigation attribution, these costs remain hidden within aggregated cloud bills.

- Reasoning Depth as a Health Signal: Tracking the number of hypothesis-evaluation iterations for each investigation helps detect if an agent is stuck in a reasoning loop. Implementing "circuit breakers" (e.g., escalating after 8 cycles for simple investigations or 15 for multi-domain ones) prevents excessive token consumption in low-probability searches.

- Per-Job Log Isolation: Isolating logs for each Job allows for precise debugging. When an investigation produces incorrect results, its specific logs reveal the exact reasoning chain, providing both a method for debugging operational issues and auditing the logic behind a result.
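The reasoning-depth circuit breaker mentioned in the list above can be sketched in a few lines. The cycle limits (8 and 15) come from this article; the class names and the decision to raise an exception as the escalation signal are assumptions.

```python
# Cycle budgets from the article: 8 for simple, 15 for multi-domain.
CYCLE_LIMITS = {"simple": 8, "multi-domain": 15}


class ReasoningLoopTripped(Exception):
    """Raised when an investigation exhausts its hypothesis-cycle budget."""


class ReasoningCircuitBreaker:
    def __init__(self, complexity: str):
        self.limit = CYCLE_LIMITS[complexity]
        self.cycles = 0

    def start_cycle(self) -> int:
        """Register a new hypothesis-evaluation cycle, or trip the breaker."""
        if self.cycles >= self.limit:
            raise ReasoningLoopTripped(
                f"escalate: {self.cycles} cycles completed, "
                f"limit is {self.limit}"
            )
        self.cycles += 1
        return self.cycles
```

The agent loop would call `start_cycle()` before each hypothesis evaluation and hand the investigation to a human when the exception is raised. The current cycle count is also a natural value to export as a metric.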

This dual focus on operational health and reasoning quality is paramount for deploying autonomous agents in production.
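Investigation-level cost attribution, as described in the metrics list above, can be prototyped with a simple record per investigation. The sketch below sums the four cost components the article names and computes how much of the total spend comes from the most expensive 10% of investigations. The field names are hypothetical, and the dollar figures in the usage example are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class InvestigationCost:
    investigation_id: str
    llm_usd: float
    compute_usd: float
    tooling_usd: float = 0.0
    storage_egress_usd: float = 0.0

    @property
    def total_usd(self) -> float:
        return (self.llm_usd + self.compute_usd
                + self.tooling_usd + self.storage_egress_usd)


def top_decile_share(costs: list[InvestigationCost]) -> float:
    """Fraction of total spend from the most expensive 10% of investigations."""
    totals = sorted((c.total_usd for c in costs), reverse=True)
    grand_total = sum(totals)
    if grand_total == 0:
        return 0.0
    top_n = max(1, len(totals) // 10)  # at least one investigation
    return sum(totals[:top_n]) / grand_total
```

For example, nine investigations at $0.30 each and one at $5.00 give a top-decile share of 5.00 / 7.70, or about 65%, which is the kind of skew that stays invisible in an aggregated bill.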

Deployment Pipeline: GitOps for Configuration-Driven Risk Management


For autonomous agent workloads, GitOps transcends being merely a best practice; it becomes a critical safety control because the primary risk often resides in configuration. When the trust phase, RBAC scope, Vault policy, network egress rules, and resource limits vary across development, staging, and production environments, the organization no longer manages a single deployment state but a matrix of security states. Four trust phases across three environments can yield twelve distinct permission configurations, each demanding version control, reviewability, and auditability.

For instance, promoting an agent from shadow mode to limited remediation in production is not a code deployment but a Helm values change and a Vault policy update, rigorously reviewed through a pull request. In this model, drift detection is no longer a convenience but a required safety mechanism. If an administrator were to broaden an agent’s RBAC policy during troubleshooting and forget to revert it, a GitOps tool like ArgoCD should automatically detect and reconcile this drift. For an agent-based workload, configuration drift is synonymous with blast-radius drift, making automated reconciliation essential for maintaining a consistent security posture.
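To make "configuration drift is blast-radius drift" concrete, the following sketch compares the RBAC rules declared in Git against the rules live in the cluster and reports any permissions that were broadened. It illustrates the idea only and is not how ArgoCD implements its diffing; the function names are hypothetical, and wildcard entries such as `*` are compared literally rather than expanded.

```python
def flatten_rules(rules: list[dict]) -> set[tuple[str, str, str]]:
    """Expand RBAC rules into (apiGroup, resource, verb) triples."""
    return {
        (group, resource, verb)
        for rule in rules
        for group in rule.get("apiGroups", [""])
        for resource in rule.get("resources", [])
        for verb in rule.get("verbs", [])
    }


def detect_rbac_drift(desired: list[dict],
                      live: list[dict]) -> dict[str, set]:
    """Compare Git-declared rules with live rules.

    'broadened' lists permissions present in the cluster but not in Git,
    which is the dangerous direction for an agent workload.
    """
    want, have = flatten_rules(desired), flatten_rules(live)
    return {"broadened": have - want, "narrowed": want - have}
```

A non-empty `broadened` set would correspond to the forgotten troubleshooting change described above, and is the case where automated reconciliation or an alert matters most.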

Lessons Learned and Refining the Approach

Reflecting on the implementation, several improvements stand out for future iterations. Firstly, implementing Vault identities per investigation from day one would be a priority. While initially considered more operationally complex, the forensic value of precisely attributing credentials to specific investigations for post-incident analysis far outweighs the management overhead, which can be mitigated with automation.

Secondly, building investigation-level cost attribution earlier would provide immediate benefits. The significant variance in per-investigation costs, particularly from LLM inference, means that operations teams can quickly identify which incident categories are cost-effective to automate and which should remain human-triaged, aligning with FinOps principles for AI workloads.

Finally, integrating the four-phase trust model into the infrastructure from the outset is crucial, rather than retrofitting it. This means defining phase-specific Helm values for each environment from the start, recognizing that promotion between trust phases is a distinct process from promotion between environments.

Conclusion: Navigating the Autonomous Future

Autonomous AI agents are not a distant concept; they are rapidly integrating into Kubernetes clusters, demanding a proactive evolution of existing security models. Organizations deploying these agents often begin with basic patterns—a Deployment, environment variables for API keys, and a hope for the best. However, bridging the gap between this baseline and a production-quality security posture requires a deliberate application of cloud-native building blocks: Kubernetes Jobs for robust isolation, HashiCorp Vault for dynamic secrets management, granular RBAC for access control, GitOps for auditability and drift detection, and specialized observability tools focused on non-standard, AI-centric metrics.

The more significant hurdle, however, is organizational rather than purely technical. Granting an autonomous agent access to production resources necessitates a robust trust framework that allows operations teams to incrementally build confidence. While the four-phase model provides a structured approach, the specifics are less important than establishing an explicit, observable progression. The critical first step for any organization is to audit current Kubernetes security assumptions against the unique properties of AI agents: dynamic dependencies, multi-domain credentials, unpredictable resource utilization, and non-deterministic execution. Identifying and addressing these gaps, then deploying agents initially in shadow mode, allows data-driven decisions to govern when and how trust is extended, paving the way for a secure and efficient autonomous operational future.