Confluent Revolutionizes Kafka Schema Management by Decoupling Schema IDs from Message Payloads

Confluent has introduced a groundbreaking new approach to managing schema metadata within Apache Kafka, enabling schema IDs to be stored in message headers rather than directly embedded within the message payload. This pivotal update is meticulously designed to significantly simplify data governance, empower organizations to adopt robust schema validation practices without necessitating sweeping changes to existing event formats, and enhance the overall agility of event-driven architectures. The feature leverages Kafka’s native header support, a capability often underutilized for such critical metadata, and seamlessly integrates with the widely adopted Confluent Schema Registry, a cornerstone for enterprises navigating the complexities of modern event streaming across microservices, analytics pipelines, and diverse data platforms.

The Evolving Landscape of Event-Driven Architectures and Data Governance

Apache Kafka, originally developed at LinkedIn and open-sourced in 2011, has evolved into the de facto standard for real-time data streaming, forming the backbone of countless modern data infrastructures. Its unparalleled ability to handle high-throughput, low-latency data streams has fueled the proliferation of event-driven architectures (EDAs), microservices, and real-time analytics across industries. However, this proliferation, while driving innovation, has also introduced significant challenges, particularly in managing the "data contracts" or schemas that define the structure and meaning of events flowing through these systems.

As organizations scale their use of Kafka, moving from a handful of topics to thousands, and involving dozens or hundreds of independent teams, the complexity of managing schema evolution becomes a critical bottleneck. Confluent, founded in 2014 by the creators of Kafka, recognized this early, introducing the Confluent Schema Registry. The Schema Registry provides a centralized, distributed storage layer for schemas, enabling producers to register schemas and consumers to retrieve them, thereby ensuring data compatibility and preventing deserialization errors. It became an indispensable tool for enterprises aiming to standardize, validate, and evolve their event data gracefully.

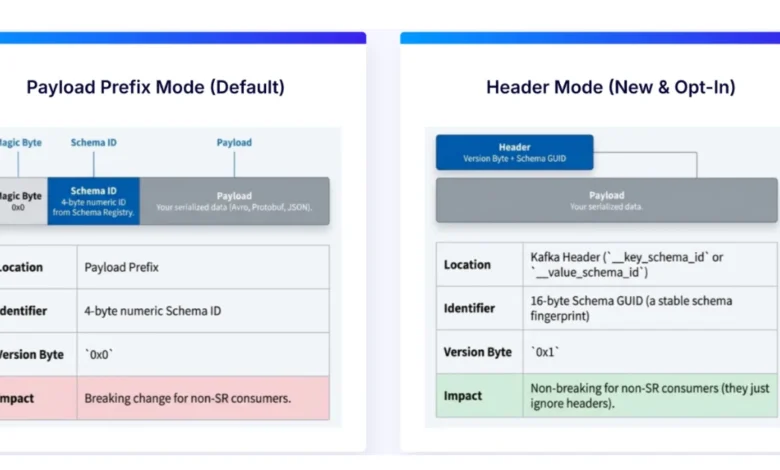

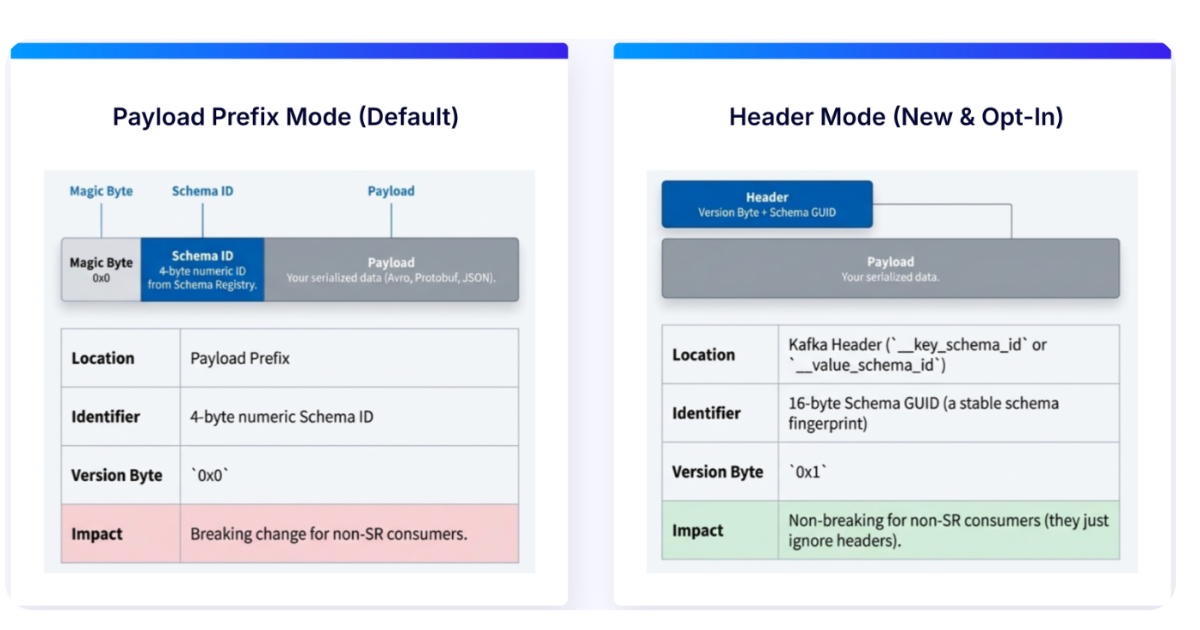

The Traditional Bottleneck: Schema IDs Embedded in Payloads

In traditional Kafka deployments leveraging Confluent’s widely used wire format, the schema ID—a unique identifier pointing to a specific schema version in the Schema Registry—was embedded directly at the beginning of the message payload. This mechanism, while effective in ensuring consumers could correctly deserialize events by fetching the corresponding schema, created a tight coupling between the schema metadata and the data itself.

This tight coupling, over time, presented several significant complications:

- Schema Evolution Complexity: When schema changes were introduced, even minor ones, the necessity to modify the payload format meant that all producers and consumers interacting with that specific event stream had to be updated and redeployed in a coordinated manner. This "stop-the-world" scenario often led to substantial coordination overhead, delayed deployments, and increased risk of breaking downstream systems.

- Rigidity in Data Formats: The embedded ID implied a specific wire format, which, while beneficial for Confluent’s ecosystem, could sometimes pose challenges for integrating with systems that expected pure Avro, Protobuf, or JSON Schema payloads without proprietary prefixes.

- Interoperability Hurdles: Downstream systems like data warehouses, data lakes, or machine learning platforms that consume Kafka data often prefer clean, self-contained payloads. The presence of schema IDs within the payload could necessitate extra parsing logic or pre-processing steps, adding complexity and potential performance overhead.

- Increased Coordination Overhead: In large, decentralized organizations adopting principles like Data Mesh, where domain teams own their data products, the tight coupling mandated extensive communication and synchronization between independent teams responsible for producing and consuming data, slowing down development cycles.

A Paradigm Shift: Decoupling with Kafka Headers

Confluent’s new approach fundamentally alters this dynamic by relocating schema identifiers from the message payload to Kafka record headers. Kafka headers, a feature available since Kafka 0.11.0, are essentially key-value pairs that can be attached to a Kafka record alongside its key, value, and timestamp. They are designed for auxiliary metadata that is not part of the core data payload but is crucial for message processing.

With this innovation, the message payload itself remains pristine and unchanged, containing only the actual business data in its pure Avro, Protobuf, or JSON Schema format. Consumers, upon receiving a message, first inspect its headers to retrieve the schema ID. Using this ID, they then fetch the corresponding schema from the Confluent Schema Registry at runtime. This process ensures that deserialization proceeds correctly while eliminating the tight coupling that characterized the previous method.

This architectural shift brings a cascade of benefits:

- Unchanged Payloads: The core data payload remains exactly as defined by the schema (e.g., pure Avro), making it inherently more portable and universally compatible.

- Reduced Dependence on Wire Formats: While Confluent’s client libraries still manage the header injection and retrieval, the internal payload format is now cleaner, reducing reliance on specific wire format implementations.

- Enhanced Flexibility: Schema resolution is now entirely decoupled from the payload’s content, making event streams significantly more flexible and easier to integrate across diverse downstream systems and tooling without proprietary parsing requirements.

- Support for Diverse Formats: The approach maintains full compatibility with industry-standard serialization formats like Avro, Protocol Buffers (Protobuf), and JSON Schema, ensuring broad applicability.

Expert Endorsements and Strategic Implications

The announcement has garnered significant attention from industry experts and Confluent leadership, highlighting the profound impact of this change.

Patrick Neff, CSTA Team Lead CEMEA at Confluent, emphasized the foundational role of schemas in his LinkedIn post, stating: "Schemas are the key enabler for unlocking the full value of your data." This underscores the broader strategic importance of robust schema governance in transforming raw data into actionable insights across streaming and analytics systems. By simplifying schema management, Confluent is directly addressing a core challenge in realizing the full potential of data assets.

Gunnar Morling, Technologist at Confluent, celebrated the technical elegance and practical benefits of the new feature in his LinkedIn post, describing it as "a massive quality of life improvement: payloads become valid, self-contained." This speaks directly to the improved developer experience and the operational efficiencies gained by having cleaner, more portable data structures. Developers can focus more on business logic and less on the intricacies of metadata management.

David Araujo, Director of Product Management at Confluent, further elaborated on the pragmatic adoption pathways, noting, "By moving schema IDs to headers, you can attach schemas to existing data in Kafka without touching payload formats." This highlights a crucial aspect: the feature supports incremental adoption, allowing organizations to introduce schema governance practices gradually without demanding large-scale, coordinated rewrites of existing producers and consumers. Schema IDs can be retroactively attached to existing event streams, enabling teams to slowly adopt stricter schema management while preserving backward compatibility.

Broader Impact and Analysis of Implications

The implications of this move extend across several critical dimensions of modern data architecture and operations:

1. Data Governance and Compliance:

Decoupling schema IDs empowers stronger, more agile data governance. By centralizing schema validation in the Schema Registry and making schema metadata easily accessible via headers, organizations can enforce data quality standards more effectively. This facilitates easier auditing of data contracts, improved data lineage tracking, and better support for regulatory compliance requirements (e.g., GDPR, CCPA) by ensuring transparent and well-defined data structures. The ability to evolve schemas independently reduces the risk of compliance breaches due to unmanaged data changes.

2. Enhanced Developer Experience and Productivity:

For developers, this change translates into significant "quality of life" improvements. The burden of coordinating schema changes across distributed teams is substantially reduced. Producers can evolve their data structures with greater autonomy, and consumers can adapt without requiring intimate knowledge of the producer’s serialization specifics beyond the schema ID. This fosters faster development cycles, reduces friction, and allows engineering teams to allocate more resources to core business innovation rather than infrastructure coordination.

3. Improved Interoperability with Downstream Systems:

Many downstream processing frameworks, analytics platforms, and machine learning systems prefer or even require pure, self-contained data formats. For instance, tools like Apache Flink, Spark, Presto, or various data warehousing solutions can now consume Kafka events with standard Avro, Protobuf, or JSON payloads directly, without needing to strip out Confluent-specific metadata. This simplification enhances data interoperability, streamlines data ingestion, and enables more consistent reuse of structured event data across diverse analytical and operational pipelines. It also makes Kafka data more amenable to being stored directly in object storage (like S3 or ADLS) as "pure" files, simplifying subsequent processing.

4. Scalability and Operational Efficiency:

At scale, the operational overhead of managing tight coupling can be immense. Decoupling reduces the blast radius of schema changes, making large-scale schema evolution a more manageable task. It supports the principles of Data Mesh, where independent domain teams own and manage their data products, by providing a robust mechanism for data contracts without imposing rigid cross-team dependencies. This leads to more resilient systems and greater operational efficiency.

5. Strategic Positioning for Confluent:

This innovation solidifies Confluent’s leadership in the event streaming space. By continuously addressing critical pain points for enterprise customers, Confluent reinforces the value proposition of its platform and its Schema Registry. It makes Confluent Cloud and Confluent Platform even more attractive for organizations looking to build robust, scalable, and governed event-driven architectures, providing a clear competitive advantage by simplifying a complex aspect of distributed data management.

Adoption Pathways and Future Outlook

While the benefits are substantial, the transition to this new header-based approach will naturally involve some considerations. Organizations may need to update their Kafka connectors and downstream tools that currently assume schema metadata is embedded within payloads. This suggests a transitional period where both approaches may coexist, depending on the readiness and update cycles of various components within the broader Kafka ecosystem.

The feature is currently available in Confluent Cloud, Confluent’s fully managed streaming service, ensuring that cloud customers can immediately leverage these advancements. It is also anticipated to be integrated into Confluent Platform, the self-managed version, with comprehensive Schema Registry support under existing licensing models, making it accessible to a wider array of enterprise deployments.

In conclusion, Confluent’s decision to move schema IDs to Kafka message headers represents more than just a technical tweak; it is a strategic architectural evolution that addresses a fundamental challenge in managing distributed data. By decoupling schema metadata from the data payload, Confluent is empowering organizations to build more flexible, scalable, and governable event-driven systems, accelerating innovation and unlocking the full potential of their real-time data assets. This development marks a significant stride towards simplifying the complexities of modern data architectures, paving the way for a more agile and interconnected data landscape.