Cloudflare Unveils Reference Architecture for Secure and Scalable Model Context Protocol Deployments Amid Rising AI Agent Security Concerns

Cloudflare has recently unveiled a comprehensive reference architecture designed to facilitate the secure and scalable deployment of Model Context Protocol (MCP) systems across enterprise environments. This strategic move positions centralized governance, robust remote server infrastructure, and stringent cost controls as indispensable prerequisites for the successful implementation of production-ready AI agent systems. The announcement arrives at a critical juncture, coinciding with intensified industry scrutiny over the security posture of MCP-based systems, a segment of AI technology rapidly gaining traction in the enterprise landscape.

The Emergence of AI Agents and the Critical Role of MCP

The rapid evolution of artificial intelligence has led to the proliferation of AI agents, sophisticated software entities capable of autonomously performing tasks, interacting with external tools, and processing information to achieve specific goals. These agents represent a paradigm shift in how enterprises leverage AI, moving beyond static models to dynamic systems that can interpret, reason, and act. From automating customer service interactions to orchestrating complex data analysis workflows, AI agents promise unprecedented levels of efficiency and innovation.

At the heart of enabling these autonomous capabilities lies the Model Context Protocol (MCP). MCP is an open standard specifically engineered to connect AI agents to the vast array of external tools, APIs, and data sources that define modern enterprise operations. It acts as an essential intermediary, allowing AI models, which are inherently data-driven and logic-focused, to interact with the real world – whether that means fetching up-to-date information from a CRM, initiating transactions in an ERP system, or manipulating files in a cloud storage service.

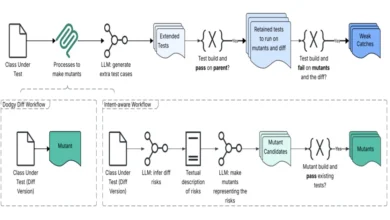

The architecture of MCP is distinct: it separates the agent-facing client, which handles the AI model’s requests, from backend servers responsible for interfacing with corporate resources. This abstraction is crucial; it allows agents to autonomously retrieve data and perform actions without the underlying AI model needing direct, low-level access to every corporate system. This design promotes modularity and flexibility, enabling AI agents to adapt to new tools and data sources with relative ease. However, this very abstraction, while beneficial for functionality, also introduces new trust boundaries and expanded attack surfaces between AI models, the tools they interact with, and sensitive enterprise systems.
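
To make the client/server split concrete, the sketch below shows roughly what an agent-facing client might send to a backend server when it wants a tool invoked. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The tool name `crm_lookup_account`, its arguments, and the helper function are hypothetical, chosen only for illustration.

```python
import json
from itertools import count

# JSON-RPC needs an id so responses can be matched to requests.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments; a real server advertises its own tools.
request = build_tool_call("crm_lookup_account", {"account_id": "A-1001"})
print(json.dumps(request, indent=2))
```

The client never touches the CRM directly. It only names a tool and passes arguments, and the backend server decides how, and whether, to carry out the request. That boundary is where the trust questions discussed in this article arise.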

AI agent development has accelerated rapidly over the last few years. Initial prototypes focused on task automation within controlled environments. As large language models (LLMs) became more capable, the vision for truly autonomous agents grew clearer, and the need for a standardized protocol to manage agent-tool interactions became evident, leading to the development and adoption of protocols such as MCP. This progression from experimental concepts to enterprise-grade solutions underscores the urgency for robust architectural frameworks, especially as organizations begin to integrate these agents into mission-critical operations.

Unpacking the Security Imperative: Vulnerabilities in Agent Systems

The enthusiasm surrounding AI agents and MCP has been tempered by a growing awareness of their inherent security vulnerabilities. Recent research and academic analyses have cast a stark light on the significant risks associated with MCP-based systems, prompting organizations to re-evaluate their deployment strategies. These risks are not merely theoretical; they encompass a range of attack vectors that could lead to substantial data breaches, operational disruptions, and reputational damage.

Among the most prominent threats is prompt injection, a sophisticated form of attack where malicious instructions are subtly embedded within user inputs or data processed by the AI agent. These injected prompts can override or manipulate the agent’s intended behavior, forcing it to perform unauthorized actions, reveal sensitive information, or even propagate further attacks. Given the autonomous nature of AI agents, a successful prompt injection can have far-reaching consequences, as the agent might inadvertently execute harmful commands across multiple connected systems.

Supply chain attacks also pose a significant danger to MCP deployments. Just as traditional software supply chains can be compromised, the complex ecosystem of tools, APIs, and data sources that AI agents interact with presents numerous points of vulnerability. If an external tool integrated with an MCP system is compromised, malicious code or data could be introduced, allowing attackers to gain unauthorized access to corporate resources or manipulate agent behavior. The interconnectedness enabled by MCP means that a weakness in one component can cascade across the entire system.

The issue of exposed or misconfigured servers is another critical concern. MCP backend servers, which bridge AI agents to corporate resources, often hold sensitive credentials or access points. If these servers are not properly secured, attackers can exploit misconfigurations, default credentials, or unpatched vulnerabilities to gain unauthorized access. Such compromises can directly lead to arbitrary code execution within the enterprise network, allowing attackers to run their own commands, install malware, or establish persistent backdoors.

Moreover, the expanded attack surface inherent in MCP’s architecture makes data exfiltration a heightened risk. A single, well-crafted malicious prompt, or a compromised backend tool, can trigger a chain of actions across multiple systems, potentially enabling an attacker to extract sensitive corporate data. Researchers have already demonstrated proof-of-concept attacks that achieve arbitrary code execution and data exfiltration across various MCP integrations, underscoring the severity of these vulnerabilities.

Academic analysis further suggests that these risks are not solely attributable to implementation flaws or oversight. Instead, many stem from fundamental, protocol-level design choices within MCP itself, which can inadvertently amplify the success rates of attacks in complex agent-tool systems. This insight highlights that merely patching individual vulnerabilities might not be sufficient; a more architectural and systemic approach to security is required. The inherent complexity of managing trust boundaries between diverse models, tools, and sensitive systems, coupled with the potential for a single prompt to initiate a cascade of actions, necessitates a robust security framework that goes beyond traditional IT security paradigms.

Cloudflare’s Comprehensive Solution: A Multi-faceted Approach

In response to these escalating security concerns and the imperative for enterprises to deploy AI agents safely, Cloudflare’s reference architecture offers a multi-faceted approach centered on security, control, and efficiency. The company’s strategy aims to mitigate the inherent risks of MCP deployments by fundamentally rethinking how these systems are architected and managed.

Centralized Governance and Remote Infrastructure:

Cloudflare strongly advocates for a departure from locally deployed MCP servers, which it identifies as a significant security liability. These local deployments often suffer from a lack of centralized oversight, relying on unvetted or inconsistently managed software configurations. Such environments are prone to security gaps, making them attractive targets for attackers.

In contrast, Cloudflare deploys its MCP servers remotely on its developer platform, where a dedicated team manages them centrally. This approach offers several advantages: consistent security policies, standardized configurations, regular patching, and continuous monitoring. By externalizing the infrastructure and centralizing its governance, enterprises can reduce their operational burden and strengthen their security posture against the varied threats facing AI agent systems. The underlying infrastructure also benefits from Cloudflare’s global network security capabilities, which provide layers of defense against DDoS attacks, bot threats, and other web-based threats.

Robust Authentication and Access Control:

A cornerstone of Cloudflare’s architecture is its comprehensive authentication and access control mechanism, built upon Cloudflare Access. This powerful tool integrates seamlessly with enterprise single sign-on (SSO) solutions, multi-factor authentication (MFA), and a range of contextual signals. These signals can include device posture (e.g., whether a device is compliant with security policies) and geographical location, providing a highly granular and adaptive layer of security.
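
As a rough illustration of how identity and contextual signals can combine into a single allow-or-deny decision, consider the following sketch. It is not Cloudflare Access's API or policy syntax. The `RequestContext` and `AccessPolicy` types and the `is_allowed` function are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    """Signals gathered about one incoming request (hypothetical shape)."""
    sso_authenticated: bool
    mfa_passed: bool
    device_compliant: bool
    country: str  # ISO 3166-1 alpha-2 code, e.g. "DE"


@dataclass(frozen=True)
class AccessPolicy:
    """Requirements an administrator attaches to one MCP server."""
    require_mfa: bool
    require_compliant_device: bool
    allowed_countries: frozenset


def is_allowed(ctx: RequestContext, policy: AccessPolicy) -> bool:
    """Deny unless identity and every required contextual signal check out."""
    if not ctx.sso_authenticated:
        return False
    if policy.require_mfa and not ctx.mfa_passed:
        return False
    if policy.require_compliant_device and not ctx.device_compliant:
        return False
    return ctx.country in policy.allowed_countries


policy = AccessPolicy(
    require_mfa=True,
    require_compliant_device=True,
    allowed_countries=frozenset({"US", "DE"}),
)
ctx = RequestContext(
    sso_authenticated=True, mfa_passed=True, device_compliant=False, country="US"
)
print(is_allowed(ctx, policy))  # the non-compliant device fails the policy
```

The checks are deny-by-default: a request passes only when every signal the policy requires is present, which is the behavior one would want in front of servers that hold corporate credentials.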

For users and administrators, MCP server portals provide a unified, intuitive interface for discovering and accessing authorized servers. Beyond mere access, these portals empower administrators to enforce critical security policies. This includes implementing data loss prevention (DLP) rules, which prevent sensitive information from being exfiltrated or misused by AI agents. Furthermore, the system allows for fine-grained tool exposure, meaning administrators can precisely control which specific tools and functionalities an AI agent can access, minimizing the attack surface and adhering to the principle of least privilege. This level of control is vital in preventing agents from interacting with unauthorized systems or performing actions beyond their intended scope.
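
The two portal-level controls described above, fine-grained tool exposure and DLP rules, can be sketched in a few lines. Everything here is an assumption made for illustration: the role names, the tool names, the `ALLOWED_TOOLS` table, and the single card-number pattern are hypothetical, and a production DLP engine would use far more robust detection than one regular expression.

```python
import re

# Hypothetical per-role allowlist: each agent role sees only the tools it needs.
ALLOWED_TOOLS = {
    "support-agent": {"crm_lookup_account", "kb_search"},
    "finance-agent": {"erp_get_invoice"},
}

# Illustrative DLP rule: 16-digit card-like numbers, optionally split by
# spaces or dashes into groups of four.
CARD_PATTERN = re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b")


def visible_tools(role: str, all_tools: list) -> list:
    """Return only the tools this role may see; unknown roles see nothing."""
    allowed = ALLOWED_TOOLS.get(role, set())
    return [tool for tool in all_tools if tool in allowed]


def redact(text: str) -> str:
    """Replace card-like numbers in a tool response before the agent sees it."""
    return CARD_PATTERN.sub("[REDACTED]", text)


catalog = ["crm_lookup_account", "erp_get_invoice", "kb_search"]
print(visible_tools("support-agent", catalog))
print(redact("card 4111 1111 1111 1111 on file"))
```

Filtering the tool list before the agent sees it applies least privilege at discovery time, so a manipulated agent cannot call a tool it was never shown. Redacting responses covers the other direction, limiting what sensitive data flows back into the model's context.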

Optimizing Resource Management and Cost Controls:

Beyond security, Cloudflare’s architecture also addresses the practical considerations of cost and resource management inherent in large-scale AI deployments. An "AI Gateway" plays a central role here. Positioned between MCP clients and the underlying large language models (LLMs), this gateway acts as a traffic controller.

The AI Gateway enables organizations to dynamically route requests across different model providers, offering flexibility and resilience. More importantly, it enforces strict usage limits and meticulously monitors token consumption at a granular, per-user level. This capability is crucial for managing operational costs, which can quickly escalate with extensive LLM interactions. By providing detailed insights into token usage, enterprises can optimize their model choices, allocate resources more efficiently, and prevent unexpected expenditures. This level of visibility and control transforms potential cost sinks into manageable, predictable operational expenses.
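
A minimal sketch of the two gateway behaviors described above, per-user token limits and routing across providers, might look like the following. This is not Cloudflare's AI Gateway API. The `GatewaySketch` class, the provider names, and the round-robin routing strategy are assumptions chosen to keep the example small.

```python
from collections import defaultdict


class TokenBudgetExceeded(Exception):
    """Raised when a request would push a user past their token limit."""


class GatewaySketch:
    """Hypothetical gateway: tracks tokens per user and rotates providers."""

    def __init__(self, per_user_limit: int, providers: list):
        self.per_user_limit = per_user_limit
        self.providers = providers
        self.usage = defaultdict(int)  # user -> tokens consumed so far
        self._next = 0                 # index for round-robin routing

    def route(self, user: str, estimated_tokens: int) -> str:
        """Charge the user's budget, then pick the provider for this request."""
        if self.usage[user] + estimated_tokens > self.per_user_limit:
            raise TokenBudgetExceeded(f"{user} would exceed {self.per_user_limit} tokens")
        self.usage[user] += estimated_tokens
        provider = self.providers[self._next % len(self.providers)]
        self._next += 1
        return provider


gateway = GatewaySketch(per_user_limit=1000, providers=["provider-a", "provider-b"])
print(gateway.route("alice", 600))
print(gateway.route("bob", 600))
try:
    gateway.route("alice", 600)  # alice has only 400 tokens left
except TokenBudgetExceeded as err:
    print("blocked:", err)
```

Because the budget check runs before the request is forwarded, an over-limit call is rejected without incurring any model cost, and the `usage` table doubles as the per-user consumption record that cost reporting would draw on.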

Introducing "Code Mode" for Enhanced Efficiency and Security:

Recognizing the growing complexity of defining and managing MCP tool definitions, Cloudflare has introduced "Code Mode." Traditional approaches often involve exposing every single API operation of a tool to the AI model, which can lead to a bloated context window, increased token usage, and a larger attack surface.

Code Mode collapses these extensive tool interfaces into a small set of dynamic entry points. AI models can then discover and invoke tools on demand, fetching only the information and operations they need at the moment they need them. Cloudflare reports that this approach can reduce token usage by 99.9%. Such a reduction eases context window limitations, making AI agents more efficient and better able to handle complex tasks. It may also improve security, because the model is exposed to less information about internal systems and operations at any given time, which leaves fewer opportunities for prompt injection and other forms of manipulation.
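
The general idea of replacing a large tool catalog with a few on-demand entry points can be sketched as follows. This is an illustration of the concept only, and Cloudflare's actual Code Mode may work quite differently. The catalog contents, the `search_tools` and `call_tool` entry points, and the use of JSON character counts as a rough stand-in for tokens are all assumptions.

```python
import json

# Hypothetical catalog: 200 API operations that would otherwise all be
# described to the model up front.
CATALOG = {
    f"records_{op}_{i}": {
        "description": f"{op} record type {i}",
        "parameters": {"id": "string"},
    }
    for op in ("create", "read", "update", "delete")
    for i in range(50)
}


def search_tools(query: str, limit: int = 5) -> list:
    """Entry point 1: return definitions only for tools matching the query."""
    needle = query.lower()
    matches = [
        {"name": name, **spec}
        for name, spec in CATALOG.items()
        if needle in name.lower() or needle in spec["description"].lower()
    ]
    return matches[:limit]


def call_tool(name: str, arguments: dict) -> dict:
    """Entry point 2: invoke a tool by name (dispatch is stubbed out here)."""
    if name not in CATALOG:
        raise KeyError(f"unknown tool: {name}")
    return {"tool": name, "arguments": arguments, "status": "ok"}


# What the model would see up front under each approach.
ENTRY_POINTS = [
    {"name": "search_tools", "description": "Find tools matching a query."},
    {"name": "call_tool", "description": "Invoke a tool by name."},
]
print("full catalog chars:", len(json.dumps(CATALOG)))
print("entry points chars:", len(json.dumps(ENTRY_POINTS)))
print([tool["name"] for tool in search_tools("read", limit=3)])
```

The up-front cost of the two entry points stays constant no matter how many operations the catalog holds, whereas listing every operation grows with the catalog. The savings any real deployment sees will depend on its tools and tokenizer, so the character counts here should not be read as reproducing the figure Cloudflare reports.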

Industry Perspectives and the Evolving AI Governance Landscape

While Cloudflare’s architectural controls directly address immediate concerns around security and cost, industry analysts offer a broader perspective on the role of protocols like MCP within the overarching architecture of agent systems. Forrester, a leading research and advisory firm, emphasizes a critical distinction: protocols such as MCP are often mistakenly perceived as comprehensive governance layers. In reality, they function more as transport or interoperability mechanisms, akin to Remote Procedure Call (RPC) systems or messaging queues, rather than sophisticated policy engines.

This distinction holds significant implications as enterprises increasingly move towards implementing centralized control layers for their AI agent deployments. Recent research, also highlighted by Forrester, suggests that crucial aspects like governance, observability, and policy enforcement are emerging as a separate "control plane" concern within modern agent architectures. This control plane is envisioned as sitting conceptually above both the tool integration and orchestration layers, providing a holistic framework for managing AI agent behavior and interactions.

In this context, Cloudflare’s approach, with its emphasis on centralized management, robust authentication via Cloudflare Access, and granular policy enforcement through MCP server portals, can be seen as part of this wider movement toward externalizing control. It shows in practical terms how an organization can build out elements of an agent control plane, providing the infrastructure and services that enable secure and governed agent operations. However, it also underscores that while Cloudflare offers powerful tools for secure MCP deployment, the ultimate responsibility for comprehensive AI governance, policy definition, and ethical deployment resides with the enterprise. The protocol facilitates communication, but the control plane dictates behavior and ensures compliance.

Broader Implications for Enterprise AI Adoption

Cloudflare’s initiative marks a significant step forward in the journey toward mainstream enterprise adoption of AI agent systems. It acknowledges that for these powerful, autonomous technologies to move beyond experimental phases into production-critical roles, they must be underpinned by robust security, governance, and cost-management frameworks.

The shift towards production-ready AI agent systems necessitates a departure from ad-hoc deployments. Enterprises are increasingly realizing that simply integrating an LLM with a few tools is insufficient. A holistic approach is required, one that considers the entire lifecycle of an AI agent, from its initial deployment to its ongoing operation, monitoring, and eventual decommissioning. This includes rigorous security audits, continuous vulnerability management, and clear lines of responsibility for agent behavior.

The critical role of robust security and governance frameworks cannot be overstated. As AI agents gain more autonomy and access to sensitive data and systems, the potential for misuse, errors, or malicious exploitation escalates. Solutions like Cloudflare’s provide essential building blocks for mitigating these risks, offering enterprises a blueprint for establishing secure perimeters, controlling access, and monitoring agent activities. This helps in building trust in AI systems, which is paramount for their widespread acceptance and integration into core business processes.

Furthermore, the need for a holistic approach extends beyond security to encompass operational efficiency and cost-effectiveness. The "AI Gateway" and "Code Mode" functionalities exemplify how thoughtful architectural design can simultaneously enhance security (by reducing attack surface and context bloat) and optimize resource utilization. This integrated thinking is crucial for making AI agent deployments economically viable and sustainable at scale.

Looking ahead, the landscape of AI agent standards and security protocols is in continuous evolution. As more enterprises adopt these technologies, the industry will likely see further refinements to protocols like MCP, as well as the emergence of new standards and best practices for agent governance. Cloudflare’s contribution provides a timely and practical example of how a major infrastructure provider is addressing these challenges, paving the way for a more secure, efficient, and controlled future for autonomous AI in the enterprise. The conversation around AI security is no longer solely about protecting data at rest or in transit, but about securing intelligent, autonomous entities that interact dynamically with the digital world.