AI in Wedding Planning: A Double-Edged Sword for Modern Couples

The landscape of wedding planning is undergoing a significant transformation, with Artificial Intelligence (AI) emerging as a surprisingly prevalent tool for couples navigating the intricate path to their big day. Data from The Knot’s Real Weddings Study indicates that over one-third of engaged couples are now leveraging AI in some capacity to streamline their wedding preparations. This technological integration, while offering potential efficiencies, also presents a nuanced picture of its effectiveness, particularly when applied to the foundational and often most critical decision: selecting a venue.

For many couples, the venue represents the cornerstone of their wedding. Its availability dictates the wedding date, its restrictions can limit vendor choices, and its cost is typically the largest single expenditure in the wedding budget, often rivaled only by catering and beverages. The urgency to secure a suitable venue is amplified by the rapid pace at which desirable locations are booked. In the current wedding market, popular venues can have waiting lists extending several years, with some couples finding their preferred dates unavailable until as late as 2028, underscoring the critical need for early and decisive action.

One couple’s personal experience highlights the volatile nature of venue bookings. After having already secured some vendors, their meticulously chosen venue unexpectedly cancelled all event bookings, including their wedding, due to financial difficulties. This abrupt cancellation necessitated a frantic search for an alternative, transforming an exciting planning phase into a stressful ordeal. Finding a new venue that not only aligned with their budget and aesthetic preferences but was also available on their pre-booked dates presented a formidable challenge, even with a significant portion of their planning already completed.

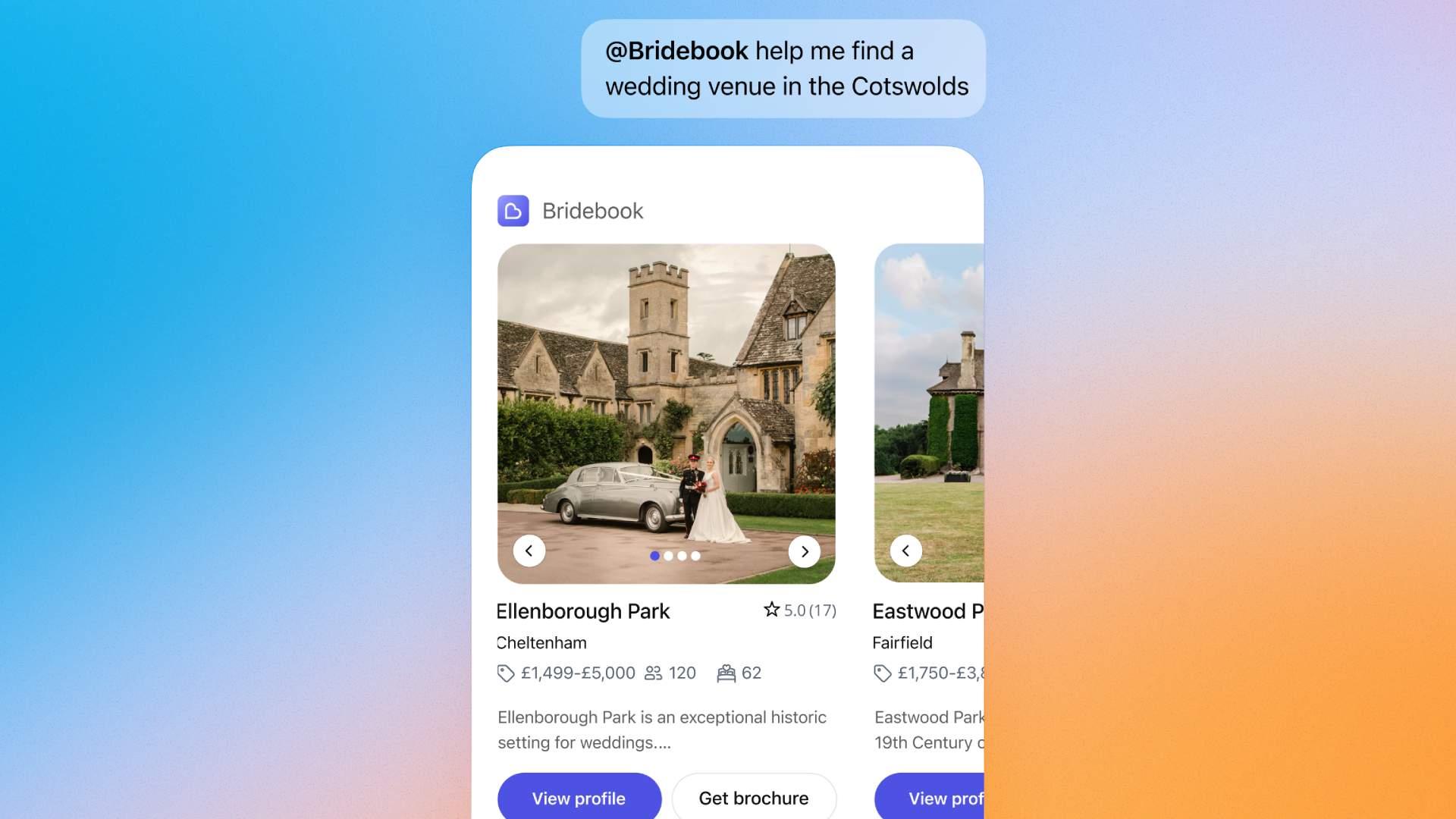

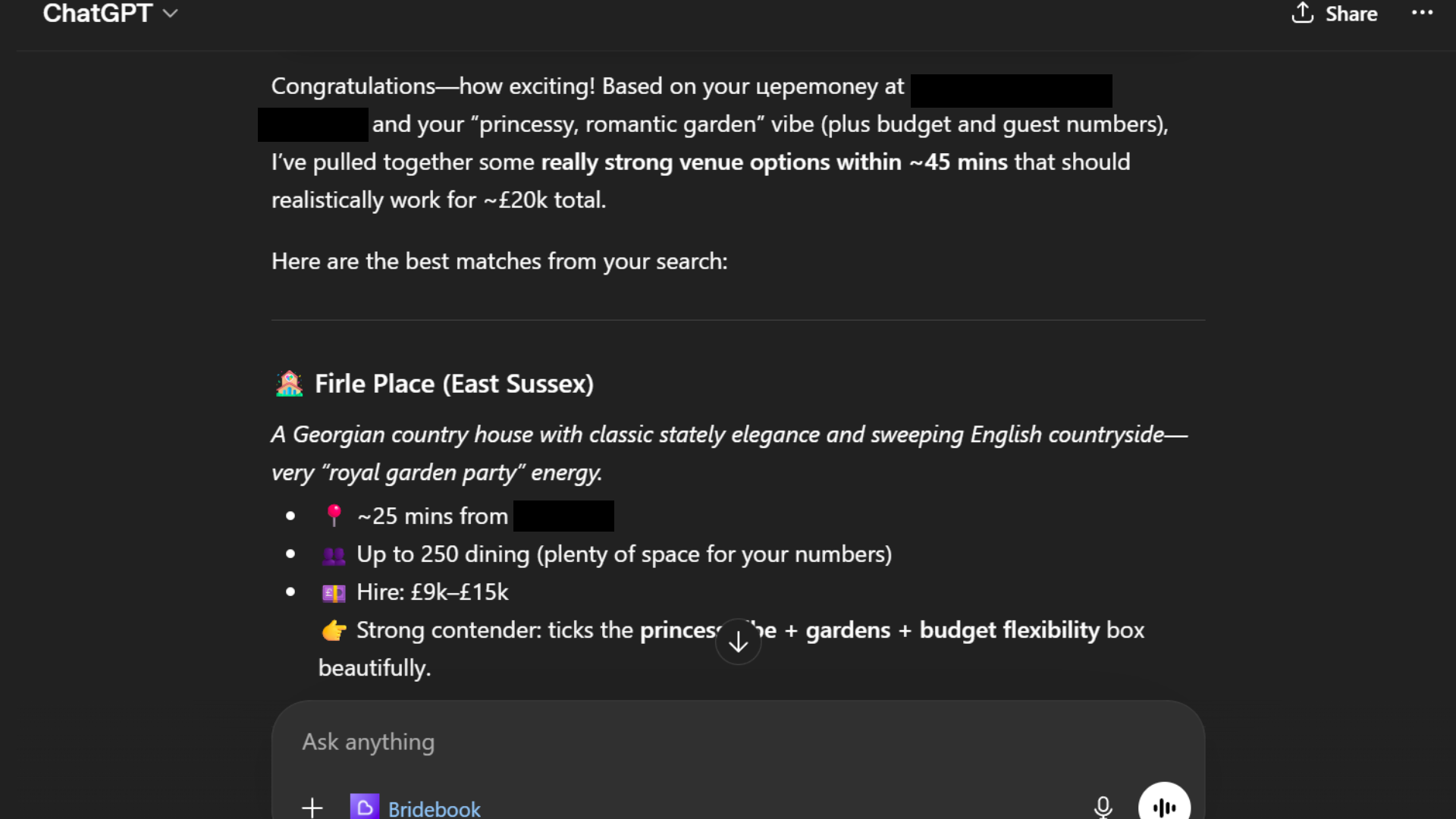

In an effort to assess AI’s potential to mitigate such crises, a test was conducted using Bridebook’s integrated AI venue finder, powered by ChatGPT. The prompt was designed to mirror the couple’s specific requirements: a summer wedding within the next two years, a reception venue within a 45-minute drive of their church ceremony, a "princessy" ambiance with beautiful outdoor spaces, capacity for 90 guests for dinner and 130 for the evening, a preference for low or no corkage fees, and a total budget ceiling of £20,000 for venue, food, and drink.

The AI’s initial response presented a carousel of venue options with brief descriptions, followed by a bullet-point breakdown of top recommendations. While the AI successfully incorporated the budget constraint and generally suggested venues within a reasonable driving distance of the church, several critical shortcomings were identified. First, the AI provided no information on venue availability, a key factor in wedding planning. Second, pricing estimates were often presented as broad ranges that did not account for the premium often associated with peak-season weddings, such as those held in the summer.

A more significant concern arose when comparing the AI’s pricing data with actual quotes obtained during the couple’s venue-hunting experiences. It became apparent that the venue costs displayed by Bridebook, and consequently utilized by the AI, were not consistently updated. Many venues appear to keep their precise pricing details hidden within brochures or require a formal inquiry, making it difficult for couples to obtain accurate cost estimates early in the process. This discrepancy between AI-provided figures and real-world quotations highlights a potential for misinformation and subsequent disappointment for users relying solely on the AI’s output.

The AI-generated suggestions also omitted the venue the couple ultimately selected, which suggests that AI’s current capabilities might not always capture the serendipity or specific nuances that lead couples to their perfect venue.

Despite these limitations, the AI did offer some valuable supplementary advice. It provided a "budget reality check," which served to temper expectations by highlighting the higher average costs prevalent in their specific geographic area. Additionally, it offered suggestions for broadening their search parameters, such as exploring country homes and walled gardens alongside traditional castles, to achieve the desired "princess vibe." These insights, while general, demonstrate AI’s capacity to offer broader strategic guidance beyond specific venue recommendations.

Ultimately, the author concluded that the AI’s utility in this particular scenario did not surpass that of direct website browsing on platforms like Bridebook or conducting a general search on Google. In some respects, the AI proved less effective due to its more restrictive output compared to the wider array of possibilities that a conventional search engine might surface.

The Challenges of Venue Selection: A Deep Dive

The process of selecting a wedding venue is a multi-faceted undertaking fraught with potential pitfalls. Beyond the immediate financial considerations, couples must contend with a complex interplay of factors:

- Availability and Lead Times: As noted, venues often book years in advance. This necessitates a proactive approach and a willingness to be flexible with dates. The long lead times can be a source of stress, particularly for couples who envision a specific season or year for their wedding.

- Vendor Restrictions: Many venues impose limitations on external vendors, requiring couples to use a pre-approved list. While this can simplify some decisions, it may also restrict access to preferred caterers, florists, or other service providers, potentially impacting the couple’s ability to personalize their event.

- Aesthetic and Ambiance: Beyond practical considerations, the venue’s atmosphere plays a crucial role in setting the tone for the wedding. Couples often seek spaces that reflect their personalities and desired theme, whether it be rustic, modern, elegant, or whimsical. The "princessy" ambiance requested in the AI prompt exemplifies this desire for a specific aesthetic.

- Logistical Considerations: Proximity to the ceremony site, accessibility for guests (including those with mobility issues), and on-site amenities such as accommodation or parking are all vital logistical elements that must be factored into the decision-making process.

- Hidden Costs: Beyond the advertised venue hire fee, couples must be aware of potential additional costs, such as service charges, corkage fees, mandatory security, or venue-specific decor requirements. Transparency in pricing is paramount to avoid budget overruns.

The Impact of AI on Venue Scouting

While AI tools like Bridebook’s ChatGPT integration offer a glimpse into the future of wedding planning, their current limitations warrant careful consideration. Reliance on potentially outdated or incomplete data can lead to inaccurate recommendations and wasted time. Even so, the technology has clear room to grow in this sector. As it matures, we can anticipate more sophisticated tools capable of:

- Real-time Availability Tracking: AI could potentially integrate directly with venue booking systems to provide instant availability information, eliminating a significant pain point for couples.

- Personalized Recommendations: Advanced AI could analyze a couple’s preferences, budget, and even their social media activity (with consent) to generate highly personalized venue suggestions that truly resonate.

- Virtual Tours and 3D Renderings: AI-powered platforms could offer immersive virtual tours of venues, allowing couples to explore spaces remotely and gain a more tangible sense of the environment.

- Budget Optimization and Negotiation Assistance: AI could assist couples in understanding market rates, identifying potential cost-saving opportunities, and even provide data-driven insights to aid in venue negotiation.

- Contingency Planning: In scenarios like the couple’s unfortunate venue cancellation, AI could theoretically assist in rapidly identifying suitable alternative venues based on redefined parameters, minimizing stress and disruption.

However, the human element in wedding planning remains irreplaceable. The emotional significance of choosing a venue, the serendipitous discoveries made during in-person visits, and the personal connections forged with venue coordinators are aspects that AI cannot fully replicate. The nuanced understanding of a couple’s vision, the intangible "feel" of a space, and the ability to adapt to unforeseen circumstances often require human intuition and experience.

Practical Advice for Venue Hunting: A Human Touch

Drawing from the experiences of couples who have navigated the venue selection process, several key pieces of advice emerge:

- Establish a Dedicated Wedding Email Address: Centralizing all venue and supplier communications in a separate inbox significantly reduces clutter and streamlines information retrieval. This shared resource ensures both partners are kept abreast of all developments.

- Strategize Venue Visits: Avoid visiting your absolute dream venue first. Use initial visits to less-favored locations as a learning experience. This allows couples to develop a critical eye for what truly matters, identify potential dealbreakers, and gain a clearer understanding of industry standards before evaluating their top choices.

- Maintain an Open Mind: Do not dismiss a venue too hastily based on a single perceived flaw. If a venue ticks several crucial boxes, consider visiting it. Sometimes, a venue that doesn’t meet every single criterion upon initial assessment can reveal unexpected strengths during an in-person tour, especially if circumstances require a broader search.

- Limit Initial Group Visits: While family input is valuable, initial venue visits are best undertaken by the couple alone. This allows for candid discussions and a clear understanding of their individual preferences without the potential for overwhelming opinions from a larger group. Subsequent visits with family can then serve to refine decisions.

- Gather Essential Details Prior to Visiting: Before committing to a site visit, endeavor to obtain key information regarding venue capacity, pricing structures, and any potential restrictions. This proactive approach prevents wasted time and ensures that visits are reserved for venues that genuinely align with the couple’s fundamental requirements.

In conclusion, while AI offers promising advancements in wedding planning, its current application in venue selection is a mixed bag. The ability to process vast amounts of data and identify potential matches is valuable, but it cannot fully replace the due diligence, personal intuition, and on-the-ground experience that are crucial to making such a significant decision. AI’s role in wedding planning will likely expand as the technology evolves, but for now a balanced approach that combines technological assistance with traditional methods and human advice remains the most effective strategy for couples embarking on their journey to the altar.