NorthStar to go public via SPAC to expand space-based SSA network

NorthStar Earth and Space, a Montreal-based pioneer in space situational awareness (SSA), has announced plans to go public through a definitive merger agreement with Viking Acquisition Corp. I, a special purpose acquisition company (SPAC) listed on the New York Stock Exchange. The transaction, announced on April 17, 2024, is a strategic move to capitalize on growing demand for orbital monitoring services as the space environment becomes increasingly congested with commercial constellations and debris. The merger assigns NorthStar a pre-money valuation of $300 million and is expected to provide the capital needed to scale its space-based sensor network from a handful of operational assets to a constellation of nearly 100 sensors.

The deal is structured to ensure a minimum influx of capital regardless of shareholder redemptions, a common hurdle for SPAC transactions in recent years. Viking Acquisition Corp. I held approximately $230 million in its trust account as of late December 2023, but SPAC shareholders can redeem their shares and withdraw that cash before a merger closes. To set a floor under the proceeds, the merger includes a $30 million private investment in public equity (PIPE) anchored by Cartesian Capital Group. Cartesian Capital, a prominent U.S.-based private equity firm, previously led NorthStar’s Series C funding round in 2023, signaling continued institutional confidence in NorthStar’s long-term business model. Additional financial backing has historically come from the governments of Quebec and Luxembourg, bringing NorthStar’s total capital raised to approximately $100 million prior to this merger.
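To make the redemption risk concrete, the following is a rough sketch of how gross proceeds might vary with the share of the trust that is redeemed. It uses only the $230 million trust and $30 million PIPE figures cited above. The function name `gross_proceeds` and the simplifying assumptions (the PIPE is fully funded, redemptions affect only the trust, fees and expenses are ignored) are illustrative and do not come from the deal documents.

```python
# Figures from the article, in millions of USD.
TRUST_USD_M = 230.0  # Viking trust account, as of late December 2023
PIPE_USD_M = 30.0    # PIPE anchored by Cartesian Capital Group


def gross_proceeds(redemption_rate: float) -> float:
    """Return gross proceeds in USD millions for a given redemption rate.

    Assumptions (not from the deal terms): redemptions reduce only the
    trust, the PIPE is fully funded, and fees are ignored.
    """
    if not 0.0 <= redemption_rate <= 1.0:
        raise ValueError("redemption_rate must be between 0 and 1")
    return TRUST_USD_M * (1.0 - redemption_rate) + PIPE_USD_M


# Hypothetical redemption scenarios, chosen only for illustration.
for rate in (0.0, 0.5, 0.9, 1.0):
    print(f"{rate:.0%} redeemed -> ${gross_proceeds(rate):.0f}M gross")
```

Under these assumptions, the PIPE alone sets the floor: even if every trust share were redeemed, the sketch still returns $30 million.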

Strategic Objectives and the SPAC Mechanism

The merger with Viking Acquisition Corp. I, which is sponsored by the New York financial advisory firm KingsRock Advisors, marks a significant milestone for NorthStar. Viking is led by CEO N. Håkan Wohlin, a veteran of the financial industry who previously served as the global head of debt origination, capital markets, and treasury solutions at Deutsche Bank. By merging with a shell company that is already publicly traded, NorthStar bypasses the traditional and often more time-consuming initial public offering (IPO) process, gaining "unprecedented access to capital," according to NorthStar founder and CEO Stewart Bain.

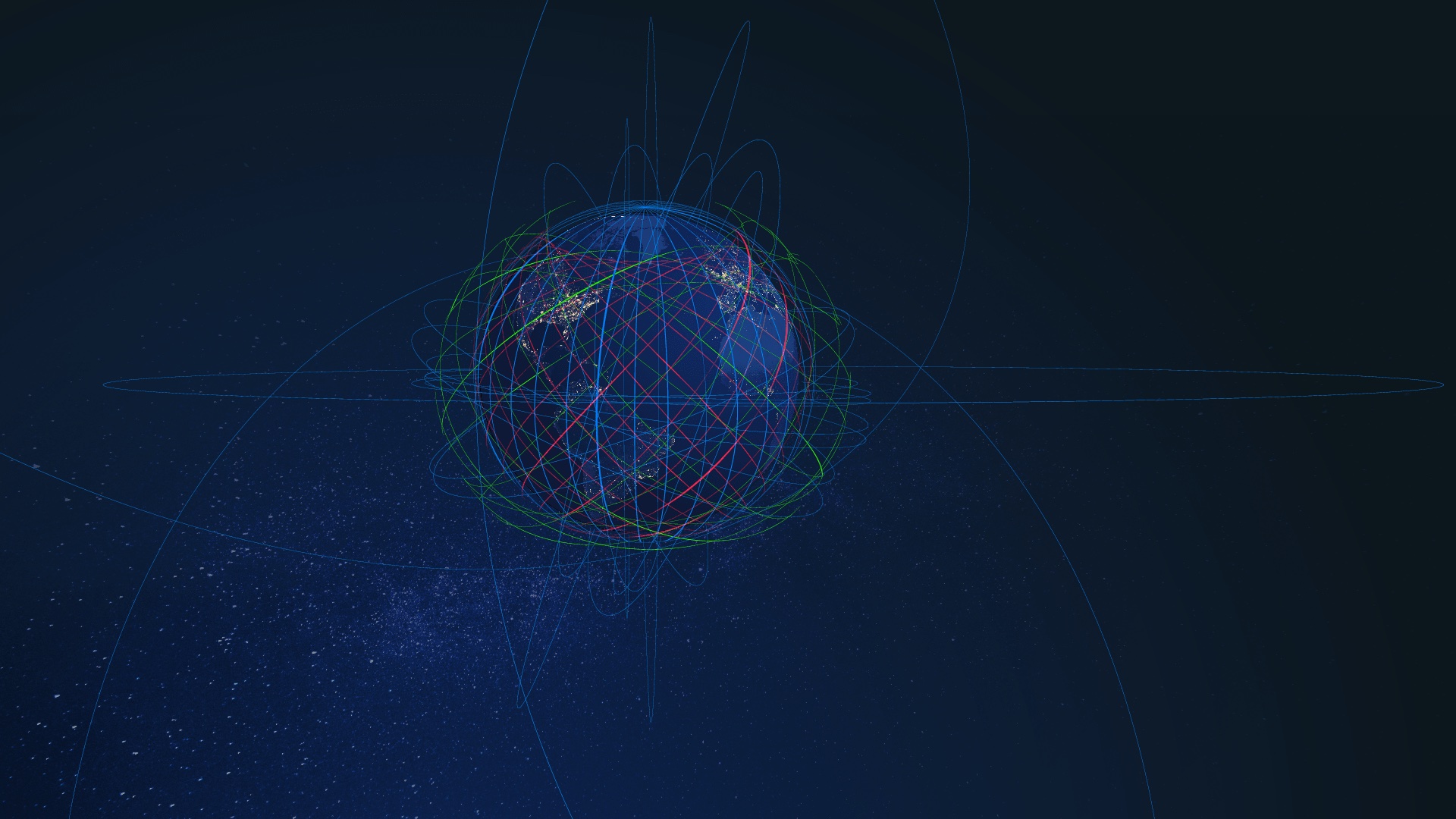

The capital raised through this transaction is earmarked for the expansion of NorthStar’s proprietary constellation. The company aims to address the "urgent need" for advanced SSA services as the frequency of satellite launches reaches historic highs. As thousands of new satellites from megaconstellations like SpaceX’s Starlink and Amazon’s Project Kuiper enter low Earth orbit (LEO), the risk of collisions and the accumulation of space debris have become primary concerns for insurers, commercial operators, and national defense agencies alike.

A Chronology of Operational Challenges and Resilience

NorthStar’s journey to orbit has been characterized by both technical ambition and significant external setbacks. The company’s original roadmap, established in 2020, involved a partnership with Thales Alenia Space to construct a fleet of large, sophisticated satellites. However, in a strategic pivot in 2022, NorthStar shifted toward a "space-as-a-service" model, contracting Spire Global to build and operate smaller 16U cubesats.

The timeline of NorthStar’s deployment illustrates the volatility of the modern launch industry:

- 2020: Selection of Thales Alenia Space for the initial satellite batch.

- 2022: Pivot to Spire Global for a more agile, cubesat-based approach.

- Early 2023: NorthStar’s primary launch plans were derailed by the bankruptcy of Virgin Orbit. The satellites were originally scheduled to fly on the "Start Me Up" mission from the United Kingdom.

- January 2024: Rocket Lab’s Electron vehicle deployed four NorthStar satellites into orbit, giving the company its first successful launch. The mission also served as a booster recovery test for Rocket Lab, further highlighting the interconnected nature of the NewSpace ecosystem.

- January 2026: An evidentiary hearing was held in an ongoing legal dispute between NorthStar and Spire Global regarding the performance and health of the initial satellite batch.

Despite the successful January 2024 launch, the relationship between NorthStar and Spire Global has soured into a legal battle. NorthStar has alleged in court filings that one of the four satellites was lost shortly after deployment and that the remaining three failed to produce images that met the standards required by their contract. The company has sought damages for breach of contract, willful misconduct, and fraudulent misrepresentation. Spire Global has denied all allegations.

Technical Capabilities and the Phased Deployment Roadmap

The core of NorthStar’s value proposition lies in its ability to provide high-cadence, high-resolution monitoring of objects in orbit. Unlike ground-based radar or telescopes, which can be limited by weather conditions and geographic positioning, space-based sensors offer a persistent and unobstructed view of the orbital environment.

NorthStar’s sensors are designed to track objects as small as five centimeters in LEO and 40 centimeters in geostationary orbit (GEO). In its recent investor presentation, the company disclosed that it currently manages approximately 80 million observations per day. This data is derived from a combination of its own operational satellites, third-party space-based data (including a partnership with Japan’s Axelspace), and ground-based sensor networks.
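For a sense of scale, the daily observation figure can be converted to an average per-second rate. This is a back-of-the-envelope sketch that assumes observations arrive evenly across the day, which the investor presentation does not state; the function name is hypothetical.

```python
OBSERVATIONS_PER_DAY = 80_000_000  # figure cited from the investor presentation
SECONDS_PER_DAY = 24 * 60 * 60


def average_observations_per_second(per_day: int) -> float:
    """Average rate, assuming observations are spread evenly over the day."""
    return per_day / SECONDS_PER_DAY


rate = average_observations_per_second(OBSERVATIONS_PER_DAY)
print(f"about {rate:.0f} observations per second on average")
```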

The company’s roadmap for the future is divided into several phases:

- Current Phase: Operating four satellites in orbit to provide baseline SSA services.

- Expansion Phase: Deploying more than five "bespoke sensors" to achieve a 120-minute revisit rate for all resident space objects. This phase is critical for establishing a competitive presence in the commercial SSA market.

- Full Constellation Phase: The long-term vision involves a network of more than 90 sensors. This density would allow NorthStar to achieve a 20-minute revisit rate, providing near-real-time monitoring of potential collision events or suspicious satellite maneuvers.
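The revisit rates in the roadmap above can be translated into how often each object would be observed per day. The sketch below assumes a revisit rate of N minutes means one look at each object every N minutes around the clock, which is a simplification; the function name and the phase labels in the dictionary are illustrative.

```python
MINUTES_PER_DAY = 24 * 60


def looks_per_day(revisit_minutes: float) -> float:
    """Looks per object per day, assuming evenly spaced revisits."""
    if revisit_minutes <= 0:
        raise ValueError("revisit_minutes must be positive")
    return MINUTES_PER_DAY / revisit_minutes


# Revisit targets taken from the roadmap described in the article.
phases = {
    "Expansion (more than five sensors)": 120,
    "Full constellation (more than 90 sensors)": 20,
}

for name, revisit in phases.items():
    print(f"{name}: {revisit} min revisit -> {looks_per_day(revisit):.0f} looks per day")
```

On this reading, moving from a 120-minute to a 20-minute revisit rate would mean six times as many looks at each object per day, which is what makes near-real-time detection of maneuvers plausible.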

NorthStar projects that this phased growth will lift annual revenue above $30 million by 2026 as government and commercial contracts mature.

Market Context: The "Return of the Space SPAC"

The NorthStar-Viking merger arrives at a pivotal moment for space industry financing. Between 2020 and 2021, the sector saw a flurry of SPAC deals involving companies like Virgin Galactic, Astra, and Momentus. However, many of those companies struggled to meet aggressive revenue projections, leading to a period of investor skepticism and a cooling of the SPAC market.

Recent activity suggests a more disciplined resurgence of the "Space SPAC." Unlike the first wave, current deals appear to involve companies with more established operational footprints and clearer paths to revenue. The NorthStar deal is being watched closely alongside other major industry moves, including the potential public listing of Hawkeye 360 and persistent rumors regarding a public offering for SpaceX’s Starlink division.

Industry analysts suggest that the increasing importance of "space sustainability" is driving this renewed interest. As the United Nations and various national regulatory bodies move toward stricter guidelines for orbital debris mitigation, the demand for precise SSA data of the kind NorthStar provides is shifting from a luxury to a regulatory necessity for satellite operators.

Broader Impact and Global Implications

The implications of NorthStar going public extend beyond the financial markets. SSA is increasingly viewed through the lens of national security and environmental stewardship. The ability to distinguish among natural orbital decay, a drifting piece of debris, and a "neighbor" satellite performing a deliberate maneuver is essential for preventing international incidents in space.

Furthermore, NorthStar’s collaboration with the governments of Quebec and Luxembourg highlights the geopolitical dimension of SSA. Luxembourg, in particular, has positioned itself as a global hub for the space economy, and its support of NorthStar underscores the strategic value of having independent, commercial sources of orbital data that are not solely reliant on the U.S. Department of Defense’s Space Track network.

Stewart Bain emphasized this mission in his official statement, noting that safeguarding orbital environments is "critical to advancing the mission" of space sustainability. By providing a transparent, commercially available catalog of space objects, NorthStar aims to foster a more predictable and safe environment for the rapidly growing space economy.

The transaction is expected to close before the end of September 2024, pending approval from Viking shareholders and the fulfillment of standard regulatory requirements. If the deal closes, NorthStar will join a select group of publicly traded pure-play SSA providers, offering investors a direct stake in the infrastructure required to manage the world’s busiest, and most hazardous, new frontier.