AWS and Anthropic Announce Claude Opus 4.7 Availability on Amazon Bedrock, Elevating AI Capabilities for Enterprise Workloads

Amazon Web Services (AWS) and Anthropic have announced the general availability of Claude Opus 4.7, Anthropic's most advanced and intelligent model to date, on Amazon Bedrock. The integration promises enhanced performance across critical enterprise functions, including complex coding tasks, long-running agent operations, and sophisticated professional workflows. The release, detailed in an official AWS News Blog post last updated on April 17, 2026, reflects a maturing landscape for AI deployment in production environments, with emphasis on both raw capability and robust enterprise-grade infrastructure.

Claude Opus 4.7: A Leap Forward in AI Performance

Claude Opus 4.7, powered by Amazon Bedrock’s next-generation inference engine, is designed to handle demanding production workloads with enhanced efficiency and reliability. Anthropic reports that this iteration of Opus delivers notable improvements across a spectrum of use cases. These include more sophisticated agentic coding, deeper knowledge work applications, advanced visual understanding capabilities, and superior performance in managing long-running tasks. The model is characterized by its improved ability to navigate ambiguity, its more thorough problem-solving approaches, and its heightened precision in adhering to complex instructions.

The underlying inference engine within Amazon Bedrock is a key enabler of these advancements. It features a newly developed scheduling and scaling logic that dynamically allocates compute capacity in response to incoming requests. This adaptive allocation is crucial for maintaining high availability, particularly for steady-state workloads that demand consistent performance. Simultaneously, it ensures that the infrastructure can accommodate rapid scaling needs, a common requirement for dynamic AI applications.

A critical aspect of this offering for enterprise adoption is Amazon Bedrock’s commitment to data privacy. The platform provides "zero operator access," meaning that customer prompts and the generated responses are never visible to Anthropic or AWS operators. This stringent privacy control is paramount for organizations handling sensitive data, fostering a secure environment for AI development and deployment.

The Bedrock Advantage: Enterprise-Grade Infrastructure and Security

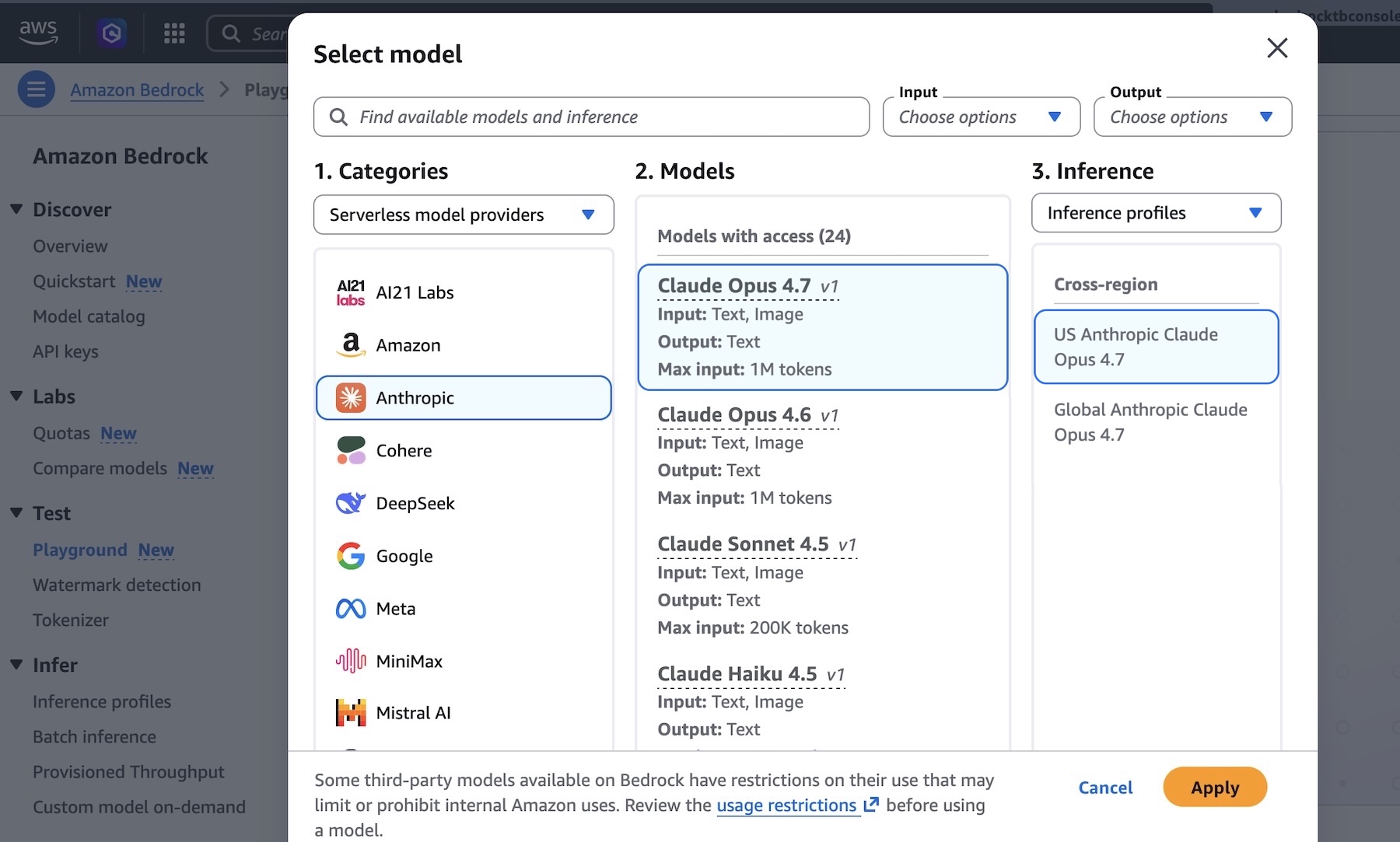

Amazon Bedrock serves as a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies, including Anthropic, AI21 Labs, Cohere, Meta, Stability AI, and Amazon itself. By integrating Claude Opus 4.7, Amazon Bedrock continues to expand its portfolio of leading AI models, providing customers with a unified interface to build and scale generative AI applications.

The Bedrock platform is built on AWS’s global infrastructure, ensuring scalability, reliability, and low latency. For Claude Opus 4.7, this translates to an enterprise-grade foundation that can support production-level deployments. The inference engine’s design prioritizes efficient resource utilization, allowing for cost-effective operations even with computationally intensive models.

The "zero operator access" policy is a cornerstone of Bedrock’s security posture. In an era where data privacy regulations are becoming increasingly stringent, this feature provides a significant competitive advantage for businesses considering AI adoption. It directly addresses concerns about proprietary information being exposed to third parties, making it a more attractive option for regulated industries such as finance, healthcare, and government.

Getting Started with Claude Opus 4.7 on Amazon Bedrock

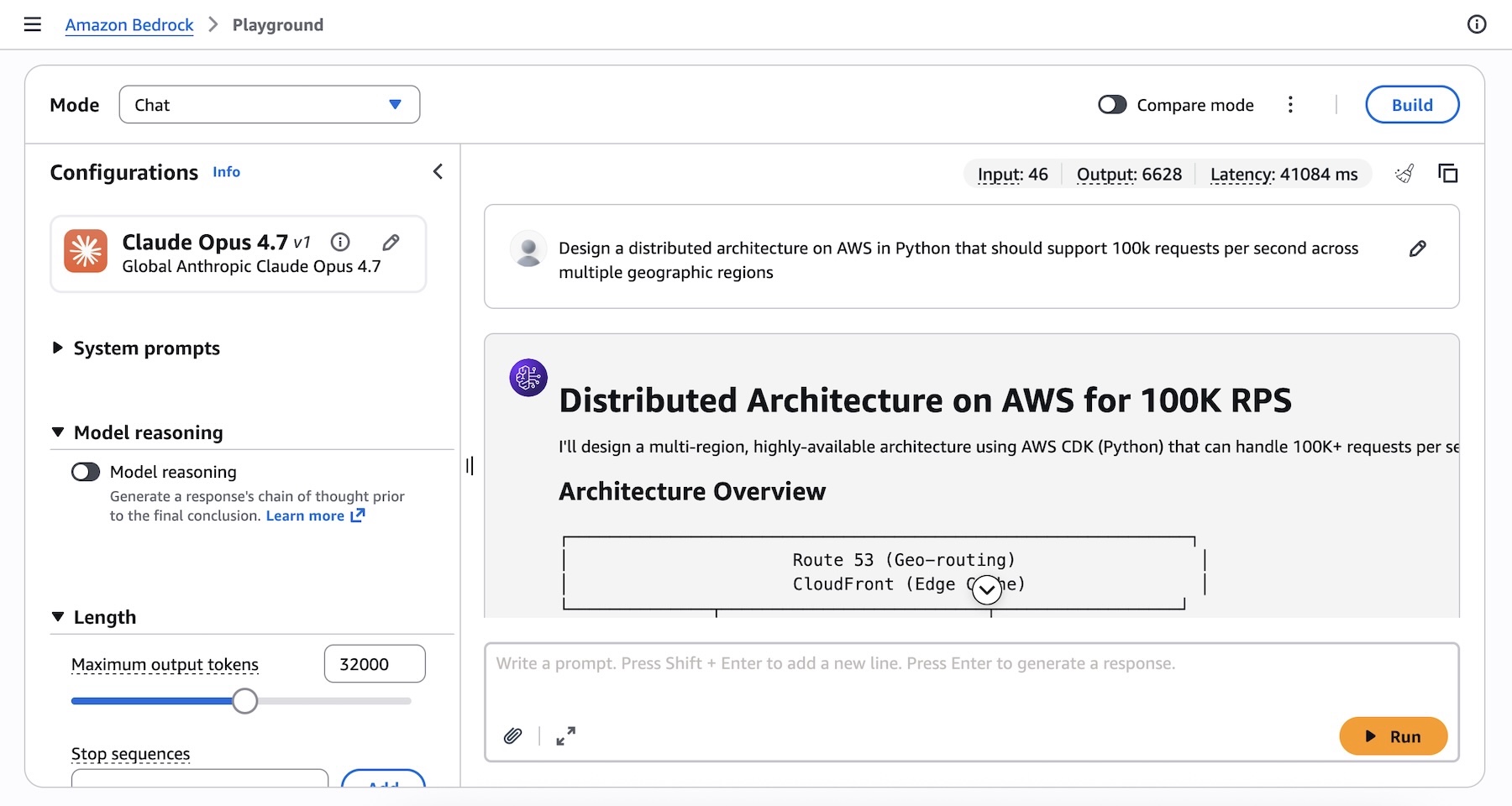

AWS has made it straightforward for developers and businesses to begin experimenting with and deploying Claude Opus 4.7. Users can access the model directly through the Amazon Bedrock console. By navigating to the "Playground" section under the "Test" menu and selecting "Claude Opus 4.7" from the model list, users can immediately begin interacting with the AI, testing complex prompts and evaluating its capabilities.

To illustrate the model’s power, an example prompt is provided: "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions." This type of complex, architectural design task is precisely where advanced models like Claude Opus 4.7 are expected to excel, showcasing their ability to generate detailed, actionable code and infrastructure blueprints.

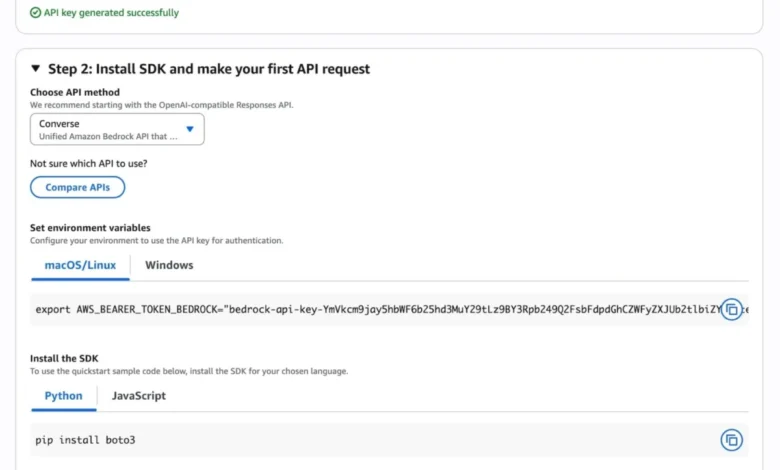

For programmatic access, developers can call Claude Opus 4.7 with the Anthropic Messages API, either on bedrock-runtime through the Anthropic SDK or on the bedrock-mantle endpoints. The Invoke and Converse APIs on bedrock-runtime also remain available through the AWS Command Line Interface (AWS CLI) and the AWS SDKs.
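As a rough sketch of the Converse route, the helpers below build a Converse request for boto3's `bedrock-runtime` client and join the text blocks in the response. They assume the `us.anthropic.claude-opus-4-7` model ID used in the article's samples, and they take the client as a parameter so the request shape can be checked without AWS credentials. The helper names are hypothetical, not part of any SDK.

```python
from typing import Any

# Model ID as given in the article's samples; confirm it in the Bedrock console.
MODEL_ID = "us.anthropic.claude-opus-4-7"


def build_converse_request(prompt: str, max_tokens: int = 4096) -> dict[str, Any]:
    """Assemble keyword arguments for the bedrock-runtime Converse API."""
    return {
        "modelId": MODEL_ID,
        # Converse expects a list of content blocks per message, not a bare string.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def converse_text(client: Any, prompt: str, max_tokens: int = 4096) -> str:
    """Call Converse on an injected client and join the returned text blocks."""
    response = client.converse(**build_converse_request(prompt, max_tokens))
    blocks = response["output"]["message"]["content"]
    # Blocks without a "text" key (e.g. other block types) contribute nothing.
    return "".join(block.get("text", "") for block in blocks)
```

In practice the client would come from `boto3.client("bedrock-runtime", region_name="us-east-1")`; passing it in rather than creating it inside the helper keeps the function easy to test.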

AWS provides comprehensive documentation and code examples to facilitate integration. The "Quickstart" feature in the Bedrock console offers a streamlined path to making the first API call, including guidance on generating short-term API keys for testing purposes. For those opting for OpenAI-compatible responses, sample code snippets are available to demonstrate inference requests.

The provided Python code snippet using the anthropic[bedrock] SDK package demonstrates a clear and concise way to interact with the model:

```python
from anthropic import AnthropicBedrockMantle

# Initialize the Bedrock Mantle client (uses SigV4 auth automatically)
mantle_client = AnthropicBedrockMantle(aws_region="us-east-1")

# Create a message using the Messages API
message = mantle_client.messages.create(
    model="us.anthropic.claude-opus-4-7",
    max_tokens=32000,
    messages=[
        {
            "role": "user",
            "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions",
        }
    ],
)

# Print the text of the first content block in the response
print(message.content[0].text)
```

Similarly, an AWS CLI command example is offered for direct invocation of the bedrock-runtime endpoint:

```shell
aws bedrock-runtime invoke-model \
  --model-id us.anthropic.claude-opus-4-7 \
  --region us-east-1 \
  --body '{"anthropic_version":"bedrock-2023-05-31", "messages": [{"role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions."}], "max_tokens": 32000}' \
  --cli-binary-format raw-in-base64-out \
  invoke-model-output.txt
```

These examples highlight the accessibility and flexibility of integrating Claude Opus 4.7 into existing or new application workflows.
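For comparison, the same Invoke request can be sent from Python. The sketch below serializes the same payload the CLI passes via `--body` and reads the Anthropic-format response, which carries a list of typed content blocks. It assumes a boto3 `bedrock-runtime` client is passed in; `build_invoke_body` and `invoke_text` are illustrative names, not SDK functions.

```python
import json
from typing import Any

# Same model ID as the CLI example; confirm it in the Bedrock console.
MODEL_ID = "us.anthropic.claude-opus-4-7"


def build_invoke_body(prompt: str, max_tokens: int = 32000) -> str:
    """Serialize the JSON payload that the CLI example passes via --body."""
    payload = {
        "anthropic_version": "bedrock-2023-05-31",
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return json.dumps(payload)


def invoke_text(client: Any, prompt: str, max_tokens: int = 32000) -> str:
    """Call InvokeModel and return the concatenated text blocks."""
    response = client.invoke_model(
        modelId=MODEL_ID,
        contentType="application/json",
        accept="application/json",
        body=build_invoke_body(prompt, max_tokens),
    )
    # The response body is a stream; read it and decode the JSON document.
    result = json.loads(response["body"].read())
    return "".join(
        block["text"] for block in result["content"] if block.get("type") == "text"
    )
```

With credentials configured, `invoke_text(boto3.client("bedrock-runtime", region_name="us-east-1"), prompt)` would send the same request as the CLI command, returning the text instead of writing it to `invoke-model-output.txt`.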

Enhanced Reasoning and Adaptive Thinking

A notable feature accompanying Claude Opus 4.7 is its support for "Adaptive Thinking." This capability allows the model to dynamically allocate its "thinking" token budget based on the complexity of each individual request. For intricate problems that require deeper analysis and exploration, the model can dedicate more computational resources, leading to more nuanced and accurate outputs. Conversely, for simpler queries, it can operate more efficiently, optimizing performance and cost. This intelligent resource management is a significant step towards more efficient and effective AI reasoning.
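A minimal sketch of how a Messages API request might opt into adaptive thinking, and how a caller might separate the model's reasoning from its final answer. The `{"type": "adaptive"}` value for the `thinking` parameter is an assumption here, so check Anthropic's API reference for the exact shape before relying on it. The `thinking` and `text` block types follow the Messages API's extended-thinking response format. Both function names are hypothetical.

```python
from typing import Any


def build_adaptive_request(prompt: str, max_tokens: int = 32000) -> dict[str, Any]:
    """Messages API kwargs that let the model size its own thinking budget.

    ASSUMPTION: adaptive thinking is requested with {"type": "adaptive"};
    confirm the exact parameter shape in Anthropic's API reference.
    """
    return {
        "model": "us.anthropic.claude-opus-4-7",
        "max_tokens": max_tokens,
        "thinking": {"type": "adaptive"},
        "messages": [{"role": "user", "content": prompt}],
    }


def split_thinking(content: list[dict[str, Any]]) -> tuple[str, str]:
    """Separate thinking blocks from the visible answer text in a response."""
    thinking = "".join(
        b.get("thinking", "") for b in content if b.get("type") == "thinking"
    )
    answer = "".join(b.get("text", "") for b in content if b.get("type") == "text")
    return thinking, answer
```

With the SDK client from the earlier example, the request could be sent as `mantle_client.messages.create(**build_adaptive_request(prompt))`. SDK responses contain typed block objects rather than dicts, so they would need converting first, for example with `[b.model_dump() for b in message.content]`.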

Detailed documentation, including Anthropic's prompting guide and API references for the Messages API and inference parameters, helps users refine their interactions with the model. The AWS post also notes that although Opus 4.7 is an upgrade from Opus 4.6, some prompt adjustments and "harness tweaks" may be necessary to take full advantage of its enhanced capabilities.
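Because moving from Opus 4.6 may call for prompt and harness adjustments, one low-risk approach is to replay a fixed set of prompts against both models and review the outputs side by side before switching. The sketch below takes the model-calling function as a parameter, so it can wrap any of the APIs described above. The Opus 4.6 model ID is deliberately left as a placeholder, and `compare_models` is a hypothetical helper.

```python
from typing import Callable

# Any function that sends one prompt to one model ID and returns the text.
Invoke = Callable[[str, str], str]

# Placeholder IDs: replace with the identifiers shown in your Bedrock console.
BASELINE_MODEL = "<opus-4.6-model-id>"
CANDIDATE_MODEL = "us.anthropic.claude-opus-4-7"


def compare_models(
    prompts: list[str],
    invoke: Invoke,
    baseline: str = BASELINE_MODEL,
    candidate: str = CANDIDATE_MODEL,
) -> list[dict[str, str]]:
    """Run each prompt on both models and collect the outputs for review."""
    rows = []
    for prompt in prompts:
        rows.append(
            {
                "prompt": prompt,
                "baseline": invoke(baseline, prompt),
                "candidate": invoke(candidate, prompt),
            }
        )
    return rows
```

Keeping the invoke function pluggable means the same prompt set can be rerun after each prompt or harness change, which makes regressions easier to spot than ad hoc testing in the Playground.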

Broader Implications for AI Adoption and Development

The integration of Claude Opus 4.7 on Amazon Bedrock signifies a broader trend in the AI industry: the increasing maturity of generative AI models and their transition from experimental tools to integral components of enterprise infrastructure. For businesses, this means a more accessible pathway to harnessing the power of advanced AI without the need for extensive in-house expertise in model training and deployment.

The focus on enterprise-grade features like zero operator access, robust inference engines, and seamless integration with cloud infrastructure addresses key adoption barriers. Organizations can now deploy sophisticated AI solutions with greater confidence in their security, scalability, and reliability.

The performance improvements reported by Anthropic across coding, knowledge work, and agentic tasks have direct implications for productivity. Developers can accelerate coding cycles, researchers can process and synthesize information more efficiently, and businesses can automate complex workflows that were previously manual or prohibitively expensive.

Regional Availability and Future Outlook

At the time of the announcement, Claude Opus 4.7 is available in four AWS Regions: US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). AWS typically expands service availability across its global network over time, so users should consult the official AWS documentation for the current list of supported Regions.
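Applications deployed in several Regions may want to check the configured Region before sending requests. The sketch below encodes the four Regions listed at announcement time under their standard AWS Region codes. The list is a snapshot, so treat it as a default to update from the official documentation rather than an authoritative source. `pick_region` and its fallback behavior are hypothetical.

```python
# Regions listed for Claude Opus 4.7 at announcement; verify against AWS docs.
OPUS_47_REGIONS = {
    "us-east-1": "US East (N. Virginia)",
    "ap-northeast-1": "Asia Pacific (Tokyo)",
    "eu-west-1": "Europe (Ireland)",
    "eu-north-1": "Europe (Stockholm)",
}


def pick_region(
    preferred: str,
    fallback: str = "us-east-1",
    supported: dict[str, str] = OPUS_47_REGIONS,
) -> str:
    """Return the preferred Region if listed, otherwise a listed fallback."""
    if preferred in supported:
        return preferred
    if fallback in supported:
        return fallback
    raise ValueError(f"Neither {preferred!r} nor {fallback!r} is a listed Region")
```

Failing loudly when neither Region is listed avoids silently routing traffic, and possibly data, to a Region the application was not configured for.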

The availability of cutting-edge models like Claude Opus 4.7 on managed platforms like Amazon Bedrock democratizes access to advanced AI. It allows startups and established enterprises alike to leverage state-of-the-art technology, fostering innovation and competitive advantage.

The collaboration between AWS and Anthropic underscores the growing ecosystem of AI development and deployment. By providing a robust platform and a diverse selection of leading models, AWS is positioning itself as a central hub for generative AI innovation. Customers are encouraged to explore Claude Opus 4.7 within the Amazon Bedrock console and provide feedback through AWS re:Post or their usual AWS Support channels, contributing to the continuous improvement of these powerful AI tools.

The article was updated on April 17, 2026, to reflect corrections in code samples and CLI commands, ensuring accuracy and alignment with the latest model versions and APIs. This ongoing maintenance signifies a commitment to providing reliable and up-to-date resources for developers and users.