Apple Intelligence Integrates Real-Time Translation Features into iOS Messages to Bridge Global Communication Gaps

The integration of Apple Intelligence into iOS has introduced a suite of tools designed to streamline user interaction, and one of the most significant is real-time, bidirectional translation within the native Messages application. The feature marks a shift from standalone translation apps toward an integrated communication experience: users can send and receive messages in multiple languages without leaving the conversation. By leveraging on-device machine learning and the Neural Engine in modern iPhone hardware, Apple has turned its messaging platform into a cross-cultural communication hub that caters to a globalized workforce and an increasingly mobile population.

The introduction of this feature follows a broader trend in the technology industry where artificial intelligence is being moved from the periphery of the operating system to its core. For years, users relied on third-party applications or Apple’s standalone Translate app, launched in 2020 with iOS 14, to interpret foreign text. However, the new Apple Intelligence framework allows these processes to occur natively within the threads of daily conversation. This advancement is particularly relevant for travelers, expatriates, and language learners who require immediate linguistic support during active dialogues.

Hardware Requirements and the Apple Intelligence Framework

To use real-time translation in Messages, users need hardware that supports Apple Intelligence. As of late 2025, this includes the iPhone 15 Pro and iPhone 15 Pro Max, as well as the entire iPhone 16 and iPhone 17 lineups. The threshold is set by the need for at least 8 GB of RAM and the neural processing units found in the A17 Pro, A18, and subsequent chipsets. iPad models with M-series chips (M1 and later) and the iPad mini with the A17 Pro chip also support these features.
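The eligibility rule described above (a supported chip plus at least 8 GB of RAM) can be pictured with a short sketch. This is only an illustration: the `Device` type, the function name, and the chip set are assumptions made for this example, not an Apple API or Apple's official compatibility list.

```python
from dataclasses import dataclass

# Illustrative subset of chips based on the hardware list above;
# not Apple's official list. Newer chips would be added here.
SUPPORTED_CHIPS = {"A17 Pro", "A18", "M1", "M2", "M3", "M4"}
MIN_RAM_GB = 8  # memory floor cited in the article


@dataclass(frozen=True)
class Device:
    """Hypothetical device record used only for this example."""
    name: str
    chip: str
    ram_gb: int


def supports_apple_intelligence(device: Device) -> bool:
    """Apply both conditions: a supported chip and enough RAM."""
    return device.chip in SUPPORTED_CHIPS and device.ram_gb >= MIN_RAM_GB


iphone_15_pro = Device("iPhone 15 Pro", "A17 Pro", 8)
iphone_15 = Device("iPhone 15", "A16 Bionic", 6)

print(supports_apple_intelligence(iphone_15_pro))  # True
print(supports_apple_intelligence(iphone_15))      # False
```

The second device shows why the standard iPhone 15 falls outside the cutoff: it has neither a listed chip nor the required memory.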

Apple Intelligence represents a fundamental redesign of how Siri and system-level tools interact with user data. Unlike AI services that run entirely in the cloud, Apple’s implementation performs most processing on the device itself and hands off only more demanding requests to "Private Cloud Compute," Apple’s server infrastructure built for that purpose. This design is intended to keep sensitive personal conversations encrypted and inaccessible to the service provider. For the translation feature in Messages, it means that linguistic analysis and text generation are performed by the local Neural Engine, providing a low-latency experience that works even with limited internet connectivity, provided the necessary language packs have been downloaded.

Chronology of Apple’s Linguistic Evolution

The journey toward integrated real-time translation has been a multi-year endeavor for Apple. A timeline of these developments provides context for the current state of the technology:

- September 2017: Apple introduces the Neural Engine in the A11 Bionic chip, laying the hardware foundation for on-device machine learning.

- September 2020: The standalone Translate app debuts with iOS 14, offering text and voice translation for 11 initial languages.

- June 2021: Live Text is introduced in iOS 15, allowing users to translate text within photos and the Camera app.

- June 2024: Apple Intelligence is officially announced at the Worldwide Developers Conference (WWDC), promising deep integration of generative AI and linguistic tools across the OS.

- Late 2024 – 2025: Apple Intelligence features roll out in phases for supported devices and regions, with the "Automatically Translate" toggle in Messages arriving as part of Live Translation in iOS 26 (September 2025).

Implementing Real-Time Translation in Messages

The activation of the translation feature is designed to be intuitive, though it is tucked away within contact-specific settings to allow for granular control. Users do not necessarily want every conversation translated; therefore, Apple allows the feature to be enabled on a per-thread basis.

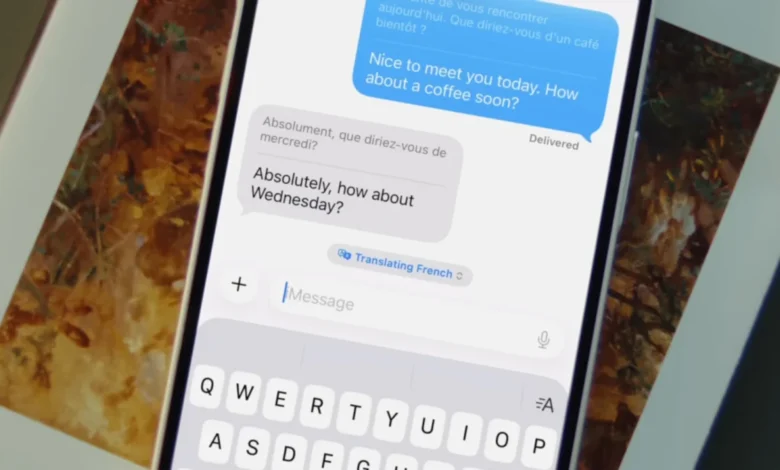

To enable the feature, a user opens a specific conversation thread in the Messages app and taps the contact’s name or icon at the top of the screen to access the conversation settings. Within this menu, a toggle labeled "Automatically Translate" appears. Once activated, the system prompts the user to select the primary language for the translation. At the time of the latest update, approximately 20 languages are supported, including widely spoken languages such as Mandarin Chinese, Spanish, French, German, Japanese, and Portuguese.

Once the feature is live, the interface changes dynamically. Every outgoing message sent by the user in their native tongue is accompanied by a smaller text block featuring the translated version. Conversely, when the recipient replies in a foreign language, the Messages app automatically displays the English (or chosen primary language) translation directly beneath the original text. A small drop-down menu situated above the text input field allows users to quickly swap languages or pause the translation service if they wish to continue the conversation in a single language.
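The per-thread behavior described above can be sketched as a small model: each conversation carries its own toggle and language pair, and a message is shown with its translation beneath it only when the toggle is on. Everything here is hypothetical and for illustration only; `ThreadSettings`, `render_message`, and the phrase-table translator are stand-ins, not how Messages is implemented.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical translator signature: (text, source_lang, target_lang) -> text
Translator = Callable[[str, str, str], str]


@dataclass
class ThreadSettings:
    """Per-conversation settings; the toggle is off by default."""
    auto_translate: bool = False
    my_language: str = "en"
    their_language: str = "es"


def render_message(text: str, outgoing: bool,
                   settings: ThreadSettings,
                   translate: Translator) -> List[str]:
    """Return display lines: the original, then the translation if enabled."""
    if not settings.auto_translate:
        return [text]
    if outgoing:
        src, dst = settings.my_language, settings.their_language
    else:
        src, dst = settings.their_language, settings.my_language
    return [text, translate(text, src, dst)]


# Stand-in for an on-device model: a tiny phrase table.
PHRASES = {
    ("en", "es", "Good morning"): "Buenos días",
    ("es", "en", "Hola"): "Hello",
}


def toy_translate(text: str, src: str, dst: str) -> str:
    # Unknown phrases are returned unchanged.
    return PHRASES.get((src, dst, text), text)


thread = ThreadSettings(auto_translate=True)
print(render_message("Good morning", True, thread, toy_translate))
# ['Good morning', 'Buenos días']
print(render_message("Hola", False, thread, toy_translate))
# ['Hola', 'Hello']
```

Keeping the settings on the thread rather than globally mirrors the granular control the article describes: a conversation with the toggle off simply returns the original text.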

Ecosystem-Wide Translation Integration

The translation capabilities of Apple Intelligence extend far beyond the Messages app, creating a cohesive experience across various forms of media and communication.

FaceTime and Live Captions

Translation has also been integrated into FaceTime. By accessing the "Live Captions" feature during a video call, users can see real-time transcriptions of what the other person is saying. When combined with Apple Intelligence, these captions can be translated on the fly, allowing two people who do not share a common language to hold a video conference with minimal friction.

Phone App and Live Translation

In a move that mirrors features seen in Samsung’s Galaxy AI and Google’s Pixel series, Apple has introduced Live Translation for standard voice calls. During an active call, users can tap a "Live Translation" button. The system then provides an audio translation of the speaker’s words while displaying a transcript on the screen. This is particularly useful for business transactions or emergency services where clear communication is vital.

AirPods Integration

For users with AirPods Pro (2nd generation or later) or AirPods 4 with Active Noise Cancellation, Live Translation extends to in-person conversations. When activated through the connected iPhone, the AirPods can play back translated audio of a person speaking in the user’s immediate physical vicinity. This effectively turns the iPhone and AirPods combination into a "universal translator" for face-to-face interactions.

The Standalone Translate App and Camera Mode

The original Translate app remains a core component of the OS, serving as the central hub for language management. Its "Camera" mode allows users to point their device at physical signs, menus, or documents to see an overlay of translated text. The "Conversation" mode within the app provides a split-screen interface for two people to speak into the device alternately, with the phone speaking the translations aloud.

Privacy and Data Security Standards

One of the primary concerns regarding AI-driven translation is the privacy of the content being processed. Apple has addressed this by ensuring that all language processing for the Messages app is conducted on-device. When a user selects a language for translation, the iPhone downloads a specific "on-device model" for that language.

According to technical briefs from Apple, these models are optimized to run on the Neural Engine without sending data to external servers. This distinguishes Apple’s approach from that of many competitors, who rely on cloud-based Large Language Models (LLMs) to handle complex translations. Keeping the data local means that personal conversations, financial details shared in texts, and private business communications are not exposed to the risks associated with cloud data breaches.
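The download-then-translate-locally flow can be pictured with a toy model. It assumes that a translation can run on-device only when the packs for both languages are present; that rule, along with the class and method names, is an assumption made for this sketch rather than documented Apple behavior.

```python
class OnDeviceTranslator:
    """Toy model of locally stored language packs; not Apple's API."""

    def __init__(self) -> None:
        # Language codes whose models are stored on the device.
        self.downloaded = set()

    def download_pack(self, lang: str) -> None:
        """Record that the model for `lang` is now available locally."""
        self.downloaded.add(lang)

    def can_translate_locally(self, source: str, target: str) -> bool:
        """Assumed rule: both languages need a local pack."""
        return {source, target} <= self.downloaded


phone = OnDeviceTranslator()
phone.download_pack("en")
phone.download_pack("ja")

print(phone.can_translate_locally("en", "ja"))  # True
print(phone.can_translate_locally("en", "fr"))  # False
```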

Comparative Market Analysis and Implications

The introduction of integrated translation in iOS is a direct response to the competitive pressure from the Android ecosystem. Google’s Pixel devices have long featured "Live Translate," which operates across various messaging apps including WhatsApp and Instagram. Similarly, Samsung’s "Galaxy AI," introduced with the S24 series, prioritized real-time call translation as a flagship feature.

Industry analysts suggest that Apple’s entry into this space is timed to coincide with the stabilization of generative AI technologies. By waiting to integrate these tools until they could be performed on-device, Apple maintains its brand identity as a privacy-first company while closing the feature gap with its rivals.

The implications for global commerce and education are significant. For small businesses, the ability to communicate with international suppliers or customers via standard iMessage without the need for a human translator lowers the barrier to entry for global trade. In education, the feature serves as a "passive learning" tool. As noted by digital creators like Taryn Arnold, seeing translations side-by-side with familiar conversational text helps users internalize vocabulary and syntax in a natural context, far removed from the rote memorization of traditional classroom settings.

Future Outlook and Limitations

Despite the significant advancements, the technology is not without its limitations. Machine translation still struggles with heavy regional dialects, slang, and cultural nuances that do not have direct equivalents in other languages. Furthermore, the hardware requirement excludes a vast majority of the current iPhone install base, potentially creating a "digital divide" between users with the latest premium hardware and those with older models.

Moving forward, Apple is expected to expand the number of supported languages and improve the contextual awareness of the translations. As the A-series chips continue to evolve, the complexity of the on-device models will likely increase, allowing for more nuanced and grammatically accurate translations. For now, the integration of real-time translation into the Messages app marks a milestone in making the iPhone not just a tool for communication, but a bridge between the world’s diverse linguistic landscapes.