NodeLLM 1.16 Unveiled: Advancing Production-Grade AI with Precision Control, Multimodal Capabilities, and Robust Self-Correction.

The release of NodeLLM 1.16 marks a pivotal moment in the ongoing evolution of infrastructure for artificial intelligence applications, signaling a decisive shift from foundational integration to sophisticated, production-grade operational capabilities. This latest iteration, developed by the NodeLLM team, moves beyond the basic functionalities of earlier versions, which primarily focused on initial model integration and fundamental safety protocols, to introduce what its creators describe as "surgical control" and "multimodal parity." This strategic enhancement directly addresses the escalating complexity of agentic AI workflows, where the ability to meticulously guide model behavior and manage operational failures with grace is no longer a luxury but a fundamental requirement differentiating experimental prototypes from deployable, robust tools.

The Evolving Landscape of AI Development and the Need for Robust Infrastructure

The past few years have witnessed an unprecedented acceleration in AI adoption, with large language models (LLMs) and multimodal AI becoming central to innovation across industries. From automating customer service to generating creative content and analyzing complex data sets, AI’s footprint is expanding rapidly. However, this explosive growth has also exposed critical challenges for developers: the need for seamless integration of diverse AI models, the demand for predictable and reliable execution, and the increasing complexity of building intelligent agents that can interact with the real world through tools. Many existing frameworks, while capable of basic interactions, often fall short when it comes to the granular control, error handling, and multimodal capabilities essential for high-stakes production environments. NodeLLM, since its inception, has aimed to bridge this gap, offering a unified, developer-friendly interface to a multitude of AI providers. Version 1.16 represents a significant leap forward in realizing this vision, offering developers the tools to craft more resilient, precise, and versatile AI applications.

Surgical Image Manipulation: Beyond Basic Generation

A cornerstone of the 1.16 release is its dramatically enhanced support for high-fidelity image editing and manipulation. This capability transcends the more common text-to-image generation, propelling NodeLLM into the advanced realms of in-painting, masking, and variations. These features are critical for applications demanding intricate control over visual content, such as graphic design automation, personalized marketing collateral generation, and sophisticated content moderation.

In-painting, for instance, allows AI models to intelligently fill in missing or unwanted parts of an image, inferring context from the surrounding pixels. This is invaluable for tasks like object removal, image restoration, or seamless asset integration. Masking, on the other hand, enables developers to define specific regions of an image that an AI should focus on, ensuring that edits are confined and precise. This level of targeted manipulation prevents unintended alterations and ensures the AI’s creative output aligns perfectly with the developer’s intent. Image variations provide the ability to generate multiple stylistic or compositional alternatives from a single source image, offering creative flexibility without requiring repeated detailed prompts.
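To make the masking idea concrete, here is a minimal sketch on a toy one-dimensional "image". It is not NodeLLM code: the `applyMaskedEdit` helper and the boolean mask convention are assumptions made for illustration, and real providers encode the mask as an image file rather than an array of flags.

```typescript
// Toy illustration: an edit only lands where the mask marks a pixel as editable.
// applyMaskedEdit is a hypothetical helper, not part of NodeLLM.
type Pixel = number; // grayscale value, 0-255

function applyMaskedEdit(
  original: Pixel[],
  edited: Pixel[],
  editable: boolean[]
): Pixel[] {
  if (original.length !== edited.length || original.length !== editable.length) {
    throw new Error("original, edited, and mask must have the same length");
  }
  // Keep the original pixel unless the mask marks it as editable.
  return original.map((pixel, i) => (editable[i] ? edited[i] : pixel));
}

const original = [10, 20, 30, 40];
const proposed = [99, 98, 97, 96]; // what the model would like to draw
const mask = [false, true, true, false]; // only the middle region may change

const result = applyMaskedEdit(original, proposed, mask);
// result is [10, 98, 97, 40]: the outer pixels are untouched
```

The same principle applies in two dimensions: pixels outside the masked region pass through unchanged, which is what keeps an edit confined.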

Technically, NodeLLM 1.16 simplifies these complex operations through its paint() method. For developers using OpenAI providers, this method routes requests to the specialized /v1/images/edits endpoint, which has supported mask-based edits since DALL-E 2 and also accepts the newer gpt-image-1 model. Passing the images and mask parameters to paint(), as in the gpt-image-1 example below, gives a streamlined approach to sophisticated image editing:

    // createLLM comes from the NodeLLM package
    const llm = createLLM({ provider: "openai" });

    // Modify an existing logo using a mask
    const response = await llm.paint("Add a futuristic robot head to the logo", {
      model: "gpt-image-1",
      images: ["logo.png"],
      mask: "logo-mask.png",
      size: "1024x1024"
    });

    await response.save("edited-logo.png");

Furthermore, the update introduces robust image variations and comprehensive asset support. Developers can now generate visual variations of a source image without needing a new text prompt, which supports iterative design processes. The underlying BinaryUtils layer handles base64 and URL assets transparently, a significant quality-of-life improvement. This utility automatically converts image data into provider-standard multipart formats, abstracting away the details of binary boundaries and MIME types. Developers can therefore focus on the creative aspects of their applications rather than on data formatting, which shortens development cycles and reduces potential integration errors.
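The article does not show how BinaryUtils works internally. As a rough sketch of the first step such a layer has to take, the snippet below classifies an incoming asset string as a base64 data URL, a remote URL, or a local path. The `Asset` type and `classifyAsset` function are hypothetical names chosen for this illustration.

```typescript
// Hypothetical sketch: decide what kind of asset a string refers to before
// it is converted to a multipart upload. Not NodeLLM's actual BinaryUtils.
type Asset =
  | { kind: "dataUrl"; mimeType: string; base64: string }
  | { kind: "url"; href: string }
  | { kind: "path"; path: string };

function classifyAsset(input: string): Asset {
  // data:<mime>;base64,<payload>
  const dataUrl = /^data:([^;,]+);base64,(.+)$/.exec(input);
  if (dataUrl) {
    return { kind: "dataUrl", mimeType: dataUrl[1], base64: dataUrl[2] };
  }
  if (/^https?:\/\//i.test(input)) {
    return { kind: "url", href: input };
  }
  // Anything else is treated as a path on the local file system.
  return { kind: "path", path: input };
}

const inlineAsset = classifyAsset("data:image/png;base64,iVBORw0KGgo=");
const remoteAsset = classifyAsset("https://example.com/logo.png");
const localAsset = classifyAsset("logo.png");
```

Once the kind is known, the MIME type and raw bytes can be resolved in one place, so calling code never has to branch on how an image was supplied.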

Precision Tool Orchestration: Guiding AI Agents with Finesse

The proliferation of "agentic workflows," where AI models are tasked with complex, multi-step operations involving external tools (such as databases, APIs, or specialized computational modules), has highlighted a critical need for precision in tool orchestration. NodeLLM 1.16 directly addresses this with the introduction of choice and calls directives, offering unprecedented control over how and when an AI agent interacts with its available tools.

The choice directive, NodeLLM's counterpart to the provider-level tool_choice setting, is now normalized across all major providers supported by NodeLLM (including Anthropic, Gemini, Bedrock, and Mistral). It lets developers mandate or explicitly suggest the use of specific tools. This is invaluable when an AI agent must perform a particular action, or when a developer wants to guide the agent toward a specific pathway. For instance, a customer service bot might force the use of a "lookup_order_status" tool when certain keywords are detected, ensuring a direct and efficient resolution path. This explicit control minimizes ambiguity and makes agent behavior more predictable, which is crucial for building reliable, production-ready systems.
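To illustrate what "normalized" means in practice, the sketch below maps one provider-neutral directive onto the request fields that three providers expect. It is an assumption-laden illustration, not NodeLLM's implementation: the `ToolChoice` type and `toProviderPayload` function are hypothetical, and the payload shapes reflect the providers' public APIs as commonly documented.

```typescript
// Hypothetical sketch: one normalized directive, three provider payloads.
// ToolChoice and toProviderPayload are illustrative names, not NodeLLM internals.
type ToolChoice = "auto" | "required" | { tool: string };
type Provider = "openai" | "anthropic" | "gemini";

function toProviderPayload(provider: Provider, choice: ToolChoice): object {
  switch (provider) {
    case "openai":
      // OpenAI Chat Completions: a string mode or a named function reference.
      return {
        tool_choice:
          typeof choice === "string"
            ? choice
            : { type: "function", function: { name: choice.tool } },
      };
    case "anthropic":
      // Anthropic Messages: "any" forces some tool, "tool" forces a named one.
      if (choice === "auto") return { tool_choice: { type: "auto" } };
      if (choice === "required") return { tool_choice: { type: "any" } };
      return { tool_choice: { type: "tool", name: choice.tool } };
    case "gemini":
      // Gemini: a calling mode plus an optional allow-list of function names.
      if (choice === "auto") {
        return { toolConfig: { functionCallingConfig: { mode: "AUTO" } } };
      }
      if (choice === "required") {
        return { toolConfig: { functionCallingConfig: { mode: "ANY" } } };
      }
      return {
        toolConfig: {
          functionCallingConfig: { mode: "ANY", allowedFunctionNames: [choice.tool] },
        },
      };
  }
}

const forced = toProviderPayload("anthropic", { tool: "lookup_order_status" });
```

Application code states its intent once, and the differences between providers stay inside a single mapping function.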

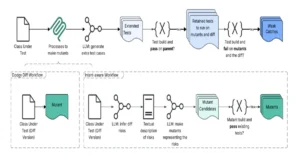

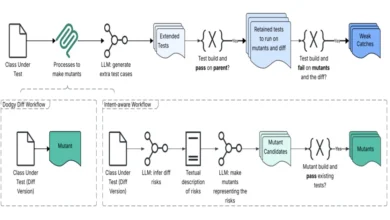

Beyond simply choosing a tool, the execution sequence of tool calls is equally vital. Modern AI models, in an effort to be efficient, often attempt to perform multiple tool calls in parallel. While this can be advantageous in some contexts, it frequently leads to what is colloquially termed "parallel hallucinations" – situations where subsequent tool calls are made before the output of earlier, dependent calls is available. This can result in errors, incorrect assumptions, and ultimately, failed workflows.

NodeLLM 1.16 introduces calls: 'one' to directly combat this challenge. By setting this directive, developers can force the model to proceed sequentially, executing one tool call at a time and awaiting its outcome before contemplating the next action. This ensures a stable and predictable execution flow, particularly for workflows where dependencies between tool calls are inherent. Consider an AI agent designed to process a user request involving multiple steps: first, retrieve user preferences from a database; second, based on those preferences, query an external service for relevant data; and third, update a user profile. If these steps are executed in parallel without proper sequencing, the second or third step might fail due to missing data from the preceding steps. The calls: 'one' directive ensures that each step completes successfully before the next is initiated, providing a robust foundation for complex, interdependent tasks.

    const chat = llm.chat("gpt-4o");

    // Force a specific tool and disable parallel calls for reliability
    const response = await chat.ask("What is the temperature in London?", {
      choice: "get_weather",
      calls: "one"
    });

This precision tool orchestration makes AI agents more reliable and easier to debug, because each tool call can be traced back to the result that preceded it.
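The effect of one-call-per-turn execution can be sketched with a scripted stand-in for the model. The article does not say whether NodeLLM enforces calls: "one" by instructing the provider or by filtering proposals; this sketch emulates the effect by keeping only the first proposed call each turn. All names here are hypothetical.

```typescript
// Hypothetical sketch of one-call-per-turn execution. The scripted "model"
// below stands in for a real LLM; none of these names are NodeLLM APIs.
type ToolCall = { tool: string; args: { [key: string]: string } };
type ToolResult = { tool: string; output: string };

const tools: { [toolName: string]: (args: { [key: string]: string }) => string } = {
  get_preferences: () => "London",
  get_weather: (args) => `Weather for ${args.city}: 12C`,
};

// Scripted model: it can only fill in the city once it has seen the preferences.
function proposeCalls(history: ToolResult[]): ToolCall[] {
  const prefs = history.filter((r) => r.tool === "get_preferences")[0];
  const weather = history.filter((r) => r.tool === "get_weather")[0];
  if (weather) return []; // nothing left to do
  if (prefs) return [{ tool: "get_weather", args: { city: prefs.output } }];
  // With nothing known yet, an eager model proposes both calls and guesses the city.
  return [
    { tool: "get_preferences", args: {} },
    { tool: "get_weather", args: { city: "unknown" } },
  ];
}

function runSequentially(maxTurns: number): ToolResult[] {
  const history: ToolResult[] = [];
  for (let turn = 0; turn < maxTurns; turn++) {
    const proposed = proposeCalls(history);
    if (proposed.length === 0) break;
    // Keep only the first call; later ones are re-planned with fresh results.
    const call = proposed[0];
    history.push({ tool: call.tool, output: tools[call.tool](call.args) });
  }
  return history;
}

const trace = runSequentially(5);
// trace[1].output is "Weather for London: 12C"
```

Had both initial proposals been executed in parallel, the weather lookup would have run with the guessed city "unknown". Executing one call per turn lets the second call use the real result of the first.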

AI Self-Correction for Tool Failures: Towards More Resilient Agents

Building on the foundation of the Self-Correction middleware introduced in NodeLLM v1.15, version 1.16 brings significant hardening to the tool execution pipeline, making AI agents far more resilient and capable of autonomous error recovery. This enhancement is a game-changer for production environments, where unexpected errors can lead to application crashes or degraded user experiences.

One of the most common issues in agentic workflows is an AI model attempting to call a non-existent or incorrectly named tool. In previous iterations, such an attempt might have resulted in an application-level exception, requiring external intervention. NodeLLM 1.16 now intelligently intercepts these errors. If a model proposes a tool call that is not defined, NodeLLM catches the error internally and returns a descriptive "unavailable tool" response directly to the model, critically, along with a list of all valid tools it can use. This immediate feedback loop allows the model to instantly self-correct its proposal, often by selecting a correct tool from the provided list, without triggering an application-level crash or requiring human oversight. This proactive error handling drastically improves the robustness and autonomy of AI agents.
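A minimal sketch of this interception pattern follows. It assumes a simple name-to-handler registry; the `dispatchToolCall` function and the exact wording of the feedback message are hypothetical, not taken from NodeLLM.

```typescript
// Hypothetical sketch: turn an unknown tool name into feedback for the model
// instead of an exception. The message wording is an assumption.
type ToolHandler = (args: { [key: string]: unknown }) => string;
type ToolOutcome = { ok: boolean; content: string };

function dispatchToolCall(
  registry: { [toolName: string]: ToolHandler },
  toolName: string,
  args: { [key: string]: unknown }
): ToolOutcome {
  const known = Object.prototype.hasOwnProperty.call(registry, toolName);
  if (!known) {
    // Report the problem and list the valid options so the model can retry.
    const valid = Object.keys(registry).join(", ");
    return {
      ok: false,
      content: `Tool "${toolName}" is unavailable. Valid tools: ${valid}`,
    };
  }
  return { ok: true, content: registry[toolName](args) };
}

const registry: { [toolName: string]: ToolHandler } = {
  get_weather: () => "12C",
  lookup_order_status: () => "shipped",
};

// The model misspells the tool name; the caller gets a message, not a crash.
const outcome = dispatchToolCall(registry, "get_wether", {});
// outcome.content is:
// Tool "get_wether" is unavailable. Valid tools: get_weather, lookup_order_status
```

Because the failure is returned as ordinary tool output, it can be appended to the conversation like any other result, and the model's next turn can pick a valid name from the list.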

Similarly, the challenge of invalid arguments passed to tools has been addressed. When an AI model generates arguments for a tool call that fail Zod validation – a popular schema declaration and validation library – NodeLLM now captures these validation errors. Instead of crashing, the specific "Invalid Arguments" results are fed back to the model. This detailed feedback empowers the agent to understand why its arguments were invalid and allows it to refine its input parameters, essentially debugging its own mistakes in real-time.
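The same feedback pattern can be sketched for argument validation. To keep the example dependency-free, a hand-written check stands in for a Zod schema. The function names and message format are assumptions, apart from the "Invalid Arguments" label, which the release describes.

```typescript
// Hypothetical sketch: validation failures become a tool result the model can
// read. A hand-written check stands in for a Zod schema (no dependencies).
type Validation =
  | { valid: true; city: string }
  | { valid: false; issues: string[] };

function validateWeatherArgs(args: { [key: string]: unknown }): Validation {
  const issues: string[] = [];
  const city = args.city;
  if (typeof city !== "string" || city.length === 0) {
    issues.push("city: expected a non-empty string");
  }
  if (args.unit !== undefined && args.unit !== "celsius" && args.unit !== "fahrenheit") {
    issues.push('unit: expected "celsius" or "fahrenheit"');
  }
  if (issues.length > 0) return { valid: false, issues: issues };
  return { valid: true, city: city as string };
}

function callWeatherTool(args: { [key: string]: unknown }): string {
  const checked = validateWeatherArgs(args);
  if (checked.valid === false) {
    // Returned to the model as the tool result rather than thrown.
    return "Invalid Arguments: " + checked.issues.join("; ");
  }
  return `Weather for ${checked.city}: 12C`;
}

// First attempt uses a wrong key and an unsupported unit; the retry is valid.
const firstAttempt = callWeatherTool({ town: "London", unit: "kelvin" });
const secondAttempt = callWeatherTool({ city: "London", unit: "celsius" });
```

The first call yields a message naming both problems, which gives the model enough detail to produce the corrected second call.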

This comprehensive self-correction mechanism is a crucial step towards creating truly intelligent and reliable AI agents. It reduces the operational overhead of managing AI systems, minimizes downtime due to unforeseen errors, and allows agents to learn and adapt from their mistakes within the context of their execution. Such capabilities are paramount for mission-critical applications where uninterrupted service and reliable performance are non-negotiable.

Advanced Transcription & Diarization: Elevating Audio Intelligence

The capabilities of NodeLLM’s audio support have also received a substantial upgrade in version 1.16, particularly enhancing its Transcription interface. This release introduces support for word-level timestamps and significantly improved diarization, features that are indispensable for a wide array of sophisticated voice AI applications.

Word-level timestamps provide precise timing information for each spoken word within a transcribed audio segment. This granularity is critical for applications like:

- Media Production: Automatically generating subtitles or captions with perfect synchronization for video editing and accessibility.

- Language Learning: Providing detailed feedback to learners on pronunciation and timing.

- Forensic Analysis: Pinpointing exact moments of speech in legal or investigative contexts.

- Content Search: Enabling highly accurate search within audio and video content by matching specific words to their exact temporal locations.
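As a sketch of the subtitle use case, the snippet below turns word-level timestamps into SRT caption cues. The `Word` shape (text plus start and end times in seconds) is an assumption about what such timestamps look like, not NodeLLM's documented type, and the helper names are hypothetical.

```typescript
// Hypothetical sketch: build SRT captions from word-level timestamps.
// The Word shape is an assumption, not NodeLLM's documented type.
type Word = { text: string; start: number; end: number };

function pad(value: number, width: number): string {
  let s = String(value);
  while (s.length < width) s = "0" + s;
  return s;
}

// Format seconds as an SRT timestamp: HH:MM:SS,mmm
function toSrtTime(seconds: number): string {
  const totalMs = Math.round(seconds * 1000);
  const ms = totalMs % 1000;
  const totalSeconds = Math.floor(totalMs / 1000);
  const s = totalSeconds % 60;
  const m = Math.floor(totalSeconds / 60) % 60;
  const h = Math.floor(totalSeconds / 3600);
  return `${pad(h, 2)}:${pad(m, 2)}:${pad(s, 2)},${pad(ms, 3)}`;
}

// Group words into numbered cues of at most maxWords words each.
function wordsToSrt(words: Word[], maxWords: number): string {
  if (maxWords < 1) throw new Error("maxWords must be at least 1");
  const cues: string[] = [];
  for (let i = 0; i < words.length; i += maxWords) {
    const chunk = words.slice(i, i + maxWords);
    const start = toSrtTime(chunk[0].start);
    const end = toSrtTime(chunk[chunk.length - 1].end);
    const text = chunk.map((w) => w.text).join(" ");
    cues.push(`${cues.length + 1}\n${start} --> ${end}\n${text}`);
  }
  return cues.join("\n\n");
}

const srt = wordsToSrt(
  [
    { text: "Hello", start: 0.0, end: 0.4 },
    { text: "and", start: 0.5, end: 0.6 },
    { text: "welcome", start: 0.7, end: 1.25 },
  ],
  2
);
```

Each cue starts at its first word and ends at its last, so captions follow the speech rather than fixed time windows.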

Enhanced diarization, or speaker tracking, allows NodeLLM to accurately identify and differentiate between multiple speakers in an audio recording. This is a complex challenge in audio processing, but its accurate implementation unlocks significant value for:

- Meeting Summarization: Automatically generating structured notes that attribute statements to specific participants, providing clearer context and action items.

- Customer Service Analytics: Analyzing multi-party conversations to understand agent-customer interactions, identify pain points, and assess service quality more effectively.

- Interview Transcription: Producing transcripts that clearly delineate who said what, which is essential for qualitative research and journalism.

- Accessibility: Improving the clarity and utility of transcripts for individuals with hearing impairments by distinguishing speakers.
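For the meeting and interview cases, diarized output typically needs one more step: merging consecutive segments from the same speaker into readable turns. The sketch below assumes a `Segment` shape with a speaker label, which is an illustrative guess rather than NodeLLM's actual return type.

```typescript
// Hypothetical sketch: merge consecutive same-speaker segments into turns.
// The Segment shape is an assumption, not NodeLLM's documented type.
type Segment = { speaker: string; text: string; start: number; end: number };
type Turn = { speaker: string; text: string; start: number; end: number };

function mergeIntoTurns(segments: Segment[]): Turn[] {
  const turns: Turn[] = [];
  for (const seg of segments) {
    const last = turns[turns.length - 1];
    if (last && last.speaker === seg.speaker) {
      // Same speaker keeps talking: extend the current turn.
      last.text += " " + seg.text;
      last.end = seg.end;
    } else {
      // Speaker changed (or first segment): start a new turn.
      turns.push({ speaker: seg.speaker, text: seg.text, start: seg.start, end: seg.end });
    }
  }
  return turns;
}

const meetingTurns = mergeIntoTurns([
  { speaker: "A", text: "Hi", start: 0.0, end: 0.3 },
  { speaker: "A", text: "everyone.", start: 0.4, end: 0.9 },
  { speaker: "B", text: "Thanks.", start: 1.2, end: 1.6 },
  { speaker: "A", text: "Let's start.", start: 2.0, end: 2.8 },
]);

// One line per turn, attributed to its speaker.
const transcript = meetingTurns.map((t) => `${t.speaker}: ${t.text}`).join("\n");
```

The resulting transcript attributes each statement to a speaker while preserving start and end times for every turn.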

These advancements in audio processing solidify NodeLLM’s position as a comprehensive platform for multimodal AI. As voice interfaces and audio analytics continue to gain prominence, the ability to extract such detailed and structured information from audio streams becomes a competitive differentiator, enabling developers to build more intelligent, context-aware, and user-friendly applications.

Broader Implications and Market Impact

NodeLLM 1.16 is more than just a software update; it represents a significant push towards democratizing the development of advanced, production-ready AI applications. By abstracting away much of the underlying complexity associated with multimodal models, tool orchestration, and error handling, NodeLLM empowers a broader spectrum of developers to build sophisticated AI systems without needing deep expertise in every niche of AI engineering. This focus on developer experience and robust infrastructure is particularly timely, given the rapid expansion of AI into mission-critical business processes.

The emphasis on "surgical control" and "multimodal parity" aligns perfectly with current industry trends. As AI models become more capable, the demand for precise, predictable, and reliable behavior intensifies. Industries like finance, healthcare, and advanced manufacturing, where errors can have severe consequences, require AI systems that are not only intelligent but also auditable and controllable. NodeLLM 1.16 provides the architectural underpinnings for such systems.

Industry analysts suggest that frameworks like NodeLLM, which prioritize resilience and fine-grained control, are crucial for the next phase of AI adoption. "The ability for an AI agent to self-correct and for developers to dictate tool usage precisely will unlock a new generation of reliable AI applications," commented Dr. Anya Sharma, a lead AI infrastructure analyst at TechInsights Group. "This move by NodeLLM addresses critical pain points that have hindered the deployment of complex AI agents in enterprise settings."

The release also underscores NodeLLM’s commitment to fostering a vibrant developer ecosystem. By providing a unified API for various AI providers and handling intricate technical details like binary asset conversion and standardized tool directives, NodeLLM reduces the cognitive load on developers, allowing them to focus on innovation and application logic. This acceleration of development cycles is a significant economic advantage in a fast-moving market.

Looking ahead, the capabilities introduced in NodeLLM 1.16 lay the groundwork for even more advanced AI applications. We can anticipate the emergence of highly intelligent virtual assistants capable of sophisticated image editing, autonomous agents that can reliably execute multi-step business processes with minimal human supervision, and analytical platforms that derive deep insights from complex audio data. The continuous hardening of error handling and the emphasis on predictable agent behavior will also be instrumental in building trust in AI systems, a critical factor for widespread adoption.

Getting Started and Developer Resources

NodeLLM 1.16.0 is undeniably a "Big Release" that significantly elevates the standard for AI infrastructure, bringing it closer to the robustness and control expected of modern production applications. Developers eager to leverage these new capabilities can integrate the update via npm:

    npm install @node-llm/[email protected]

For a comprehensive understanding of all architectural refinements, bug fixes, and detailed changes, developers are encouraged to consult the official NodeLLM Commit History and the comprehensive CHANGELOG. These resources provide granular insights into the technical evolution and stability enhancements delivered with this significant release. The NodeLLM team continues its dedication to providing cutting-edge tools that empower developers to build the next generation of intelligent applications.