AI-Powered Synthetic Users Revolutionize Open-Source Documentation Testing

Keeping documentation accurate and working is a persistent challenge for early-stage open-source projects. The Drasi team in the Office of the Chief Technology Officer at Microsoft Azure has taken a new approach to it. The team is four engineers, and their tutorials kept falling behind rapid code changes. They used GitHub Copilot CLI and Dev Containers to turn documentation testing into a recurring, automated monitoring system. The approach grew out of an incident in late 2025. It protects the onboarding experience for Drasi, a CNCF sandbox project for detecting and reacting to data changes in real time, and it offers a pattern other open-source projects can reuse.

The change was prompted by an incident in late 2025, when GitHub updated its Dev Container infrastructure and raised the minimum required Docker version. This seemingly minor update broke the Docker daemon connection, and every Drasi tutorial stopped working. Because the team relied on manual testing of its documentation, it did not initially realize how much was broken. Any developer who tried Drasi during that period would have hit an immediate roadblock, and many would likely have given up on the project. The incident exposed a fundamental gap between the pace of software development and the slower, often manual, process of verifying that documentation still works.

The team's key realization was that "with advanced AI coding assistants, documentation testing can be converted to a monitoring problem." This led to AI agents that act as "synthetic users," simulating the journey of a new developer encountering the project for the first time.

The Pervasive Problem: Why Documentation Breaks

Documentation, particularly for complex software like Drasi, tends to break for two main, often insidious, reasons: the "curse of knowledge" and "silent drift."

The Curse of Knowledge: Implicit Context Overload

Experienced developers, deeply familiar with a project, often inject implicit context into its documentation without noticing. To a seasoned team member, "wait for the query to bootstrap" carries unstated knowledge: run `drasi list query` and watch for the `Running` status, or better, use the `drasi wait` command. To a newcomer, or to an AI agent, the instruction is ambiguous. The documentation says what should happen but not how to make it happen, leaving new users without a clear path forward. This "curse of knowledge" raises the barrier to entry and turns away potential users and contributors.
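Making the implicit step explicit means spelling out four things: what to check, which value means "ready," how often to poll, and when to give up. The Python sketch below does exactly that. It is a hypothetical helper, not part of the Drasi CLI. `get_status` stands in for whatever reads the query's status, for example by parsing the output of `drasi list query`, whose exact format is not assumed here. The default timeout and polling interval are arbitrary.

```python
import time
from typing import Callable


def wait_for_status(
    get_status: Callable[[], str],
    target: str = "Running",
    timeout_s: float = 60.0,
    interval_s: float = 2.0,
) -> bool:
    """Poll get_status() until it returns `target` or the timeout expires.

    Hypothetical helper: it spells out what "wait for the query to bootstrap"
    leaves implicit (what to check, what counts as ready, how often, how long).
    """
    deadline = time.monotonic() + timeout_s
    while True:
        if get_status() == target:
            return True  # ready
        if time.monotonic() >= deadline:
            return False  # gave up; the caller decides how to report it
        time.sleep(interval_s)
```

In a tutorial, the `drasi wait` command is the simpler route. The point of the sketch is the checklist of details that the one-line instruction omits.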

Silent Drift: The Unseen Erosion of Accuracy

Code usually fails loudly; documentation tends to drift silently. If a configuration file is renamed in the codebase, the build breaks immediately. If the documentation still references the old filename, nothing flags it. These discrepancies accumulate quietly, widening the gap between the documented instructions and the software's actual behavior.

Drift is especially common in tutorials that set up sandbox environments with tools such as Docker, k3d, and sample databases. Any upstream change can silently break them: a deprecated flag, a version bump, or a new default. The result is confused users and eroded confidence in the project's stability.
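One narrow slice of silent drift, file references that no longer exist, can be caught mechanically. The following Python check is hypothetical, not something the Drasi team describes using. It assumes file paths in the docs are written in backticks and carry an extension. It cannot catch upstream behavior changes such as a deprecated flag, which is why a simulated user is still needed.

```python
import re
from pathlib import PurePosixPath

# Hypothetical convention: file paths appear in backticks with an extension,
# e.g. `config/sources.yaml`. Commands with spaces (e.g. `drasi wait`) never match.
PATH_PATTERN = re.compile(r"`([\w./-]+\.[A-Za-z0-9]+)`")


def find_stale_references(doc_text: str, repo_files: set[str]) -> list[str]:
    """Return backticked file paths in doc_text that are missing from repo_files."""
    stale: list[str] = []
    for match in PATH_PATTERN.finditer(doc_text):
        # Normalize so "./config/x.yaml" and "config/x.yaml" compare equal.
        path = str(PurePosixPath(match.group(1)))
        if path not in repo_files and path not in stale:
            stale.append(path)
    return stale
```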

The Innovative Solution: Agents as Synthetic Users

To combat these pervasive issues, the Drasi team reframed documentation testing as a simulation problem. They developed an AI agent designed to embody the persona of a "synthetic new user." This agent possesses three crucial characteristics:

- Literal Interpretation: The agent adheres strictly to the documented instructions, mirroring the behavior of a novice user who lacks prior context.

- Comprehensive Environment Interaction: It can execute terminal commands, write files, and interact with web interfaces, simulating a full developer workflow.

- Observational Capability: The agent can capture visual and textual output, providing a detailed record of its experience.
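The first and third characteristics can be approximated deterministically: run each documented command exactly as written, record what it prints, and stop at the first failure rather than improvising a fix. The Python sketch below is only that approximation. The Drasi agent is LLM-driven and also writes files and drives web UIs, which this sketch does not attempt. `run_steps_literally` and `StepResult` are hypothetical names.

```python
import subprocess
from dataclasses import dataclass


@dataclass
class StepResult:
    command: list[str]
    returncode: int
    output: str  # combined stdout and stderr, kept as the observational record


def run_steps_literally(steps: list[list[str]], timeout_s: float = 300.0) -> list[StepResult]:
    """Run each documented command exactly as written, in order.

    Stops at the first non-zero exit code instead of trying workarounds,
    mirroring a newcomer who has no context beyond the docs.
    """
    transcript: list[StepResult] = []
    for command in steps:
        proc = subprocess.run(command, capture_output=True, text=True, timeout=timeout_s)
        transcript.append(StepResult(command, proc.returncode, proc.stdout + proc.stderr))
        if proc.returncode != 0:
            break
    return transcript
```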

The Technological Stack: GitHub Copilot CLI and Dev Containers

The implementation of this solution hinges on a robust technological stack, integrating GitHub Actions, Dev Containers, Playwright, and the GitHub Copilot CLI. The core requirement was to replicate the exact user environment. Since human users often engage with Drasi via GitHub Codespaces using specific Dev Containers, the testing infrastructure had to mirror this precisely.

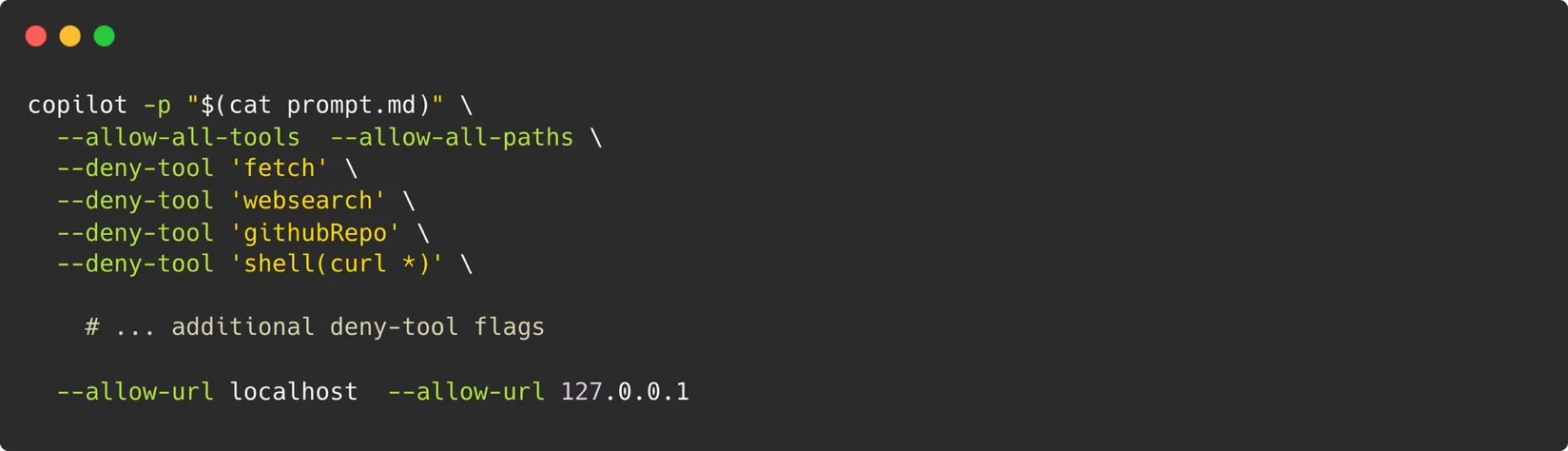

The architecture runs the Copilot CLI inside a Dev Container, guided by a purpose-built system prompt that the team has made available for review. The agent has the permissions and tools to run terminal commands, edit files, and run browser scripts with Playwright. Playwright is vital: it lets the agent open web pages, interact with them as a human would, and take screenshots to compare against the output shown in the documentation.

The Architectural Framework

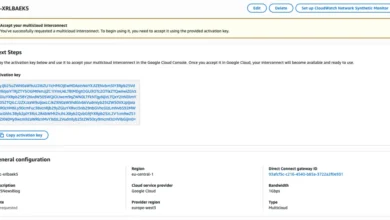

GitHub Actions orchestrates the system. Inside an isolated Dev Container, the workflow invokes the Copilot CLI with a detailed system prompt that instructs the agent to perform the tutorial steps and critically evaluate each one. The agent can run terminal commands, write files, and interact with web UIs, but its access to external resources is strictly limited. The `fetch` and `websearch` tools are denied, while access to `localhost` and `127.0.0.1` is allowed. The result is a sandbox that closely mimics a user's local development setup without exposing the wider network.
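The network rule amounts to a loopback-only allowlist. The hypothetical Python predicate below states it precisely. In the Drasi setup, the restriction is enforced by the Copilot CLI configuration and the container boundary, whose exact settings are not reproduced here.

```python
from urllib.parse import urlsplit

# Hosts the sandboxed agent may reach: loopback only, per the rule above.
ALLOWED_HOSTS = frozenset({"localhost", "127.0.0.1"})


def is_url_allowed(url: str) -> bool:
    """Return True only for http(s) URLs whose host is on the loopback allowlist."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https"):
        return False
    # Compare the parsed hostname (lowercased, port stripped), never a string
    # prefix, so "localhost.example.com" is rejected.
    return parts.hostname in ALLOWED_HOSTS
```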

A Robust Security Model: The Container as the Boundary

Security is paramount in this automated testing framework. The team adopted a "container as the boundary" principle. Rather than attempting to micromanage individual command permissions, which is a complex and often futile endeavor when agents need to run arbitrary scripts, the entire Dev Container is treated as an isolated sandbox. Strict controls are enforced on what crosses the container’s boundaries. This includes:

- Restricted Network Access: No outbound network access beyond localhost is permitted.

- Limited Permissions: The Personal Access Token (PAT) is granted only the "Copilot Requests" permission, minimizing potential exposure.

- Ephemeral Environments: Containers are destroyed after each test run, so every run starts from a clean state and nothing persists between runs.

- Maintainer Approval Gate: Runs triggered by pull requests start only after a maintainer approves them, adding a layer of human oversight.

Navigating Non-Determinism: Strategies for Reliability

A significant hurdle in AI-driven testing is the inherent non-determinism of Large Language Models (LLMs). These probabilistic models can sometimes lead to inconsistent agent behavior, such as retrying commands or abandoning tasks. The Drasi team addressed this challenge through a multi-pronged strategy:

- Multi-Stage Retry with Model Escalation: The system employs a tiered retry mechanism. If an initial attempt with a primary model (e.g., Gemini-Pro) fails, it escalates to a more powerful model (e.g., Claude Opus) for subsequent retries, increasing the likelihood of successful execution.

- Semantic Comparison for Screenshots: Instead of relying on exact pixel-matching, which can be brittle, the system utilizes semantic comparison for screenshots. This allows for more robust verification, accommodating minor visual variations.

- Core-Data Field Verification: The agent checks core data fields rather than volatile values (such as timestamps or generated IDs) that change from run to run, making test outcomes more reliable.

- Prompt Engineering for Control: Tight constraints are embedded within the agent’s prompts to prevent it from embarking on extended debugging sessions. Directives are included to control the structure of the final report, and "skip directives" allow the agent to bypass optional tutorial sections, such as external service setups, streamlining the testing process.
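The escalation strategy can be sketched as a loop over a ladder of models. In the Python below, `run_attempt` is a hypothetical callback that runs one full tutorial evaluation with the given model and reports success. The model identifiers are placeholders based on the examples above, not verified Copilot CLI model names. The number of attempts per model is also an assumption.

```python
from typing import Callable

# Placeholder identifiers based on the examples in the text; real model names
# depend on what the Copilot CLI accepts.
ESCALATION_LADDER = ("gemini-pro", "claude-opus")


def run_with_escalation(
    run_attempt: Callable[[str], bool],
    models: tuple[str, ...] = ESCALATION_LADDER,
    attempts_per_model: int = 1,
) -> tuple[bool, list[str]]:
    """Run the evaluation with each model in turn, escalating after failures.

    Returns (passed, history), where history records the model used per attempt.
    """
    history: list[str] = []
    for model in models:
        for _ in range(attempts_per_model):
            history.append(model)
            if run_attempt(model):
                return True, history
    return False, history
```

Keeping the history makes it visible in the run summary when a pass needed the escalated model, which may itself be a hint that the tutorial is ambiguous.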

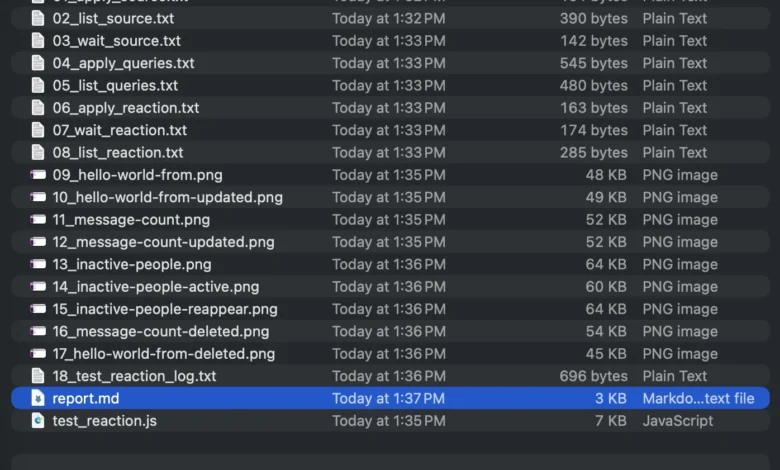

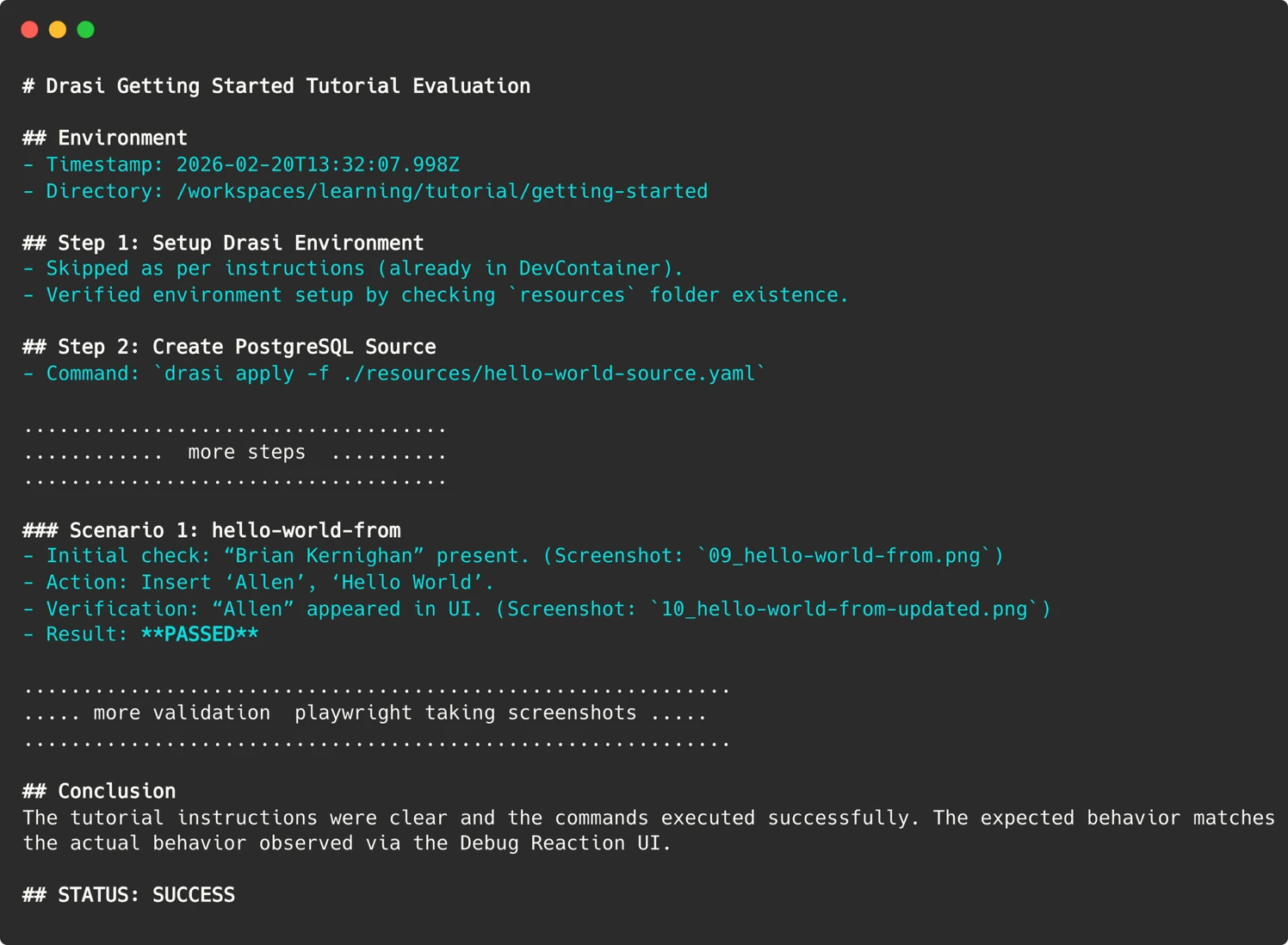

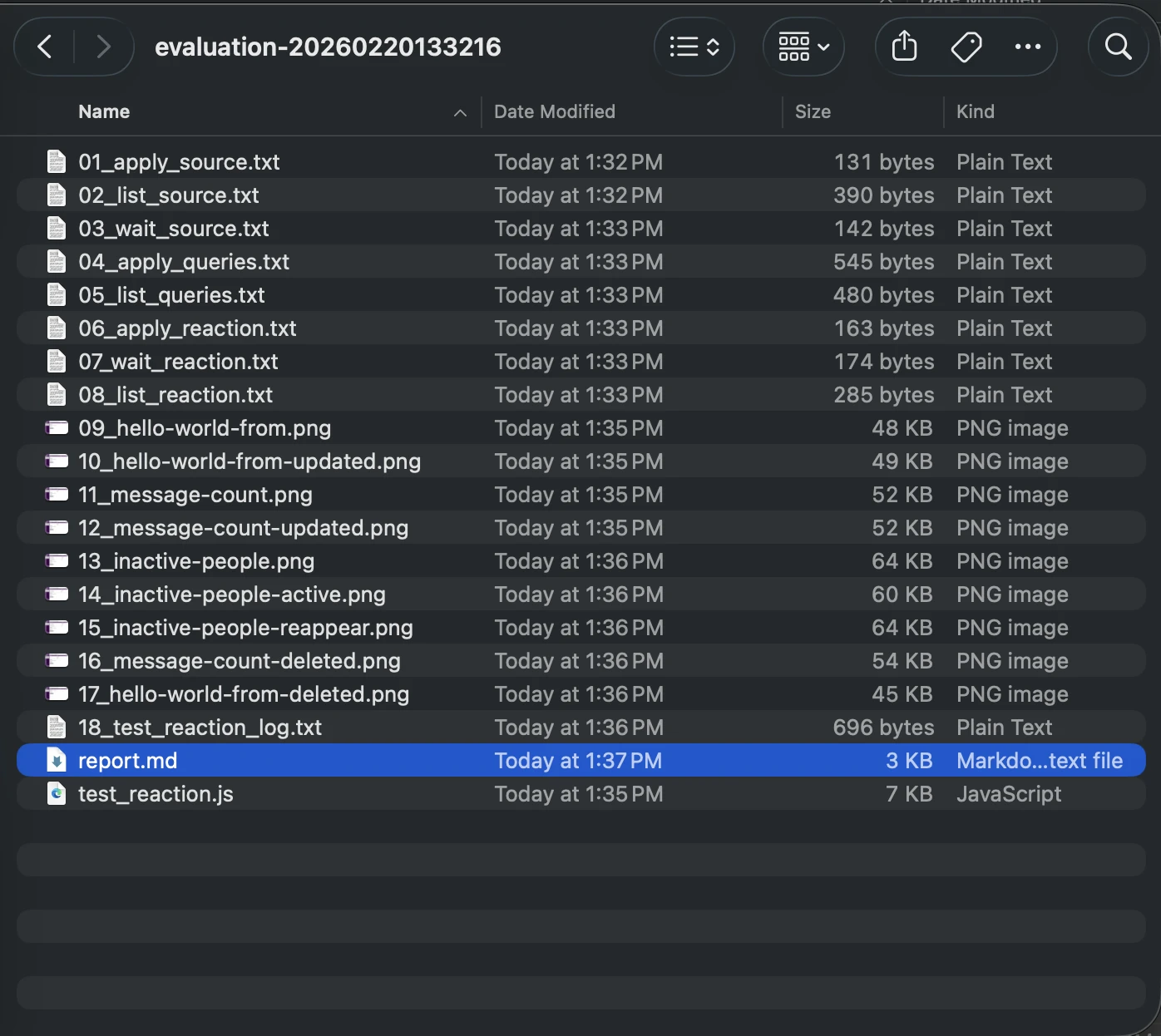
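Core-data field verification from the list above can be illustrated by comparing query results on stable fields only. The Python below is a hypothetical sketch. The choice of volatile fields (here `id`, `timestamp`, and `updated_at`) is an assumption that would differ per tutorial. Row order is ignored on the assumption that it is not meaningful.

```python
from typing import Any

# Hypothetical volatile fields; which ones drift between runs depends on the tutorial.
VOLATILE_FIELDS = frozenset({"id", "timestamp", "updated_at"})


def core_fields(record: dict[str, Any]) -> dict[str, Any]:
    """Drop fields whose values legitimately differ from run to run."""
    return {k: v for k, v in record.items() if k not in VOLATILE_FIELDS}


def results_match(expected: list[dict[str, Any]], actual: list[dict[str, Any]]) -> bool:
    """Compare result rows on core fields only, ignoring row order."""
    def normalize(rows: list[dict[str, Any]]) -> list[str]:
        # repr of the key-sorted items gives a comparable, order-independent form.
        return sorted(repr(sorted(core_fields(r).items())) for r in rows)
    return normalize(expected) == normalize(actual)
```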

Artifacts for Debugging: Unraveling Failures

When an automated test run fails, understanding the root cause is critical. Since the AI agent operates within transient containers, traditional SSH access for debugging is impossible. To overcome this, the agent meticulously preserves evidence from every run. This includes:

- Screenshots of Web UIs: Visual records of the agent’s interaction with web interfaces.

- Terminal Output: Logs of critical commands executed within the container.

- Markdown Report: A detailed narrative explaining the agent’s reasoning and findings.

These artifacts are uploaded to the GitHub Action run summary, enabling the team to "time travel" back to the exact moment of failure, observing the agent’s experience firsthand. This comprehensive logging transforms debugging from a guessing game into a forensic investigation.
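The evidence-gathering step can be sketched assuming a hypothetical one-directory-per-tutorial layout. The function writes the terminal log, the Markdown report, and any screenshots to a place where a later workflow step can upload them, for example with the `actions/upload-artifact` action. File names and layout are illustrative, not the Drasi team's.

```python
from pathlib import Path


def save_artifacts(
    out_dir: Path,
    tutorial: str,
    terminal_log: str,
    report_md: str,
    screenshots: dict[str, bytes],
) -> list[Path]:
    """Write one run's evidence under out_dir/<tutorial>/ and return the paths written."""
    run_dir = out_dir / tutorial
    (run_dir / "screenshots").mkdir(parents=True, exist_ok=True)

    log_path = run_dir / "terminal.log"
    log_path.write_text(terminal_log, encoding="utf-8")
    report_path = run_dir / "report.md"
    report_path.write_text(report_md, encoding="utf-8")
    written = [log_path, report_path]

    # Screenshots arrive as raw image bytes keyed by file name.
    for name, image in screenshots.items():
        shot_path = run_dir / "screenshots" / name
        shot_path.write_bytes(image)
        written.append(shot_path)
    return written
```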

Parsing the Agent’s Report: Bridging AI Output and CI Expectations

Deriving a definitive "Pass/Fail" signal from an LLM’s nuanced output can be challenging. Agents might generate lengthy, qualitative conclusions. To translate these into actionable results for a CI/CD pipeline, the Drasi team employed precise prompt engineering. They explicitly instructed the agent to provide a clear, machine-readable indicator of success or failure. In the GitHub Action, a simple grep command then identifies this specific string, allowing the workflow to set the appropriate exit code. This technique effectively bridges the gap between the probabilistic nature of LLM outputs and the binary expectations of CI systems.
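The pass/fail extraction amounts to a strict parse of one marker line. The Python below assumes a hypothetical `VERDICT: PASS` / `VERDICT: FAIL` marker; the exact string Drasi's prompt requests is not given here. A missing or repeated verdict counts as a failure, so a long, hedged report can never pass by accident. A shell step would do the same with `grep` on the report file.

```python
import re

# Hypothetical marker line the prompt asks the agent to emit exactly once.
VERDICT_RE = re.compile(r"^VERDICT:\s*(PASS|FAIL)\s*$", re.MULTILINE)


def exit_code_from_report(report: str) -> int:
    """Map the agent's report to a CI exit code: 0 only for a single PASS verdict."""
    verdicts = VERDICT_RE.findall(report)
    if len(verdicts) == 1 and verdicts[0] == "PASS":
        return 0
    return 1  # FAIL, no verdict, or conflicting verdicts
```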

Automation and Continuous Integration

The integration of this AI-driven testing framework has produced a fully automated workflow. A scheduled GitHub Actions workflow runs weekly and evaluates all Drasi tutorials in parallel. Each tutorial runs in its own isolated sandbox container, with the AI agent approaching it as a fresh synthetic user. If any tutorial evaluation fails, the workflow automatically files an issue in the Drasi GitHub repository so the failure gets prompt attention.
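The fan-out and failure handling can be sketched as follows. In the Drasi workflow, each tutorial gets its own container. The thread pool here is only a local stand-in, `evaluate` is a hypothetical callback wrapping one synthetic-user run, and the issue text is illustrative. Actually filing the issue, for example with `gh issue create` or the GitHub REST API, is left out.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Optional


def evaluate_all(
    tutorials: list[str], evaluate: Callable[[str], bool], max_workers: int = 4
) -> list[str]:
    """Evaluate every tutorial in parallel; return the failed names in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        outcomes = list(pool.map(evaluate, tutorials))  # map preserves input order
    return [name for name, ok in zip(tutorials, outcomes) if not ok]


def draft_issue(failed: list[str]) -> Optional[dict[str, str]]:
    """Build a title and body for a tracking issue, or None if everything passed."""
    if not failed:
        return None
    bullet_list = "\n".join(f"- {name}" for name in failed)
    return {
        "title": f"Weekly tutorial evaluation: {len(failed)} tutorial(s) failed",
        "body": f"The scheduled synthetic-user run failed for:\n\n{bullet_list}\n",
    }
```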

The workflow can also be triggered on pull requests. To limit security risk, these runs require maintainer approval. The `pull_request_target` trigger ensures that, even for external contributions, the workflow definition that executes is the one on the main branch rather than one modified in the pull request. The Personal Access Token that the Copilot CLI needs is stored as a repository secret. Maintainer approval guards that secret against leaks on pull request runs. The scheduled weekly runs on the main branch execute trusted code and need no approval.
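The trigger policy reduces to a small decision rule, encoded below as a hypothetical Python function. How approval is actually collected is an assumption here; one common option in GitHub Actions is a deployment environment with required reviewers. Approval presumably matters because a pull request run executes the proposed documentation steps while the token is available.

```python
def should_run_evaluation(event_name: str, ref: str, maintainer_approved: bool) -> bool:
    """Decide whether the token-bearing evaluation may run for this event.

    Hypothetical policy mirroring the text: scheduled runs on main execute
    trusted code without approval; pull request runs wait for a maintainer.
    """
    if event_name == "schedule":
        return ref == "refs/heads/main"
    if event_name == "pull_request_target":
        return maintainer_approved
    return False  # any other trigger is not part of this workflow's policy
```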

Discoveries: Bugs That Truly Matter

Since implementing this automated synthetic user system, the Drasi team has conducted over 200 "synthetic user" sessions. This rigorous testing has unearthed 18 distinct issues, ranging from critical environmental configuration problems to subtle documentation ambiguities. The impact of these findings extends beyond the AI agent; by addressing these issues, the team has significantly improved the documentation and user experience for all human developers engaging with Drasi.

AI as a Force Multiplier: Expanding Capabilities Without Expanding Headcount

AI-powered synthetic users are a powerful force multiplier for small teams. The Drasi team is made up of just four engineers. Replicating what the automated system does, running multiple tutorials in fresh environments every week, would by the team's own account require a dedicated QA engineer or a substantial budget for manual testing. Neither is feasible for a team of its size. With synthetic users, the team has effectively gained a tireless QA engineer who works nights, weekends, and holidays.

The Drasi project’s tutorials are now validated weekly by these AI-driven synthetic users, ensuring a consistently high-quality onboarding experience. Developers are encouraged to try the "Getting Started" guide firsthand to witness the results. For projects grappling with similar documentation drift challenges, the Drasi team advocates for exploring GitHub Copilot CLI not merely as a coding assistant, but as a versatile agent capable of executing defined goals within controlled environments, thereby offloading critical but time-consuming tasks that human resources may not be able to accommodate. This innovative approach underscores the transformative potential of AI in bolstering the reliability and accessibility of open-source software.