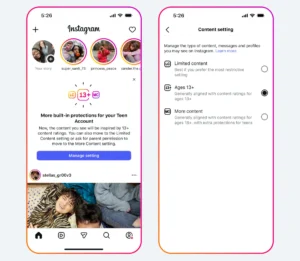

Instagram Expands 13 Plus Content Ratings and Limited Content Settings Globally to Enhance Teen Safety Standards

Instagram has officially announced the international expansion of its age-appropriate content ratings and the "Limited Content" setting, marking a significant shift in how the platform manages the digital experiences of its youngest users. This global rollout builds upon the initial implementation seen in the United Kingdom, United States, Australia, and Canada in October 2025. Under the new framework, teenage users under the age of 18 will be automatically transitioned into a curated 13+ content environment by default. This setting is designed to mirror the content standards of an age-appropriate movie, ensuring that the media consumed by adolescents is aligned with developmental milestones and parental expectations. Crucially, these protections are mandatory for minors; users under 18 will not be permitted to opt out of these restrictive settings without explicit verified permission from a parent or legal guardian.

The core philosophy behind this update is the alignment of social media moderation with established media standards that parents already understand. By adopting criteria similar to those used by the motion picture industry for PG-13 ratings, Instagram aims to provide a predictable and safer environment. While the platform acknowledges that the transition from static cinema to dynamic social media is complex, the goal is to ensure that suggestive content, strong language, and mature themes are kept to an absolute minimum. Meta, the parent company of Instagram, has emphasized that while no automated system can guarantee 100% efficacy, the integration of advanced artificial intelligence and refined policy guidelines represents a robust effort to prioritize teen safety over unrestricted engagement.

Strategic Alignment with Global Media Standards

The decision to benchmark Instagram’s content guidelines against independent movie rating standards is a strategic move to bridge the gap between digital content and traditional media oversight. For decades, parents have relied on ratings like PG-13 to gauge the suitability of films for their children. Instagram’s updated 13+ setting seeks to replicate this experience. In practice, this means that while a teen might occasionally encounter mild suggestive themes or "strong language" typical of a teenage-oriented film, the platform’s algorithms will proactively filter out content that exceeds these boundaries.

This alignment serves two purposes. First, it provides a familiar vocabulary for parents who may feel overwhelmed by the rapidly evolving nature of internet trends. Second, it creates a standardized baseline for content creators. Influencers and brands must now recognize that content reaching teen audiences will be subject to a "13+" filter, which is significantly more stringent than the standard community guidelines applied to adult accounts. This shift reflects a broader industry trend where social media platforms are no longer viewed as "neutral pipes" but as active curators of age-specific environments.

Detailed Content Restrictions and Policy Enhancements

The updated 13+ setting goes beyond previous iterations of Instagram’s safety tools. While the platform has long prohibited the recommendation of sexually suggestive content, graphic violence, and the sale of regulated goods like tobacco or alcohol to minors, the new policies expand into more nuanced categories of potential harm.

One of the primary focuses of the new policy is the restriction of "risky stunts." In recent years, viral challenges—often involving dangerous physical feats or health-threatening activities—have proliferated on social media. Instagram’s refined technology will now proactively identify and hide such content from teen feeds. Furthermore, the platform is cracking down on "marijuana paraphernalia" and other content that, while perhaps legal for adults in certain jurisdictions, is deemed inappropriate for adolescent consumption.

Strong language is another area of focus. While colloquialisms are common in digital communication, the 13+ setting will filter out egregious profanity and verbal abuse. By refining its "Sensitive Content Control" technology, Instagram is moving toward a more sophisticated understanding of context, attempting to distinguish between a teenager using slang and content that promotes a culture of aggression or adult-oriented themes.

The Introduction of "Limited Content" for Maximum Protection

Recognizing that every household has different thresholds for what is considered "appropriate," Instagram is introducing a secondary, even more restrictive tier called the "Limited Content" setting. This feature is specifically designed for parents who believe that even the 13+ movie-style rating may be too mature for their children.

The "Limited Content" setting functions as a high-security mode for Teen Accounts. When activated, it applies the strictest possible filters to the Explore page, Reels, and Feed, removing virtually all content that could be considered borderline or sensitive. The most significant change within this setting, however, is the removal of comments: teens in "Limited Content" mode will lose the ability to see, leave, or receive comments on posts.
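As a rough illustration of how the tiers described above differ, the following Python sketch models the comment behavior of each setting. It is not Meta's implementation: the names `ContentSetting`, `CommentPermissions`, and `comment_permissions` are hypothetical, and the only rule encoded is the one stated in the article, namely that "Limited Content" removes the ability to see, leave, or receive comments.

```python
from dataclasses import dataclass
from enum import Enum


class ContentSetting(Enum):
    """Hypothetical labels for the tiers described in the article."""
    STANDARD = "standard"          # assumed label for adult accounts
    THIRTEEN_PLUS = "13+"          # default for users under 18
    LIMITED_CONTENT = "limited"    # stricter tier chosen by parents


@dataclass(frozen=True)
class CommentPermissions:
    can_see: bool
    can_leave: bool
    can_receive: bool


def comment_permissions(setting: ContentSetting) -> CommentPermissions:
    """Return the comment permissions implied by a content setting.

    Only the Limited Content rule comes from the article; treating the
    other tiers as fully comment-enabled is a simplifying assumption.
    """
    if setting is ContentSetting.LIMITED_CONTENT:
        return CommentPermissions(can_see=False, can_leave=False, can_receive=False)
    return CommentPermissions(can_see=True, can_leave=True, can_receive=True)


if __name__ == "__main__":
    for setting in ContentSetting:
        print(setting.value, comment_permissions(setting))
```

Modeling the permissions as three separate flags mirrors the article's wording (see, leave, receive) and would allow a finer-grained policy if one were ever described.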

This move is a direct response to feedback from child psychologists and safety advocates who have highlighted the role of comment sections in cyberbullying and the development of social anxiety. By removing the feedback loop of the comment section, the "Limited Content" setting transforms Instagram into a more passive, consumption-only experience, effectively neutralizing the platform’s most common avenues for peer-to-peer harassment.

A Chronology of Meta’s Teen Safety Evolution

The international expansion of these settings is the latest chapter in a multi-year effort by Meta to address growing concerns regarding adolescent mental health and online safety. The timeline of these developments reveals a clear trajectory toward increased platform accountability:

- September 2021: Following internal research leaks and public scrutiny, Meta paused the development of "Instagram Kids," a version of the app intended for children under 13.

- March 2022: Instagram introduced "Parental Supervision" tools, allowing parents to see how much time their teens spent on the app and set limits.

- January 2024: Meta announced new measures to hide content related to self-harm and eating disorders from teen accounts, regardless of whether the teen was following the accounts posting such content.

- September 2024: The "Teen Accounts" initiative was launched, making many safety settings (such as private accounts and messaging restrictions) automatic for users under 18.

- October 2025: The 13+ content rating and Limited Content settings were rolled out in the UK, US, Australia, and Canada.

- Present Day: These features are now being expanded internationally, covering all global markets where Instagram operates.

This chronology demonstrates that the current update is not an isolated event but part of a systematic overhaul of the platform’s architecture for minors.

Supporting Data and the Urgency of Reform

The impetus for these changes is supported by a growing body of data concerning teen social media usage. According to recent surveys by the Pew Research Center, nearly 95% of teens in developed markets have access to a smartphone, and over 60% report using Instagram daily. Furthermore, academic studies have frequently linked high levels of social media consumption with "upward social comparison," where teens compare their lives to curated, often unrealistic, digital portrayals, leading to decreased self-esteem.

Internal data from safety organizations suggests that the most vulnerable period for a teen online is between the ages of 13 and 15, as they transition from moderated children’s content to the open web. By implementing a default 13+ setting that can only be relaxed with parental permission, Instagram is effectively creating a "learner’s permit" for the internet. Meta’s own metrics indicate that when safety settings are "opt-in," adoption is low, but when they are enabled by default and opting out is restricted by parental controls, the protective impact is significantly higher.
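The default-on, parent-gated behavior described above can be sketched as a small decision rule. This is a minimal illustration under stated assumptions, not Instagram's actual logic: the function names, the string labels for the settings, and the strictness ordering are all hypothetical, and the rule that a teen may move to a stricter setting without approval is an assumption the article does not confirm.

```python
ADULT_AGE = 18

# Hypothetical strictness ordering: a higher number means more restrictive.
STRICTNESS = {"standard": 0, "13+": 1, "limited": 2}


def default_setting(age: int) -> str:
    """Users under 18 start in the 13+ setting by default."""
    return "13+" if age < ADULT_AGE else "standard"


def apply_change(age: int, current: str, requested: str,
                 parent_approved: bool = False) -> str:
    """Return the setting in effect after a change request.

    Adults may choose freely. A minor may relax a setting only with
    parental approval; moving to an equal or stricter setting is
    allowed without approval (an assumption, not stated in the article).
    """
    if age >= ADULT_AGE:
        return requested
    if STRICTNESS[requested] >= STRICTNESS[current]:
        return requested
    return requested if parent_approved else current


if __name__ == "__main__":
    start = default_setting(15)
    print(start)
    print(apply_change(15, start, "standard"))
    print(apply_change(15, start, "standard", parent_approved=True))
```

In this sketch the protective setting is the starting state and the approval check sits on the path away from it, which is the "opt-out with parental controls" pattern the paragraph contrasts with "opt-in" tools.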

Technological Infrastructure and Proactive Identification

The success of these global safety measures relies heavily on Meta’s underlying technology. To identify content that violates the 13+ guidelines, Instagram employs a combination of Large Language Models (LLMs) and computer vision. These systems are trained to recognize not just "banned" words, but the intent and context of a post.

For example, the AI must be able to distinguish between a documentary clip about the history of tobacco (which might be educational) and a Reel glamorizing "vaping" (which would be restricted). The platform has also improved its "proactive identification" capabilities, which allow the system to flag and hide content before it is even reported by a user. This is particularly vital for preventing the spread of viral challenges that can result in physical harm.

Meta has acknowledged the technical hurdles involved in global moderation, particularly regarding different languages and cultural nuances. The international expansion includes localized AI training to ensure that "strong language" and "suggestive content" are accurately identified across various dialects and regional contexts.

Regulatory Pressure and the Global Legal Landscape

The expansion of these features also coincides with a period of intense regulatory pressure. In the European Union, the Digital Services Act (DSA) mandates that "very large online platforms" (VLOPs) implement high levels of privacy, safety, and security for minors. Failure to comply can result in fines of up to 6% of global annual turnover.

In the United Kingdom, the Online Safety Act has placed a "duty of care" on tech companies to protect children from harmful content. Similarly, in the United States, various states have passed legislation requiring age verification and parental consent for social media use. By standardizing its 13+ and Limited Content settings globally, Meta is essentially creating a unified compliance framework that satisfies the strictest requirements of multiple jurisdictions simultaneously.

Reactions from Advocacy Groups and the Public

The reaction to Instagram’s latest update has been largely positive among parental advocacy groups, though some digital rights organizations remain cautious. Groups like "Common Sense Media" have praised the move toward default protections, noting that placing the burden of safety on the platform rather than the individual teen is a necessary step.

However, some privacy advocates have raised concerns about the "parental permission" aspect. They argue that in households where the parent-child relationship is strained, or where parents are not tech-savvy, these settings might inadvertently isolate teens from helpful communities or information. Furthermore, there is ongoing debate about the effectiveness of age-verification technology, as many teens circumvent age gates by misrepresenting their birth year during sign-up. In response, Instagram has continued to develop "age estimation" tools that analyze user behavior and account connections to check whether a user’s stated age is consistent with their actual activity.

Broader Impact and the Future of Social Media

The international expansion of Instagram’s 13+ content rating signals a broader shift in the social media industry. For years, the prevailing model was "growth at all costs," with minimal friction for new users. Today, the industry is moving toward a "safety by design" model, where friction—in the form of age gates and parental controls—is seen as a necessary feature rather than a bug.

This change is likely to influence other platforms. As Instagram sets a high bar for teen protection, competitors like TikTok and Snapchat will face increased pressure from both regulators and users to implement similar movie-style rating systems. The long-term implication is a bifurcated internet: an open, less-regulated space for adults and a highly curated, moderated, and parentally-supervised "walled garden" for minors.

As Instagram continues to refine its algorithms and respond to feedback, the platform’s commitment to improving these systems over time will be tested. For now, the global rollout of 13+ ratings and Limited Content settings stands as one of the most comprehensive attempts by a major social media company to reconcile the benefits of digital connectivity with the fundamental need to protect the well-being of the next generation. Meta’s hope is that these updates will not only protect teens but also restore parental trust in a platform that has become a central part of modern adolescent life.